templateEnsemble

Ensemble learning template

Syntax

Description

t = templateEnsemble(Method,NLearn,Learners) returns an ensemble learning template that specifies to use the ensemble aggregation method Method, NLearn learning cycles, and weak learners Learners.

All other options of the template (t) specific to ensemble learning appear empty, but the software uses their corresponding default values during training.

t = templateEnsemble(Method,NLearn,Learners,Name,Value) returns an ensemble template with additional options specified by one or more name-value pair arguments.

For example, you can specify the number of predictors in each random subspace learner, the learning rate for shrinkage, or the target classification error for RobustBoost.

If you display t in the Command Window, then all options appear empty ([]), except those options that you specify using name-value pair arguments. During training, the software uses default values for empty options.
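The two syntaxes described above can be sketched as follows. This is a minimal illustration, not a canonical example: the method names, the learning-rate value, and the use of the fisheriris sample data set with fitcecoc are choices made here for demonstration.

```matlab
% Basic syntax: AdaBoostM1 with 100 learning cycles of tree weak learners.
% Displaying t1 shows most ensemble options as empty ([]).
t1 = templateEnsemble('AdaBoostM1',100,'tree')

% Name-value syntax: additionally set the learning rate for shrinkage.
% The value 0.1 is illustrative only.
t2 = templateEnsemble('GentleBoost',100,'tree','LearnRate',0.1)

% One possible use of a template: as the binary learner of an ECOC model.
load fisheriris                       % sample data: meas (predictors), species (labels)
Mdl = fitcecoc(meas,species,'Learners',t1);
```

A template does not train anything by itself. It only records the settings that a fitting function such as fitcecoc applies later.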

Examples

Input Arguments

Name-Value Arguments

Output Arguments

Tips

NLearn can vary from a few dozen to a few thousand. Usually, an ensemble with good predictive power requires from a few hundred to a few thousand weak learners. However, you do not have to train an ensemble for that many cycles at once. You can start by growing a few dozen learners, inspect the ensemble performance and then, if necessary, train more weak learners using resume for classification problems, or resume for regression problems.

Ensemble performance depends on the ensemble setting and the setting of the weak learners. That is, if you specify weak learners with default parameters, then the ensemble can perform poorly. Therefore, like ensemble settings, it is good practice to adjust the parameters of the weak learners using templates, and to choose values that minimize generalization error.
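The incremental workflow in the first tip might look like the sketch below. It assumes the ionosphere sample data set, a 30% holdout split, and illustrative values for the number of cycles and tree splits. None of these choices come from this page.

```matlab
load ionosphere                        % X: predictors, Y: cell array of 'b'/'g' labels
rng(1)                                 % for reproducibility

% Hold out 30% of the data to estimate generalization error.
cvp    = cvpartition(Y,'HoldOut',0.3);
Xtrain = X(training(cvp),:);   Ytrain = Y(training(cvp));
Xtest  = X(test(cvp),:);       Ytest  = Y(test(cvp));

% Weak-learner settings chosen through a template (illustrative value).
tTree = templateTree('MaxNumSplits',5);

% Start with a few dozen learning cycles.
Mdl = fitcensemble(Xtrain,Ytrain,'Method','AdaBoostM1', ...
    'NumLearningCycles',50,'Learners',tTree);
lossAt50 = loss(Mdl,Xtest,Ytest);

% Inspect the error, then train 50 more cycles without starting over.
Mdl = resume(Mdl,50);
lossAfterResume = loss(Mdl,Xtest,Ytest);

fprintf('Holdout error: %.3f after 50 cycles, %.3f after %d cycles\n', ...
    lossAt50,lossAfterResume,Mdl.NumTrained);
```

If the holdout error has stopped improving between the two checkpoints, further calls to resume are unlikely to help and the weak-learner settings are the better place to look.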

If you specify to resample using Resample, then it is good practice to resample the entire data set. That is, use the default setting of 1 for FResample.

In classification problems (that is, Type is 'classification'):

If the ensemble aggregation method (Method) is 'bag' and:

The misclassification cost is highly imbalanced, then, for in-bag samples, the software oversamples unique observations from the class that has a large penalty.

The class prior probabilities are highly skewed, then the software oversamples unique observations from the class that has a large prior probability.

For smaller sample sizes, these combinations can result in a very low relative frequency of out-of-bag observations from the class that has a large penalty or prior probability. Consequently, the estimated out-of-bag error is highly variable and might be difficult to interpret. To avoid large estimated out-of-bag error variances, particularly for small sample sizes, set a more balanced misclassification cost matrix using the Cost name-value pair argument of the fitting function, or a less skewed prior probability vector using the Prior name-value pair argument of the fitting function.

Because the order of some input and output arguments corresponds to the distinct classes in the training data, it is good practice to specify the class order using the ClassNames name-value pair argument of the fitting function.

To quickly determine the class order, remove all observations from the training data that are unclassified (that is, have a missing label), obtain and display an array of all the distinct classes, and then specify the array for ClassNames. For example, suppose the response variable (Y) is a cell array of labels. This code specifies the class order in the variable classNames.

    Ycat = categorical(Y);
    classNames = categories(Ycat)

categorical assigns <undefined> to unclassified observations and categories excludes <undefined> from its output. Therefore, if you use this code for cell arrays of labels or similar code for categorical arrays, then you do not have to remove observations with missing labels to obtain a list of the distinct classes.

To specify that the class order should be from lowest-represented label to most-represented, quickly determine the class order as described previously, but arrange the classes in the list by frequency before passing the list to ClassNames. Following from the previous example, this code specifies the class order from lowest- to most-represented in classNamesLH.

    Ycat = categorical(Y);
    classNames = categories(Ycat);
    freq = countcats(Ycat);
    [~,idx] = sort(freq);
    classNamesLH = classNames(idx);
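As a rough sketch of how the class-order and prior tips combine in a call to a fitting function, the code below bags trees on the ionosphere sample data set, passes an explicit class order, and requests a uniform prior before estimating the out-of-bag error. The data set, the number of learning cycles, and the choice of 'uniform' are assumptions made for illustration.

```matlab
load ionosphere                        % X: predictors, Y: cell array of 'b'/'g' labels
rng(1)                                 % for reproducibility

% Determine the class order from the labels.
Ycat = categorical(Y);
classNames = categories(Ycat);

% Bag trees with an explicit class order and a less skewed (uniform) prior.
Mdl = fitcensemble(X,Y,'Method','Bag','NumLearningCycles',100, ...
    'ClassNames',classNames,'Prior','uniform');

% Out-of-bag estimate of the classification error.
oobErr = oobLoss(Mdl);
fprintf('Out-of-bag error: %.3f\n',oobErr);
```

On a small or heavily imbalanced data set, repeating this with different random seeds gives a feel for how variable the out-of-bag estimate is.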

Algorithms

For details of ensemble aggregation algorithms, see Ensemble Algorithms.

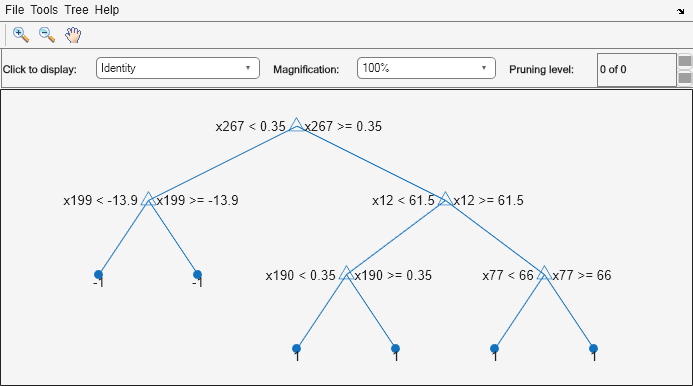

If you specify Method to be a boosting algorithm and Learners to be decision trees, then the software grows stumps by default. A decision stump is one root node connected to two terminal, leaf nodes. You can adjust tree depth by specifying the MaxNumSplits, MinLeafSize, and MinParentSize name-value pair arguments using templateTree.

The software generates in-bag samples by oversampling classes with large misclassification costs and undersampling classes with small misclassification costs. Consequently, out-of-bag samples have fewer observations from classes with large misclassification costs and more observations from classes with small misclassification costs. If you train a classification ensemble using a small data set and a highly skewed cost matrix, then the number of out-of-bag observations per class might be very low. Therefore, the estimated out-of-bag error can have a large variance and might be difficult to interpret. The same phenomenon can occur for classes with large prior probabilities.
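Tree depth for boosted ensembles can be set explicitly through templateTree, as in this sketch. The split and leaf-size values, the GentleBoost method, and the ionosphere sample data set are illustrative assumptions. The stump template is written out explicitly so that the example does not rely on any default.

```matlab
load ionosphere                        % X: predictors, Y: cell array of 'b'/'g' labels
rng(1)                                 % for reproducibility

% A stump: one split, that is, a root node with two leaf nodes.
tStump  = templateTree('MaxNumSplits',1);

% A deeper weak learner (illustrative values).
tDeeper = templateTree('MaxNumSplits',8,'MinLeafSize',5);

% Boost each kind of tree for the same number of cycles.
MdlStump  = fitcensemble(X,Y,'Method','GentleBoost', ...
    'NumLearningCycles',50,'Learners',tStump);
MdlDeeper = fitcensemble(X,Y,'Method','GentleBoost', ...
    'NumLearningCycles',50,'Learners',tDeeper);

% The same tree template can also be the Learners input of templateEnsemble.
tEns = templateEnsemble('GentleBoost',50,tDeeper);

fprintf('Resubstitution error: %.3f (stumps), %.3f (deeper trees)\n', ...
    resubLoss(MdlStump),resubLoss(MdlDeeper));
```

Resubstitution error usually favors the deeper trees, so compare cross-validated or holdout error before settling on a depth.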

For the RUSBoost ensemble aggregation method (Method), the name-value pair argument RatioToSmallest specifies the sampling proportion for each class with respect to the lowest-represented class. For example, suppose that there are two classes in the training data, A and B. A has 100 observations and B has 10 observations. Also, suppose that the lowest-represented class has m observations in the training data.

If you set 'RatioToSmallest',2, then s*m = 2*10 = 20. Consequently, the software trains every learner using 20 observations from class A and 20 observations from class B. If you set 'RatioToSmallest',[2 2], then you obtain the same result.

If you set 'RatioToSmallest',[2,1], then s1*m = 2*10 = 20 and s2*m = 1*10 = 10. Consequently, the software trains every learner using 20 observations from class A and 10 observations from class B.
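The arithmetic in this example can be checked with a few lines of code, followed by a sketch of how the option might be passed to a fitting function. The synthetic data, the variable names, and the number of learning cycles are hypothetical and exist only to make the example runnable.

```matlab
% Class sizes from the example: A has 100 observations, B has 10.
classCounts = [100 10];
m = min(classCounts);                  % size of the lowest-represented class (10)

nScalar = 2 * m * ones(1,2);           % 'RatioToSmallest',2      -> [20 20]
nVector = [2 1] .* m;                  % 'RatioToSmallest',[2,1]  -> [20 10]

% Synthetic two-class data with the same class sizes (hypothetical).
rng(1)                                 % for reproducibility
X = [randn(100,2); randn(10,2) + 2];
Y = [repmat({'A'},100,1); repmat({'B'},10,1)];

% Fix the class order so that the ratio vector lines up with [A B].
Mdl = fitcensemble(X,Y,'Method','RUSBoost','NumLearningCycles',50, ...
    'ClassNames',{'A','B'},'RatioToSmallest',[2 1]);
```

Specifying ClassNames alongside a vector-valued RatioToSmallest avoids any ambiguity about which element applies to which class.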

For ensembles of decision trees, and for dual-core systems and above, fitcensemble and fitrensemble parallelize training using Intel® Threading Building Blocks (TBB). For details on Intel TBB, see https://www.intel.com/content/www/us/en/developer/tools/oneapi/onetbb.html.

References

[1] Freund, Y. “A more robust boosting algorithm.” arXiv:0905.2138v1, 2009.

Version History

Introduced in R2014b