Experiment Manager

Create and run experiments to train and compare deep learning networks

Description

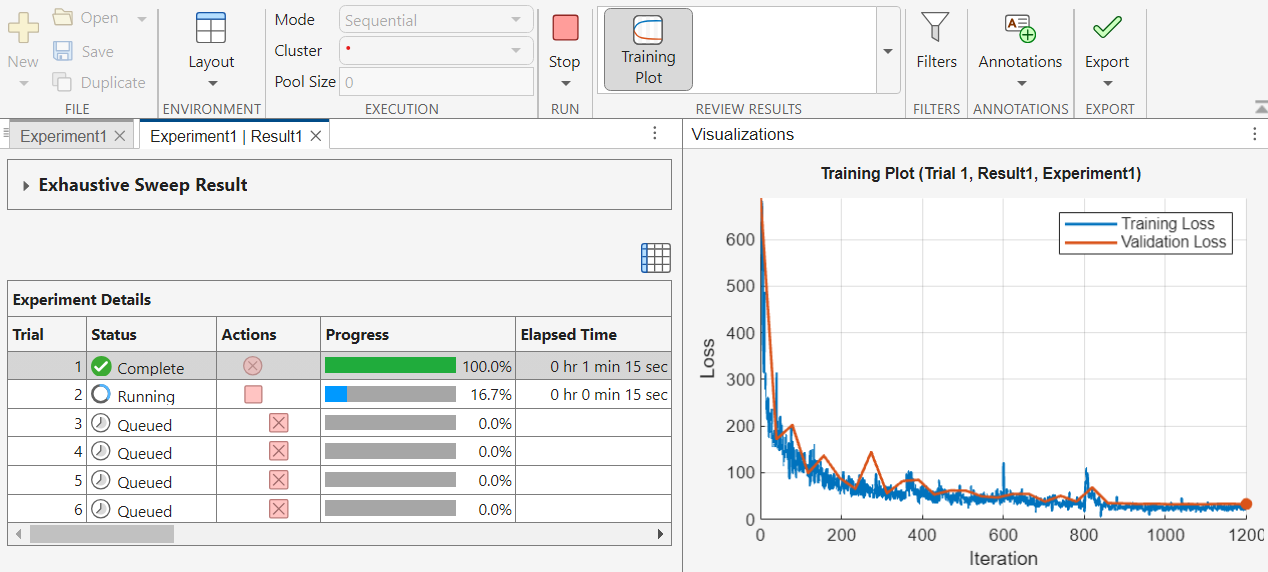

You can use the Experiment Manager app to create deep learning experiments to train networks under different training conditions and compare the results. For example, you can use Experiment Manager to:

Sweep through a range of hyperparameter values, use Bayesian optimization to find optimal training options, or randomly sample hyperparameter values from probability distributions. The Bayesian optimization and random sampling strategies require Statistics and Machine Learning Toolbox™.

Use the built-in function trainnet or define your own custom training function.

Compare the results of using different data sets or test different deep network architectures.
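To make the sweep strategy more concrete, the sketch below lists the trials that an exhaustive sweep would cover: one trial for every combination of hyperparameter values. It uses base MATLAB only and is an illustration, not the app's implementation. The hyperparameter names and candidate values are hypothetical.

```matlab
% Hypothetical hyperparameters and candidate values (not from this page).
learnRates = [0.001 0.01 0.1];
solvers = ["sgdm" "adam"];

% Build index grids so that every learn rate is paired with every solver.
[i, j] = ndgrid(1:numel(learnRates), 1:numel(solvers));

% One table row per trial: 3 learn rates x 2 solvers = 6 trials.
trials = table(learnRates(i(:)).', solvers(j(:)).', ...
    'VariableNames', {'InitialLearnRate', 'Solver'});
disp(trials)
```

Each added hyperparameter multiplies the number of trials, which is one reason to consider the random sampling or Bayesian optimization strategies for larger search spaces.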

To set up your experiment quickly, you can start with a preconfigured template. The experiment templates support workflows that include image classification and regression, sequence classification, audio classification, signal processing, semantic segmentation, and custom training loops.

The Experiment Browser panel displays the hierarchy of experiments and results in a project. The icon next to the experiment name indicates its type.

- Built-in training experiment that uses the trainnet training function

- Custom training experiment that uses a custom training function

- General-purpose experiment that uses a user-authored experiment function

This page contains information about built-in and custom training experiments for Deep Learning Toolbox™. For general information about using the app, see Experiment Manager. For information about using Experiment Manager with the Classification Learner and Regression Learner apps, see Experiment Manager (Statistics and Machine Learning Toolbox).

Required Products

Use Deep Learning Toolbox to run built-in or custom training experiments for deep learning and to view confusion matrices for these experiments.

Use Statistics and Machine Learning Toolbox to run custom training experiments for machine learning and experiments that use Bayesian optimization.

Use Parallel Computing Toolbox™ to run multiple trials at the same time or a single trial on multiple GPUs, on a cluster, or in the cloud. For more information, see Run Experiments in Parallel.

Use MATLAB® Parallel Server™ to offload experiments as batch jobs in a remote cluster. For more information, see Offload Experiments as Batch Jobs to a Cluster.

Open the Experiment Manager App

MATLAB Toolstrip: On the Apps tab, under MATLAB, click the Experiment Manager icon.

MATLAB command prompt: Enter experimentManager.

For general information about using the app, see Experiment Manager.

Examples

Related Examples

- Compare Classification Network Architectures Using Experiment

- Compare Dropout Probabilities and Filter Configurations for Image Regression Using Experiment

- Evaluate Deep Learning Experiments by Using Metric Functions

- Tune Experiment Hyperparameters by Using Bayesian Optimization

- Use Bayesian Optimization in Custom Training Experiments

- Try Multiple Pretrained Networks for Transfer Learning

- Experiment with Weight Initializers for Transfer Learning

- Audio Transfer Learning Using Experiment Manager

- Choose Training Configurations for LSTM Using Bayesian Optimization

- Run a Custom Training Experiment for Image Comparison

- Use Experiment Manager to Train Generative Adversarial Networks (GANs)

- Custom Training with Multiple GPUs in Experiment Manager

More About

Tips

To visualize and build a network, use the Deep Network Designer app.

To reduce the size of your experiments, discard the results and visualizations of any trial that is no longer relevant. In the Actions column of the results table, click the Discard button for the trial.

In your setup function, access the hyperparameter values using dot notation. For more information, see Structure Arrays.
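As a minimal sketch of the dot notation tip, the setup function below reads one hyperparameter from the params structure and passes it to trainingOptions. The function name Experiment_setup, the hyperparameter name InitialLearnRate, the small network, and the four-output signature (matching the inputs of trainnet) are assumptions chosen for illustration. Adapt them to the template that your experiment uses.

```matlab
function [imds, layers, lossFcn, options] = Experiment_setup(params)
% Hypothetical setup function for a built-in training experiment.
% params is a structure with one field per hyperparameter.

% Read the hyperparameter value with dot notation. InitialLearnRate is an
% assumed hyperparameter name, not one defined on this page.
learnRate = params.InitialLearnRate;

% Digit images that ship with Deep Learning Toolbox, labeled by folder name.
dataFolder = fullfile(matlabroot, "toolbox", "nnet", "nndemos", ...
    "nndatasets", "DigitDataset");
imds = imageDatastore(dataFolder, ...
    IncludeSubfolders=true, LabelSource="foldernames");

% Small illustrative network for 28-by-28 grayscale images and 10 classes.
layers = [
    imageInputLayer([28 28 1])
    convolution2dLayer(3, 8, Padding="same")
    batchNormalizationLayer
    reluLayer
    fullyConnectedLayer(10)
    softmaxLayer];

% Loss function name passed through to trainnet.
lossFcn = "crossentropy";

% The hyperparameter value from params drives the training options.
options = trainingOptions("sgdm", ...
    InitialLearnRate=learnRate, ...
    MaxEpochs=2, ...
    Verbose=false);
end
```

Because params is an ordinary structure, you can check the function outside the app by calling it with a hand-built input such as struct("InitialLearnRate", 0.01).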

For networks containing batch normalization layers, if the BatchNormalizationStatistics training option is population, Experiment Manager displays final validation metric values that are often different from the validation metrics evaluated during training. The difference in values is the result of additional operations performed after the network finishes training. For more information, see Batch Normalization Layer.
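For reference, the option named above is set in trainingOptions. The sketch below shows the population setting next to the moving setting, assuming the option values documented for trainingOptions in recent releases. The sgdm solver is an arbitrary choice for illustration.

```matlab
% "population": batch normalization statistics are computed in an extra
% pass over the training data after training finishes.
optionsPopulation = trainingOptions("sgdm", ...
    BatchNormalizationStatistics="population");

% "moving": the statistics are a running estimate updated during training,
% so no extra pass is made after training.
optionsMoving = trainingOptions("sgdm", ...
    BatchNormalizationStatistics="moving");
```

With the moving setting, the final metrics may track the in-training validation metrics more closely, but confirm this against the Batch Normalization Layer documentation for your release.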

Version History

Introduced in R2020a

See Also

Apps

- Experiment Manager | Experiment Manager (Statistics and Machine Learning Toolbox) | Deep Network Designer