AI-Native Fully Convolutional Receiver

This example trains and evaluates an AI-native, fully convolutional receiver known as DeepRx [1]. With DeepRx, you replace channel estimation, equalization, and symbol demodulation with a deep convolutional neural network (DCNN) on the receive side of a 5G New Radio (NR) link. DeepRx outperforms the conventional 5G receiver under high Doppler shift and sparse pilot (DM-RS) configurations.

The example builds an end-to-end physical uplink shared channel (PUSCH) link—transmitter, channel, and receiver—to generate training, validation, and test data. It then evaluates DeepRx and compares its performance with a conventional receiver.

Introduction

AI-native air interface (AI-AI) integrates AI/ML into physical layer (PHY) processing to improve system performance and reduce energy consumption for networks beyond 5G. This approach departs from the traditional design-and-productization flow by making AI/ML a core PHY component [2, 3].

The 3rd Generation Partnership Project (3GPP) defines AI/ML applications for channel state information (CSI) compression, beam management, and positioning. For related workflows, see Train Autoencoders for CSI Feedback Compression, Neural Network for Beam Selection, and AI for Positioning Accuracy Enhancement. In Release 19, 3GPP continues work on beam management and positioning and provides specification support [4, 5].

This example implements an AI-AI receiver that replaces several 5G receiver blocks with AI/ML counterparts to improve coded bit-error rate (BER) and throughput. For a PyTorch™ coexecution testbench that verifies an AI-native receiver, see Verify Performance of 6G AI-Native Receiver Using MATLAB and PyTorch Coexecution.

System Description

This example adapts the NR PUSCH Throughput example to generate training, validation, and test data for an online-learning workflow. In online learning, the network trains and validates on a continuous stream of generated data instead of on a stored dataset. This avoids batch learning, which keeps most of the dataset in memory and can be impractical because of memory and storage limits.

The example generates mini-batches in parallel on CPU workers in the background. The training algorithm retrieves one mini-batch at a time and trains the neural network on the GPU. While the network trains on the GPU in the foreground, the CPU workers continue to generate data in the background. Background generation frees the GPU to focus on training.

With parallel execution enabled, the example keeps only one mini-batch in memory for each training, validation, and test iteration. For details on the parallel setup and data pipeline, see the Train DeepRx Network section.

For each signal-to-noise ratio (SNR) point, simulate a PUSCH transmission and process the received signal as follows:

Generate an OFDM-modulated waveform that includes PUSCH and PUSCH demodulation reference signals (DM-RS).

Pass the waveform through a clustered delay line (CDL) or tapped delay line (TDL) fading channel, and add additive white Gaussian noise (AWGN) at the target SNR.

Synchronize the received waveform and OFDM-demodulate it to a resource grid.

Estimate the channel:

Perfect estimation: reconstruct the channel impulse response (CIR) and convert it to frequency-domain channel estimates.

Practical estimation: estimate the channel from the PUSCH DM-RS.

Extract the PUSCH resource elements and corresponding channel estimates. Equalize the PUSCH symbols using a minimum mean-square error (MMSE) equalizer.

Demodulate and descramble the equalized symbols to estimate the codewords.

Decode the uplink shared channel (UL-SCH): process soft bits, decode the codeword, and compute the block cyclic redundancy check (CRC).

Use the soft bits and transport blocks to compute the throughput and coded bit error rate (BER).
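For the SIMO configuration used here, the MMSE equalization step reduces to combining the receive-antenna observations on each RE. The following sketch assumes a single transmission layer, a known noise variance N0, and synthetic QPSK data; the variable names are hypothetical, and the code illustrates the per-RE formula rather than the implementation of the 5G Toolbox nrEqualizeMMSE function.

```matlab
% Minimal SIMO MMSE equalizer sketch (illustrative; not nrEqualizeMMSE)
F = 312; S = 14; Nrx = 2;   % grid dimensions used in this example
N0 = 0.1;                   % assumed noise variance per RE and receive antenna

% Hypothetical test data: unit-power QPSK symbols and a Rayleigh channel
bitsI = randi([0 1],F,S);
bitsQ = randi([0 1],F,S);
X = ((1 - 2*bitsI) + 1i*(1 - 2*bitsQ))/sqrt(2);        % F-by-S symbols, bit 0 -> +1
H = (randn(F,S,Nrx) + 1i*randn(F,S,Nrx))/sqrt(2);      % F-by-S-by-Nrx channel
W = sqrt(N0/2)*(randn(F,S,Nrx) + 1i*randn(F,S,Nrx));   % AWGN with variance N0
Y = H.*X + W;                                          % X expands along dim 3

% Per-RE MMSE for one layer: xhat = (h'*y)/(h'*h + N0), sums over antennas
hHy = sum(conj(H).*Y,3);
hHh = sum(abs(H).^2,3);
xEq = hHy./(hHh + N0);                                 % F-by-S equalized symbols

% Post-equalization SINR per RE for a single layer
sinrEq = hHh/N0;

% Hard QPSK decisions as a quick sanity check
bitErrRate = mean([(real(xEq(:)) < 0) ~= bitsI(:); (imag(xEq(:)) < 0) ~= bitsQ(:)]);
```

Compared with zero-forcing (dividing by hHh alone), the added N0 term keeps the equalizer from amplifying noise on REs with a weak channel.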

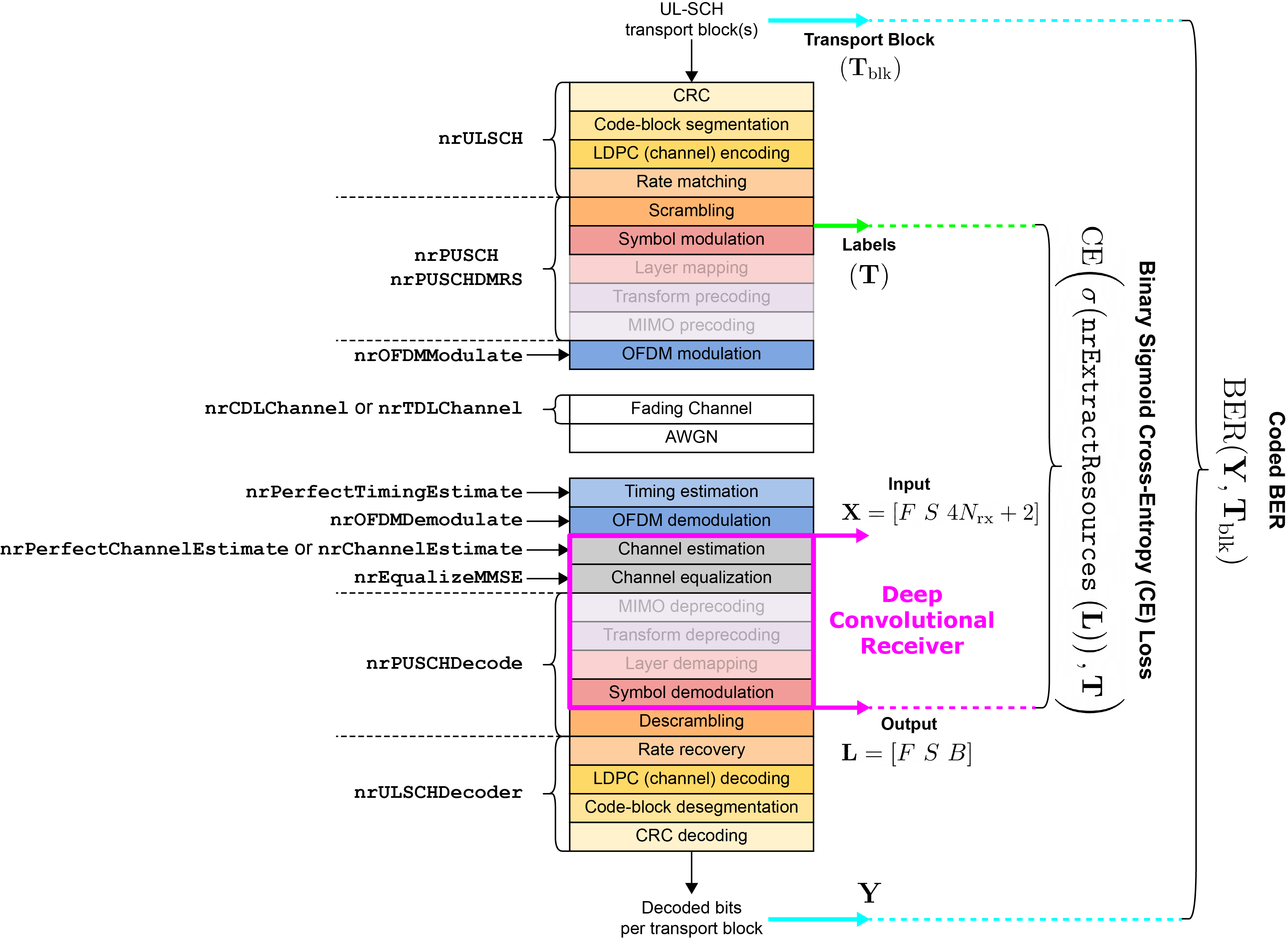

The diagram maps each PUSCH processing step to the corresponding 5G Toolbox™ functions. Faded blocks indicate operations that this example omits because it uses a SIMO configuration (1 transmit antenna, 2 receive antennas).

DeepRx replaces channel estimation, channel equalization, and symbol demodulation. Here, F is the number of subcarriers, S is the number of OFDM symbols in time, and Nrx is the number of receive antennas. During training, the loss is binary cross-entropy (BCE) with a sigmoid output, computed between the predicted bit log-likelihood ratios (LLRs) and the ground-truth bit labels. For evaluation, the example uses the transport blocks and the number of block errors to compute coded BER and throughput. Hybrid automatic repeat request (HARQ) is disabled because it affects only the processing after LLR computation and does not change the DeepRx-estimated LLRs.
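The BCE loss with a sigmoid output can be evaluated directly on the predicted LLRs by treating them as logits. The sketch below is a minimal illustration under an assumed sign convention (a positive value favors bit 1), which may differ from the convention that the example or 5G Toolbox uses; bceFromLogits is a hypothetical helper name, not a toolbox function.

```matlab
% Numerically stable BCE with a sigmoid output, evaluated on logits
% Assumed sign convention: z = log(P(b=1)/P(b=0)), so sigmoid(z) = P(b=1)
% y holds ground-truth bits (0 or 1) and has the same size as z
bceFromLogits = @(z,y) mean(reshape(max(z,0) - z.*y + log1p(exp(-abs(z))),[],1));

z = [2.5 -1.0 0.0; -3.0 4.0 0.5];   % example predicted logits (LLRs)
y = [1    0   1  ;  0   1   0  ];   % example ground-truth bits

loss = bceFromLogits(z,y);

% Reference: explicit sigmoid followed by the textbook BCE formula
p = 1./(1 + exp(-z));
lossRef = -mean(reshape(y.*log(p) + (1 - y).*log(1 - p),[],1));
```

The max/abs form is algebraically equal to the textbook formula but avoids overflow in exp and log(0) for large-magnitude LLRs.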

Configure System Parameters

Set the simulation length by specifying the number of 10 ms frames (simParameters.NFrames) and the number of training samples (simParameters.NTrainSamples). The SNR is defined per resource element (RE) and per antenna element. For details on the SNR definition used in this example, see SNR Definition Used in Link Simulations.
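As a rough illustration of an SNR defined per RE and per antenna, the following sketch scales complex Gaussian noise so that the noise variance on each RE and antenna equals the average signal power divided by the linear SNR. It operates on a hypothetical frequency-domain grid; the example itself adds noise to the time-domain waveform and follows SNR Definition Used in Link Simulations, which may include normalizations not shown here.

```matlab
% Add AWGN to a frequency-domain grid at a target SNR per RE and per
% receive antenna (sketch; the example adds noise to the time-domain waveform)
F = 312; S = 14; Nrx = 2;
SNRdB = 10;                                              % target SNR (dB)
rxClean = (randn(F,S,Nrx) + 1i*randn(F,S,Nrx))/sqrt(2);  % hypothetical noiseless grid

Ps = mean(abs(rxClean(:)).^2);          % average signal power per RE and antenna
N0 = Ps/10^(SNRdB/10);                  % noise variance per RE and antenna
noise = sqrt(N0/2)*(randn(F,S,Nrx) + 1i*randn(F,S,Nrx));  % circularly symmetric AWGN
rxNoisy = rxClean + noise;

% Empirical check of the realized SNR
measuredSNRdB = 10*log10(Ps/mean(abs(noise(:)).^2));
```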

Specify the carrier, UL-SCH, and PUSCH configurations and the propagation channel model parameters.

Training the DeepRx network can take several hours because the training set spans a wide range of scenario parameters to improve generalization. By default, training is disabled and the example loads the pretrained network DeepRx_2M.mat (requires Deep Learning Toolbox™). This network was trained with the example’s default settings, except that simParameters.NTrainSamples was set to 2e6. To enable training, set simParameters.TrainNow to true.

You can generate the training and test data in parallel on multiple CPU workers. To enable parallel execution, set simParameters.UseParallel to true (requires Parallel Computing Toolbox™). If a supported GPU is available, DeepRx network training runs on the GPU (requires Parallel Computing Toolbox™).

% Configure UL PUSCH simulation parameters for training, validation, and
% testing
simParameters.TrainNow = false;          % If true, train the network; otherwise, use a pretrained network
simParameters.UseParallel = false;       % If true, enable parallel processing; otherwise, execute serially
simParameters.LearnRate = 0.001;         % Initial learning rate for training
simParameters.NTrainSamples = 30e3;      % Number of training samples
simParameters.NFrames = 1;               % Number of frames used in each training iteration

% Generic UL PUSCH simulation parameters (fixed during training)
simParameters.CarrierFrequency = 3.5e9;  % Carrier frequency (Hz)
simParameters.NSizeGrid = 26;            % Bandwidth in resource blocks (26 RBs at 15 kHz SCS)
simParameters.SubcarrierSpacing = 15;    % Subcarrier spacing (kHz)
simParameters.ModulationType = '16QAM';  % Supported modulation types: pi/2-BPSK, QPSK, 16QAM, 64QAM, 256QAM
simParameters.CodeRate = 658/1024;       % Code rate for transport block size calculation

The example randomizes training parameters within specified ranges to help DeepRx generalize across channel and simulation conditions, such as SNR, delay spread, maximum Doppler shift, and DM-RS settings.

% Randomized UL PUSCH parameters (selected within range; used only during
% training)
simParameters.SNRInLimits = [-4 32];                 % SNR limits for uniform distribution [min max] in dB
simParameters.DelaySpreadLimits = [10e-9 300e-9];    % RMS delay spread range [10 300] ns for uniform distribution
simParameters.MaximumDopplerShiftLimits = [0 500];   % Maximum Doppler shift range [0 500] Hz for uniform distribution
simParameters.DMRSAdditionalPositionLimits = [0 1];  % DMRS additional position limits (0 or 1)
simParameters.DMRSConfigurationTypeLimits = [1 2];   % DMRS configuration type limits (1 or 2)
simParameters.ChannelTrain = ["CDL-B","CDL-C","CDL-D","TDL-B","TDL-C","TDL-D"]; % Channel delay profiles for training
simParameters.ChannelValidate = ["CDL-A","CDL-E","TDL-A","TDL-E"];              % Channel delay profiles for validation

% Additional UL PUSCH simulation parameters
simParameters = hConfigureSimulationParameters(simParameters);

% AI/ML parameters
simParameters = configureAIMLParameters(simParameters);

% Display the simulation parameter summary
displayParameterSummary(simParameters);

________________________________________

Simulation Summary
________________________________________

- Input (X) size: [ 312 14 10 ]
- Output (L) size: [ 312 14 4 ]

- Number of subcarriers (F): 312
- Number of symbols (S): 14
- Number of receive antennas (Nrx): 2
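The randomization described above can be pictured as drawing one scenario per training sample from the specified limits. The sketch below assumes uniform draws for the continuous parameters and equally likely choices for the discrete ones; the struct names limits and scenario are hypothetical, and the actual sampling inside the example's training helper functions may differ.

```matlab
% Draw one randomized training scenario from the parameter limits
% (sketch; hypothetical struct names, sampling details are assumptions)
limits.SNRIn = [-4 32];                     % dB
limits.DelaySpread = [10e-9 300e-9];        % s
limits.MaximumDopplerShift = [0 500];       % Hz
limits.DMRSAdditionalPosition = [0 1];
limits.DMRSConfigurationType = [1 2];
channelTrain = {'CDL-B','CDL-C','CDL-D','TDL-B','TDL-C','TDL-D'};

uniformIn = @(lim) lim(1) + (lim(2) - lim(1))*rand;   % continuous uniform draw

scenario.SNRIn = uniformIn(limits.SNRIn);
scenario.DelaySpread = uniformIn(limits.DelaySpread);
scenario.MaximumDopplerShift = uniformIn(limits.MaximumDopplerShift);
scenario.DMRSAdditionalPosition = randi(limits.DMRSAdditionalPosition);  % integer 0 or 1
scenario.DMRSConfigurationType = randi(limits.DMRSConfigurationType);    % integer 1 or 2
scenario.ChannelModel = channelTrain{randi(numel(channelTrain))};        % one delay profile

disp(scenario)
```

Keeping the validation delay profiles (CDL-A, CDL-E, TDL-A, TDL-E) out of this draw is what lets validation measure generalization to unseen channels.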

Load or Create DeepRx Network

DeepRx is a ResNet-based deep convolutional neural network (DCNN) receiver. This section shows how to design and train a ResNet-based convolutional receiver to detect and demodulate OFDM waveforms.

The example behavior depends on simParameters.TrainNow:

true: The example is in training mode. You construct the network and train it from scratch.

false: The example is in simulation mode. You load the pretrained network (trained_DeepRx.mat if you trained one earlier, otherwise DeepRx_2M.mat) and evaluate detection performance on the generated test data.

DCNNs learn distinctive features from images. In this example, a DCNN operates on the received time-frequency resource grid using 2-D convolutions to detect transmitted bits. Very deep networks can suffer from degradation—accuracy saturates and training error increases as layers are added. DeepRx addresses degradation with residual blocks that add skip connections to improve gradient flow to earlier layers [6, 7]. To reduce computation, each residual block uses 2-D depthwise separable convolutions [8].
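To see why depthwise separable convolutions reduce computation, compare the number of weights in a standard k-by-k convolution with a depthwise k-by-k convolution followed by a 1-by-1 pointwise convolution. The channel counts below are illustrative and are not the DeepRx layer sizes; biases are ignored.

```matlab
% Learnable weights (biases ignored) of standard vs. depthwise separable
% convolution; sizes are illustrative, not taken from DeepRx
k = 3;          % filter size
Cin = 128;      % input channels
Cout = 128;     % output channels

standardParams  = k*k*Cin*Cout;   % one k-by-k-by-Cin filter per output channel
depthwiseParams = k*k*Cin;        % one k-by-k filter per input channel
pointwiseParams = Cin*Cout;       % 1-by-1 convolution that mixes channels
separableParams = depthwiseParams + pointwiseParams;

reduction = standardParams/separableParams;   % equals 1/(1/Cout + 1/k^2)
fprintf('Standard: %d, separable: %d, reduction: %.1fx\n', ...
    standardParams,separableParams,reduction);
```

With these values, the separable form needs 17536 weights instead of 147456, about 8.4 times fewer; the multiply-accumulate count per output position shrinks by the same factor.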

Construct the network input as follows:

Frequency-domain received resource grid (rxGrid): complex-valued array of size F-by-S-by-Nrx.

Transmit DM-RS grid (dmrsGrid): complex-valued array of size F-by-S-by-1 (one transmission layer).

Raw channel estimate grid (rawChanEstGrid): complex-valued array of size F-by-S-by-Nrx.

Concatenate rxGrid, dmrsGrid, and rawChanEstGrid along the third (channel) dimension to form a complex-valued array of size F-by-S-by-C, where C = 2Nrx + 1.

Convert this array to a real-valued array of size F-by-S-by-2C by stacking the real and imaginary parts along the channel dimension. With Nrx = 2, 2C = 10, which matches the input size [ 312 14 10 ] in the simulation summary.
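The input construction above can be sketched with base MATLAB array operations. The grids are placeholders: the DM-RS positions, the pilot values, the least-squares raw channel estimate (received value divided by pilot value on DM-RS REs, zero elsewhere), and the order in which real and imaginary parts are stacked are all assumptions for illustration.

```matlab
% Build a real-valued DeepRx-style input from complex grids (sketch)
F = 312; S = 14; Nrx = 2;                          % sizes from the simulation summary
rxGrid = complex(randn(F,S,Nrx),randn(F,S,Nrx));   % received grid (placeholder data)

% Transmit DM-RS grid with one layer; positions and values are hypothetical
dmrsGrid = complex(zeros(F,S));
dmrsSym = 3;                                       % hypothetical DM-RS OFDM symbol
dmrsSc = 1:2:F;                                    % hypothetical DM-RS subcarriers
dmrsGrid(dmrsSc,dmrsSym) = (1 + 1i)/sqrt(2);       % placeholder pilot values

% Raw channel estimate: least squares Y./X on DM-RS REs, zero elsewhere (assumed)
rawChanEstGrid = complex(zeros(F,S,Nrx));
rawChanEstGrid(dmrsSc,dmrsSym,:) = rxGrid(dmrsSc,dmrsSym,:)./dmrsGrid(dmrsSc,dmrsSym);

% Concatenate along the channel (third) dimension: F-by-S-by-(2*Nrx+1)
Xc = cat(3,rxGrid,dmrsGrid,rawChanEstGrid);

% Stack real and imaginary parts: F-by-S-by-(4*Nrx+2)
X = cat(3,real(Xc),imag(Xc));
disp(size(X))
```

For Nrx = 2, size(X) is [312 14 10], the same as the input size that configureAIMLParameters computes as [F S 4*Nrx+2].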

The example also prepares two label sequences: trBlk (bits before LDPC encoding, rate matching, and scrambling) and codedTrBlock (bits after those operations).

if simParameters.TrainNow
    % Create DeepRx architecture for training
    [net,netParam] = hCreateDeepRx(simParameters.InputSize,simParameters.NBits,InputNorm="none");
else
    % Load pretrained DeepRx network: prefer custom-trained, otherwise use
    % default
    if exist("trained_DeepRx.mat","file")
        data = load("trained_DeepRx.mat");   % Load custom-trained network
    elseif exist("DeepRx_2M.mat","file")
        data = load("DeepRx_2M.mat");        % Load pretrained network
    else
        error("No pretrained network (trained_DeepRx.mat or DeepRx_2M.mat) found in the working folder.");
    end
    net = data.net;
    netParam = data.netParam;
end

% Display the summary of the network
displayNetworkSummary(net,netParam);

________________________________________

Neural Network Summary
________________________________________

- Number of ResNet blocks: 11
- Total number of layers: 46
- Total number of learnables: 1.2325 million

Train DeepRx Network

This example treats receiver-side bit detection as a supervised learning problem. The transmitter generates the bits that serve as labels, so no manual labeling is required. The network input consists of frequency-domain resource grid features, and the labels are the transmitted bits. Generate a training mini-batch with hGeneratePUSCHTrainingBatch.

The network frames bit detection as binary classification per bit. Its output is a soft-bit (LLR) array of size F-by-S-by-NBits, where NBits (simParameters.NBits) is the number of bits per symbol (modulation order). Train the model with binary cross-entropy (BCE) and a sigmoid output by comparing the predicted bit LLRs with the ground-truth bit labels.

Distribute SNR points to all available parallel workers to shorten training time (see Accelerate Link-Level Simulations with Parallel Processing). Generate mini-batches on CPU workers in parallel and stream them to the GPU for DeepRx training (requires Parallel Computing Toolbox™). If no supported GPU device exists, the training runs on the CPU.

If simParameters.TrainNow is true, the example saves the trained network as trained_DeepRx.mat in the current folder. Otherwise, it loads the pretrained network from the file DeepRx_2M.mat.

if simParameters.TrainNow
    % Train DeepRx network with the specified simulation parameters
    net = hTrainDeepRx(simParameters,net);

    % Save trained network and parameters to current folder
    save("trained_DeepRx.mat","net","netParam");

    % Message indicating the trained network was saved successfully
    disp("Your trained network is saved in the current folder as trained_DeepRx.mat...");
end

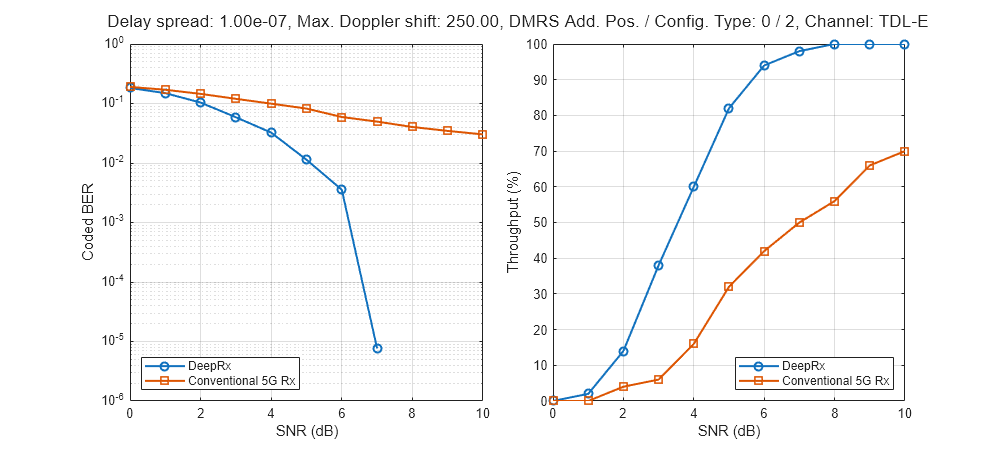

Compare the Performance of DeepRx and Conventional 5G Receivers

In this section, you compute the coded bit-error rate (BER) and throughput for DeepRx and for a conventional 5G receiver. The evaluation pipeline builds the network input from the received resource grid in the same way as during training, as described in the Load or Create DeepRx Network section.

Set the test-time modulation (simParameters.ModulationType) to an order no higher than the highest order used during training. The pretrained network DeepRx_2M.mat was trained with 16QAM, so you can evaluate QPSK or 16QAM.

Control channel estimation and timing synchronization in the conventional receiver with simParameters.PerfectChannelEstimator. Set this flag to true for perfect channel estimation and timing; set it to false for practical estimation and synchronization.

The trained DeepRx network predicts bit log-likelihood ratios (LLRs), and the simulation calculates and plots the coded BER and throughput versus SNR.

The end-to-end simulation function compareReceiverPerformance (see Local Functions) calls hEvaluatePUSCHAtSNR for each SNR point. It computes the coded BER by comparing the transmitted transport block with the decoded UL-SCH bits, and it computes the throughput from the number of successfully decoded transport blocks and their sizes.
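The following sketch shows one way to turn per-slot decoding results into the coded BER, BLER, and throughput values that the example plots. The data is made up, the variable names only mirror the fields of the results structures (CodedBER, BLER, SimThroughput, MaxThroughput, PercThroughput), and normalizing the coded BER by the total number of transport block bits is an assumption about the helper functions.

```matlab
% Coded BER, BLER, and throughput from per-slot results (hypothetical data)
tbs        = [1000 1000 1000 1000];   % transport block size per slot (bits)
blkCRCpass = [true false true true];  % true if the decoded block passed the CRC
bitErrors  = [0    37    0    0];     % bit errors between transmitted and decoded blocks

codedBER = sum(bitErrors)/sum(tbs);                  % errors per transmitted information bit
BLER = mean(~blkCRCpass);                            % fraction of failed blocks
simThroughput = sum(tbs(blkCRCpass));                % bits delivered without error
maxThroughput = sum(tbs);                            % bits if every block succeeded
percThroughput = 100*simThroughput/maxThroughput;    % throughput in percent
```

Here one of four blocks fails, so the throughput is 75%, while the coded BER (37/4000) reflects how badly that block was corrupted.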

To reduce the total evaluation time, distribute SNR points across available parallel workers by using a parfor loop (requires Parallel Computing Toolbox™). This example runs DeepRx inference on the GPU if one exists; otherwise, it runs on the CPU.

% End-to-end simulation parameters for testing DeepRx and conventional 5G receivers
simParameters.ChannelModel = "TDL-E";          % Channel models for testing: CDL-A, CDL-E, TDL-A, TDL-E
simParameters.DelaySpread = 100e-9;            % Delay spread range [10e-9, 300e-9] seconds
simParameters.MaximumDopplerShift = 250;       % Maximum Doppler shift range [0, 500] Hz
simParameters.SNRInVec = 0:10;                 % SNR range vector (dB) for evaluation
simParameters.ModulationType = "QPSK";         % Supported modulation types: QPSK or 16QAM
simParameters.DMRSAdditionalPosition = 0;      % DMRS additional position: 0 or 1
simParameters.DMRSConfigurationType = 2;       % DMRS configuration type: 1 or 2
simParameters.NFrames = 5;                     % Number of frames in simulation
simParameters.PerfectChannelEstimator = false; % Use perfect channel estimation when true

% Evaluate link performance with the specified simulation parameters and neural network
[resultsRX,resultsAI] = compareReceiverPerformance(simParameters,net);

________________________________________

Simulating DeepRx and 5G receivers
________________________________________

- Test data generation : Serial execution
- Inference environment: CPU

Simulating DeepRx and conventional receivers for 11 SNR points...

SNRIn: +10.00 dB | Channel: TDL-E | Delay spread: 1.00e-07 | Max Doppler shift: 250.00 | DMRS Add. Pos. / Config. Type: 0 / 2
SNRIn: +9.00 dB | Channel: TDL-E | Delay spread: 1.00e-07 | Max Doppler shift: 250.00 | DMRS Add. Pos. / Config. Type: 0 / 2
SNRIn: +8.00 dB | Channel: TDL-E | Delay spread: 1.00e-07 | Max Doppler shift: 250.00 | DMRS Add. Pos. / Config. Type: 0 / 2
SNRIn: +7.00 dB | Channel: TDL-E | Delay spread: 1.00e-07 | Max Doppler shift: 250.00 | DMRS Add. Pos. / Config. Type: 0 / 2
SNRIn: +6.00 dB | Channel: TDL-E | Delay spread: 1.00e-07 | Max Doppler shift: 250.00 | DMRS Add. Pos. / Config. Type: 0 / 2
SNRIn: +5.00 dB | Channel: TDL-E | Delay spread: 1.00e-07 | Max Doppler shift: 250.00 | DMRS Add. Pos. / Config. Type: 0 / 2
SNRIn: +4.00 dB | Channel: TDL-E | Delay spread: 1.00e-07 | Max Doppler shift: 250.00 | DMRS Add. Pos. / Config. Type: 0 / 2
SNRIn: +3.00 dB | Channel: TDL-E | Delay spread: 1.00e-07 | Max Doppler shift: 250.00 | DMRS Add. Pos. / Config. Type: 0 / 2
SNRIn: +2.00 dB | Channel: TDL-E | Delay spread: 1.00e-07 | Max Doppler shift: 250.00 | DMRS Add. Pos. / Config. Type: 0 / 2
SNRIn: +1.00 dB | Channel: TDL-E | Delay spread: 1.00e-07 | Max Doppler shift: 250.00 | DMRS Add. Pos. / Config. Type: 0 / 2
SNRIn: +0.00 dB | Channel: TDL-E | Delay spread: 1.00e-07 | Max Doppler shift: 250.00 | DMRS Add. Pos. / Config. Type: 0 / 2

Evaluate Detection Performance

Finally, compare DeepRx with a conventional receiver that uses the nrPUSCHDecode function. For statistically significant results, increase the number of frames (simParameters.NFrames) in the simulation.

% Plot coded BER and throughput (%) versus SNR (dB)

visualizePerformance(simParameters,resultsRX,resultsAI);

References

[1] M. Honkala, D. Korpi, and J. M. J. Huttunen, "DeepRx: Fully Convolutional Deep Learning Receiver," in IEEE Transactions on Wireless Communications, vol. 20, no. 6, pp. 3925–3940, June 2021.

[2] J. Hoydis, F. A. Aoudia, A. Valcarce, and H. Viswanathan, "Toward a 6G AI-Native Air Interface," in IEEE Communications Magazine, vol. 59, no. 5, pp. 76–81, May 2021.

[3] T. O’Shea and J. Hoydis, "An Introduction to Deep Learning for the Physical Layer," in IEEE Transactions on Cognitive Communications and Networking, vol. 3, no. 4, pp. 563–575, Dec. 2017.

[4] 3GPP TR 38.843, "Study on Artificial Intelligence (AI)/Machine Learning (ML) for NR Air Interface," 3rd Generation Partnership Project; Technical Specification Group Radio Access Network.

[5] 3GPP TSG RAN Meeting #103, Qualcomm, "Revised WID on Artificial Intelligence (AI)/Machine Learning (ML) for NR Air Interface," RP-240774, Maastricht, Netherlands, Mar. 18–21, 2024.

[6] K. He, X. Zhang, S. Ren, and J. Sun, "Deep Residual Learning for Image Recognition," in 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 2016, pp. 770–778.

[7] K. He, X. Zhang, S. Ren, and J. Sun, "Identity Mappings in Deep Residual Networks," in European Conference on Computer Vision (ECCV), Springer, 2016, pp. 630–645.

[8] F. Chollet, "Xception: Deep Learning with Depthwise Separable Convolutions," in 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 2017, pp. 1800–1807.

Local Functions

function visualizePerformance(simParameters,resultsRX,resultsAI)
% Plot coded BER and throughput vs. SNR for MMSE 5G and DeepRx receivers

% Create figure for plots
f = figure;
f.Position(3:4) = [900 400];
t = tiledlayout(1,2,TileSpacing="compact");

% Plot coded BER against SNR (dB)
nexttile;
semilogy([resultsAI.SNRIn],[resultsAI.CodedBER],'-o',LineWidth=1.5);
hold on
semilogy([resultsRX.SNRIn],[resultsRX.CodedBER],'-s',LineWidth=1.5,MarkerSize=7);
hold off
legend("DeepRx","Conventional 5G Rx",Location="southwest");
grid on;
xlabel("SNR (dB)");
ylabel("Coded BER");

% Plot throughput vs SNR (dB)
nexttile;
plot([resultsAI.SNRIn],[resultsAI.PercThroughput],'-o',LineWidth=1.5);
hold on
plot([resultsRX.SNRIn],[resultsRX.PercThroughput],'-s',LineWidth=1.5,MarkerSize=7);
hold off
legend("DeepRx","Conventional 5G Rx",Location="southeast");
grid on;
xlabel("SNR (dB)");
ylabel("Throughput (%)");

% Add title to tiled layout summarizing system configuration
titleText = [displayParameters(simParameters),' | Modulation: ',char(simParameters.ModulationType)];
titleText = split(titleText,'DMRS ');
titleText{2} = ['DMRS ',titleText{2}];
title(t,titleText);
end

function [resultsRX, resultsAI] = compareReceiverPerformance(simParameters,net)
% Evaluate PUSCH link over SNR sweep

simParameters.TrainNow = false;
displayResults = true;

% Preallocate output struct arrays (one element per SNR point)
resultsAI(1:length(simParameters.SNRInVec)) = ...
    struct( ...
    'SNRIn', -1, ...
    'BLER', -1, ...
    'UncodedBER', -1, ...
    'CodedBER', -1, ...
    'SimThroughput', -1, ...
    'MaxThroughput', -1, ...
    'PercThroughput', -1 ...
    );
resultsRX(1:length(simParameters.SNRInVec)) = ...
    struct( ...
    'SNRIn', -1, ...
    'BLER', -1, ...
    'UncodedBER', -1, ...
    'CodedBER', -1, ...
    'SimThroughput', -1, ...
    'MaxThroughput', -1, ...
    'PercThroughput', -1 ...
    );

% Simulation status
border = repmat('_',1,40);
fprintf('\n%s\n',border);
fprintf('\nSimulating DeepRx and 5G receivers\n');
fprintf('%s\n',border);
fprintf('\n');

% -----------------------------------------------------------------
% Evaluate AI/DeepRx and conventional receivers over all SNR points
% -----------------------------------------------------------------
if canUseParallelPool && simParameters.UseParallel
    pool = gcp;
    maxWorkers = numel(simParameters.SNRInVec); % simulate SNR points on each worker
    fprintf('- Test data generation : CPU acceleration on (%d) workers\n',pool.NumWorkers);
else
    maxWorkers = 0; % serial execution
    fprintf('- Test data generation : Serial execution\n');
end

% Determine the neural receiver inference environment
if canUseGPU
    device = "GPU";
else
    device = "CPU";
end
fprintf('- Inference environment: %s \n', device);

% -----------------------------------------------------------------
% Each worker evaluates one SNR point (if enabled), otherwise serial
% execution
% -----------------------------------------------------------------
fprintf('\nSimulating DeepRx and conventional receivers for %d SNR points...\n\n',...
    numel(simParameters.SNRInVec));
parfor (i = 1:numel(simParameters.SNRInVec),maxWorkers)
    % Temporary parameter definitions for parfor
    simLocal = simParameters;
    simLocal.SNRIn = simParameters.SNRInVec(i);

    % End-to-end PUSCH simulation for each SNR point
    resultsRX(i) = hEvaluatePUSCHAtSNR(simLocal,Iteration=simLocal.NTrainSamples+i+1);
    resultsAI(i) = hEvaluatePUSCHAtSNR(simLocal,Net=net,Iteration=simLocal.NTrainSamples+i+1);

    % Update progress status
    if displayResults
        fprintf(['SNRIn: %+6.2f dB | ',displayParameters(simLocal),'\n'],simLocal.SNRIn);
    end
end
end

function dispText = displayParameters(simParameters)
% Display random/selected simulation parameters
dispText = sprintf( ...
    ['Channel: %s | Delay spread: %-7.2e | Max Doppler shift: %-6.2f | ' ...
    'DMRS Add. Pos. / Config. Type: %d / %d'], ...
    simParameters.ChannelModel, ...
    simParameters.DelaySpread,simParameters.MaximumDopplerShift, ...
    simParameters.DMRSAdditionalPosition, simParameters.DMRSConfigurationType ...
    );
end

function displayNetworkSummary(net,details)
% Display network summary including layers and learnable parameters

% Count layers using the LeNet-5 convention:
% Convolution, pooling, and fully connected layers are counted.
% Activation layers are not counted.
numLayers = details.conv + details.conv_sep + details.fc;

% Print the network summary
border = repmat('_',1,40);
fprintf('\n%s\n',border);
fprintf('\nNeural Network Summary\n');
fprintf('%s\n\n',border);
fprintf('- %-27s %i\n','Number of ResNet blocks:',details.resblock);
fprintf('- %-27s %i\n','Total number of layers:',numLayers);

% Initialize the network if uninitialized
if ~net.Initialized
    net = init(net);
end

% Get learnable parameter count by analyzing the network
netInfo = analyzeNetwork(net,Plots="none");
numLearnables = sum(netInfo.LayerInfo.NumLearnables)/1e6; % millions
fprintf('- %-27s %.4f million\n','Total number of learnables:',numLearnables);
end

function displayParameterSummary(simParameters)
% Display the simulation parameter set
border = repmat('_',1,40);
fprintf('\n%s\n',border);
fprintf('\nSimulation Summary\n');
fprintf('%s\n\n',border);
fprintf('- %-33s [ %s ]\n', 'Input (X) size:',num2str(simParameters.InputSize));
fprintf('- %-33s [ %s ]\n\n', 'Output (L) size:',num2str(simParameters.OutputSize));
fprintf('- %-33s %-5d\n', 'Number of subcarriers (F):',simParameters.Carrier.NSizeGrid*12);
fprintf('- %-33s %-5d\n','Number of symbols (S):',simParameters.Carrier.SymbolsPerSlot);
fprintf('- %-33s %-5d\n','Number of receive antennas (Nrx):',simParameters.NRxAnts);
end

function simParameters = configureAIMLParameters(simParameters)
% Configure AI/ML parameters
[~, info, ~] = nrPUSCHIndices(simParameters.Carrier,simParameters.PUSCH);
simParameters.NBits = info.G/info.Gd; % Number of bits
simParameters.InputSize = [ ...
    simParameters.Carrier.NSizeGrid*12, ...
    simParameters.Carrier.SymbolsPerSlot, ...
    4*simParameters.NRxAnts+2 ...
    ]; % DeepRx network input size [F S 4*Nr+2]
simParameters.OutputSize = [ ...
    simParameters.Carrier.NSizeGrid*12, ...
    simParameters.Carrier.SymbolsPerSlot, ...
    simParameters.NBits ...
    ]; % DeepRx network output size
end