resubLoss

Resubstitution loss for regression tree model

Description

L = resubLoss(tree,Name=Value) specifies additional options using one or more name-value arguments. For example, you can specify the loss function, the pruning level, and the tree size that resubLoss uses to calculate the loss.

[L,SE,Nleaf,BestLevel] = resubLoss(___) also returns the standard error of the loss, the number of leaf nodes in the trees of the pruning sequence, and the best pruning level as defined in the TreeSize name-value argument. By default, BestLevel is the pruning level that gives the loss within one standard deviation of the minimal loss.
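The four-output syntax can be sketched as follows. This is a minimal illustration, assuming Statistics and Machine Learning Toolbox is installed and a release that accepts Name=Value syntax (R2021a or later); the variable name numLevels is introduced here for illustration only.

```matlab
% Requires Statistics and Machine Learning Toolbox
load carsmall
X = [Displacement Horsepower Weight];
tree = fitrtree(X,MPG);   % pruning information is computed by default

% Request all four outputs for every pruning level
[L,SE,Nleaf,BestLevel] = resubLoss(tree,Subtrees="all");

% Levels run from 0 to max(tree.PruneList), so there is one more
% level than the maximum value (illustrative helper variable)
numLevels = max(tree.PruneList) + 1;
```

L, SE, and Nleaf should each contain one element per pruning level, while BestLevel is a single level.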

Examples

Load the carsmall data set. Consider Displacement, Horsepower, and Weight as predictors of the response MPG.

load carsmall

X = [Displacement Horsepower Weight];

Grow a regression tree using all observations.

Mdl = fitrtree(X,MPG);

Compute the resubstitution MSE.

resubLoss(Mdl)

ans = 4.8952

Unpruned decision trees tend to overfit. One way to balance model complexity and out-of-sample performance is to prune a tree (or restrict its growth) so that in-sample and out-of-sample performance are satisfactory.

Load the carsmall data set. Consider Displacement, Horsepower, and Weight as predictors of the response MPG.

load carsmall

X = [Displacement Horsepower Weight];

Y = MPG;

Partition the data into training (50%) and validation (50%) sets.

n = size(X,1);
rng(1) % For reproducibility
idxTrn = false(n,1);
idxTrn(randsample(n,round(0.5*n))) = true; % Training set logical indices
idxVal = idxTrn == false; % Validation set logical indices

Grow a regression tree using the training set.

Mdl = fitrtree(X(idxTrn,:),Y(idxTrn));

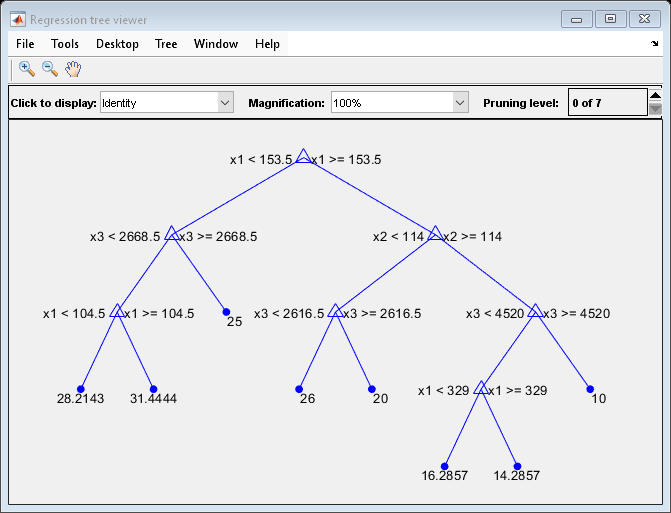

View the regression tree.

view(Mdl,Mode="graph");

The regression tree has seven pruning levels. Level 0 is the full, unpruned tree (as displayed). Level 7 is just the root node (i.e., no splits).

Examine the training sample MSE for each subtree (or pruning level) excluding the highest level.

m = max(Mdl.PruneList) - 1;
trnLoss = resubLoss(Mdl,Subtrees=0:m)

trnLoss = 7×1

5.9789

6.2768

6.8316

7.5209

8.3951

10.7452

14.8445

The MSE for the full, unpruned tree is about 6 units.

The MSE for the tree pruned to level 1 is about 6.3 units.

The MSE for the tree pruned to level 6 (i.e., a stump) is about 14.8 units.

Examine the validation sample MSE at each level excluding the highest level.

valLoss = loss(Mdl,X(idxVal,:),Y(idxVal),Subtrees=0:m)

valLoss = 7×1

32.1205

31.5035

32.0541

30.8183

26.3535

30.0137

38.4695

The MSE for the full, unpruned tree (level 0) is about 32.1 units.

The MSE for the tree pruned to level 4 is about 26.4 units.

The MSE for the tree pruned to level 5 is about 30.0 units.

The MSE for the tree pruned to level 6 (i.e., a stump) is about 38.5 units.
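The level with the smallest validation MSE can also be read off programmatically rather than by inspection. A minimal sketch, which copies the valLoss values printed above into a literal vector so that it runs on its own (in the example itself you would use the valLoss variable directly):

```matlab
% Validation MSE for pruning levels 0 through 6, as displayed above
valLoss = [32.1205 31.5035 32.0541 30.8183 26.3535 30.0137 38.4695]';

% Subtrees=0:m was used, so element 1 corresponds to level 0
[minLoss,idx] = min(valLoss);
bestLevel = idx - 1;
```

Here bestLevel evaluates to 4, which matches the level 4 entry of about 26.4 units in the list above.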

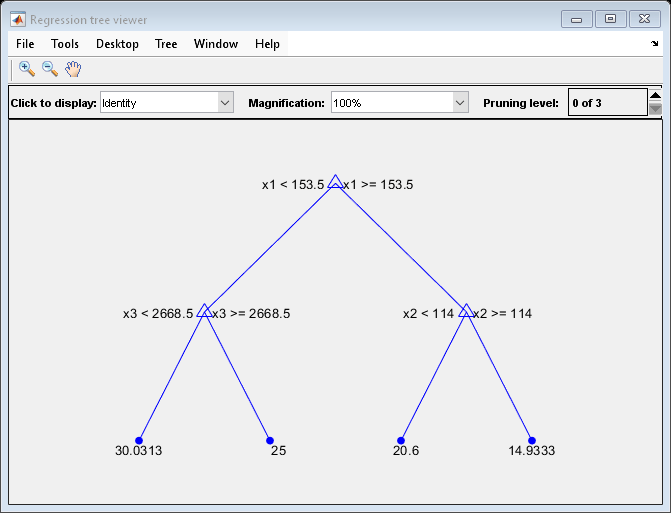

To balance model complexity and out-of-sample performance, consider pruning Mdl to level 4.

pruneMdl = prune(Mdl,Level=4);

view(pruneMdl,Mode="graph")

Input Arguments

Regression tree model, specified as a RegressionTree model object trained with fitrtree.

Name-Value Arguments

Specify optional pairs of arguments as

Name1=Value1,...,NameN=ValueN, where Name is

the argument name and Value is the corresponding value.

Name-value arguments must appear after other arguments, but the order of the

pairs does not matter.

Before R2021a, use commas to separate each name and value, and enclose

Name in quotes.

Example: L = resubLoss(tree,Subtrees="all") computes the loss for all subtrees in the pruning sequence.

Loss function, specified as "mse" (mean squared error) or as a

function handle. If you pass a function handle fun, resubLoss calls it as

fun(Y,Yfit,W)

where Y, Yfit, and W are

numeric vectors of the same length.

- Y is the observed response.
- Yfit is the predicted response.
- W is the observation weights.

The returned value of fun(Y,Yfit,W) must be a scalar.

Example: LossFun="mse"

Example: LossFun=@Lossfun

Data Types: char | string | function_handle
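As an illustration of passing a function handle, the sketch below uses a weighted mean absolute error. The handle name maeFun and the choice of loss are assumptions made for this example and are not part of the toolbox; the code assumes Statistics and Machine Learning Toolbox and R2021a or later.

```matlab
% Requires Statistics and Machine Learning Toolbox
load carsmall
X = [Displacement Horsepower Weight];
tree = fitrtree(X,MPG);

% Hypothetical custom loss: weighted mean absolute error.
% Dividing by sum(W) makes the result independent of weight scaling.
maeFun = @(Y,Yfit,W) sum(W.*abs(Y - Yfit))/sum(W);

% The handle receives three same-length vectors and returns a scalar
maeLoss = resubLoss(tree,LossFun=maeFun);
```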

Pruning level, specified as a vector of nonnegative integers in ascending order or

"all".

If you specify a vector, then all elements must be at least 0 and

at most max(tree.PruneList). 0 indicates the full,

unpruned tree, and max(tree.PruneList) indicates the completely

pruned tree (that is, just the root node).

If you specify "all", then resubLoss

operates on all subtrees, meaning the entire pruning sequence. This specification is

equivalent to using 0:max(tree.PruneList).

resubLoss prunes tree to each level

specified by Subtrees, and then estimates the corresponding output

arguments. The size of Subtrees determines the size of some output

arguments.

For the function to invoke Subtrees, the properties

PruneList and PruneAlpha of

tree must be nonempty. In other words, grow

tree by setting Prune="on" when you use

fitrtree, or by pruning tree using prune.

Example: Subtrees="all"

Data Types: single | double | char | string
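A short sketch of the vector form of Subtrees, assuming Statistics and Machine Learning Toolbox, R2021a or later, and a tree with at least two pruning levels above level 0 (the variable names maxLevel and levels are illustrative):

```matlab
% Requires Statistics and Machine Learning Toolbox
load carsmall
X = [Displacement Horsepower Weight];
tree = fitrtree(X,MPG);      % PruneList is populated by default

maxLevel = max(tree.PruneList);
levels = [0 1 maxLevel];     % ascending order; assumes maxLevel >= 2

% One loss value is returned per requested pruning level
L = resubLoss(tree,Subtrees=levels);
```

The first element of L is the loss of the full tree and the last is the loss of the root-only tree, so with the default "mse" loss the last value should not be smaller than the first.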

Tree size, specified as one of these values:

- "se": resubLoss returns the best pruning level (BestLevel), which corresponds to the highest pruning level with the loss within one standard deviation of the minimum (L+se, where L and se relate to the smallest value in Subtrees).
- "min": resubLoss returns the best pruning level, which corresponds to the element of Subtrees with the smallest loss. This element is usually the smallest element of Subtrees.

Example: TreeSize="min"

Data Types: char | string
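The two TreeSize settings can be compared side by side. A minimal sketch, assuming Statistics and Machine Learning Toolbox and R2021a or later; bestSE and bestMin are illustrative variable names:

```matlab
% Requires Statistics and Machine Learning Toolbox
load carsmall
X = [Displacement Horsepower Weight];
tree = fitrtree(X,MPG);

% Only the fourth output (BestLevel) depends on TreeSize
[~,~,~,bestSE]  = resubLoss(tree,Subtrees="all",TreeSize="se");
[~,~,~,bestMin] = resubLoss(tree,Subtrees="all",TreeSize="min");
```

Because "se" selects the highest level whose loss stays within the tolerance around the minimum, bestSE is expected to be at least as large as bestMin.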

Output Arguments

Standard error of loss, returned as a numeric vector of positive values that has the

same length as Subtrees.

Number of leaf nodes in the pruned subtrees, returned as a numeric vector of

nonnegative integers that has the same length as Subtrees. Leaf

nodes are terminal nodes, which give responses, not splits.

Best pruning level, returned as a numeric scalar whose value depends on

TreeSize:

- When TreeSize is "se", resubLoss returns the highest pruning level whose loss is within one standard deviation of the minimum (L+se, where L and se relate to the smallest value in Subtrees).
- When TreeSize is "min", resubLoss returns the element of Subtrees with the smallest loss, usually the smallest element of Subtrees.
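The selection rule described above can be sketched in plain MATLAB. This is one reading of the rule and not the toolbox's internal code; the loss and standard error values are hypothetical and chosen only for illustration.

```matlab
% Hypothetical inputs, chosen only to illustrate the rule
levels = 0:4;
L  = [5.0 5.2 5.5 7.0 12.0];   % loss per pruning level
SE = [0.6 0.6 0.7 0.9 1.5];    % standard error per pruning level

% TreeSize="min": the level with the smallest loss
[minL,idxMin] = min(L);
bestMin = levels(idxMin);

% TreeSize="se": the highest level whose loss is within one
% standard error of the minimum loss
threshold = minL + SE(idxMin);
bestSE = levels(find(L <= threshold,1,'last'));
```

With these values the threshold is 5.6, so bestMin is 0 and bestSE is 2.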

More About

The built-in loss function is "mse", meaning mean squared

error.
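For reference, a weighted mean squared error can be written as follows. This assumes the observation weights $w_j$ are normalized to sum to one; the notation is introduced here for illustration and is not taken from the text above.

$$
L = \sum_{j=1}^{n} w_j \left( y_j - \hat{y}_j \right)^2, \qquad \sum_{j=1}^{n} w_j = 1,
$$

where $y_j$ is the observed response and $\hat{y}_j$ is the predicted response for observation $j$.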

To write your own loss function, create a function file of the form

function loss = lossfun(Y,Yfit,W)

- N is the number of rows of tree.X.
- Y is an N-element vector representing the observed response.
- Yfit is an N-element vector representing the predicted responses.
- W is an N-element vector representing the observation weights.

The output loss should be a scalar.

Pass the function handle @lossfun as the value of the LossFun name-value argument.
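A function file of this form might look like the sketch below, saved as lossfun.m. The weighted root mean squared error is a hypothetical choice of loss made for illustration; any computation that returns a scalar fits the same template.

```matlab
function loss = lossfun(Y,Yfit,W)
% LOSSFUN Hypothetical custom loss: weighted root mean squared error.
%   Y, Yfit, and W are N-element vectors; the output is a scalar.
W = W(:)/sum(W);                            % normalize weights to sum to 1
loss = sqrt(sum(W.*(Y(:) - Yfit(:)).^2));   % weighted RMSE
end
```

The function can be checked by hand without a tree: lossfun([1;2;3],[1;2;5],[1;1;1]) gives sqrt(4/3), about 1.1547, because only the third residual is nonzero. You would then call resubLoss(tree,LossFun=@lossfun).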

Extended Capabilities

This function fully supports GPU arrays. For more information, see Run MATLAB Functions on a GPU (Parallel Computing Toolbox).

Version History

Introduced in R2011a
