fsrmrmr

Rank features for regression using minimum redundancy maximum relevance (MRMR) algorithm

Since R2022a

Syntax

Description

fsrmrmr ranks features (predictors) using the MRMR algorithm to

identify important predictors for regression problems.

To perform MRMR-based feature ranking for classification, see fscmrmr.

idx = fsrmrmr(Tbl,ResponseVarName) returns the predictor indices, idx, ordered by predictor importance (from most important to least important). The table Tbl contains the predictor variables and a response variable, ResponseVarName, which contains the response values. You can use idx to select important predictors for regression problems.

idx = fsrmrmr(Tbl,formula) specifies a response variable and the predictor variables to consider among the variables in Tbl by using formula. For example, fsrmrmr(cartable,"MPG ~ Acceleration + Displacement + Horsepower") ranks the Acceleration, Displacement, and Horsepower predictors in cartable using the response variable MPG in cartable.

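idx = fsrmrmr(___,Name=Value) specifies additional options using one or more name-value arguments in addition to any of the input argument combinations in the previous syntaxes. For example, you can specify observation weights by using Weights.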

Examples

Simulate 1000 observations from the model $Y = x_4 + 2x_7 + e$.

X is a 1000-by-10 matrix of standard normal elements, and $x_4$ and $x_7$ are its fourth and seventh columns.

e is a vector of random normal errors with mean 0 and standard deviation 0.3.

rng("default") % For reproducibility
X = randn(1000,10);
Y = X(:,4) + 2*X(:,7) + 0.3*randn(1000,1);

Rank the predictors based on importance.

idx = fsrmrmr(X,Y);

Select the top two most important predictors.

idx(1:2)

ans = 1×2

7 4

The function identifies the seventh and fourth columns of X as the most important predictors of Y.

Load the carbig data set, and create a table containing the different variables. Include the response variable MPG as the last variable in the table.

load carbig
cartable = table(Acceleration,Cylinders,Displacement, ...
    Horsepower,Model_Year,Weight,Origin,MPG);

Rank the predictors based on importance. Specify the response variable.

[idx,scores] = fsrmrmr(cartable,"MPG");

Note: If fsrmrmr uses a subset of variables in a table as predictors, then the function indexes the subset of predictors only. The returned indices do not count the variables that the function does not rank (including the response variable).

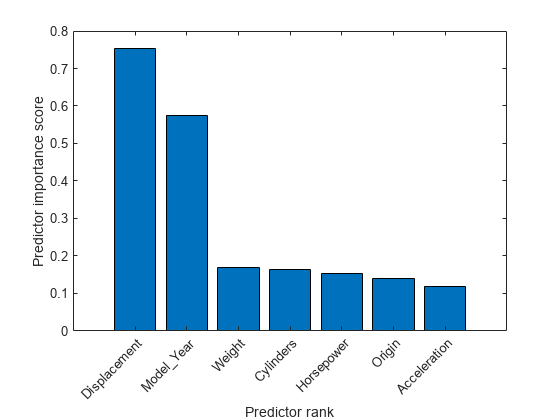

Create a bar plot of the predictor importance scores. Use the predictor names for the x-axis tick labels.

bar(scores(idx))
xlabel("Predictor rank")
ylabel("Predictor importance score")
predictorNames = cartable.Properties.VariableNames(1:end-1);
xticklabels(strrep(predictorNames(idx),"_","\_"))
xtickangle(45)

The drop in score between the second and third most important predictors is large, while the drops after the third predictor are relatively small. A drop in the importance score represents the confidence of feature selection. Therefore, the large drop implies that the software is confident of selecting the second most important predictor, given the selection of the most important predictor. The small drops indicate that the differences in predictor importance are not significant.

Select the top two most important predictors.

idx(1:2)

ans = 1×2

3 5

The third column of cartable is the most important predictor of MPG. The fifth column of cartable is the second most important predictor of MPG.

To improve the performance of a regression model, generate new features by using genrfeatures and then select the most important predictors by using fsrmrmr. Compare the test set performance of the model trained using only original features to the performance of the model trained using the most important generated features.

Read power outage data into the workspace as a table. Remove observations with missing values, and display the first few rows of the table.

outages = readtable("outages.csv");

Tbl = rmmissing(outages);

head(Tbl)

| Region | OutageTime | Loss | Customers | RestorationTime | Cause |
|---|---|---|---|---|---|
| {'SouthWest'} | 2002-02-01 12:18 | 458.98 | 1.8202e+06 | 2002-02-07 16:50 | {'winter storm'} |
| {'SouthEast'} | 2003-02-07 21:15 | 289.4 | 1.4294e+05 | 2003-02-17 08:14 | {'winter storm'} |
| {'West'} | 2004-04-06 05:44 | 434.81 | 3.4037e+05 | 2004-04-06 06:10 | {'equipment fault'} |
| {'MidWest'} | 2002-03-16 06:18 | 186.44 | 2.1275e+05 | 2002-03-18 23:23 | {'severe storm'} |
| {'West'} | 2003-06-18 02:49 | 0 | 0 | 2003-06-18 10:54 | {'attack'} |
| {'NorthEast'} | 2003-07-16 16:23 | 239.93 | 49434 | 2003-07-17 01:12 | {'fire'} |
| {'MidWest'} | 2004-09-27 11:09 | 286.72 | 66104 | 2004-09-27 16:37 | {'equipment fault'} |
| {'SouthEast'} | 2004-09-05 17:48 | 73.387 | 36073 | 2004-09-05 20:46 | {'equipment fault'} |

Some of the variables, such as OutageTime and RestorationTime, have data types that are not supported by regression model training functions like fitrensemble.

Partition the data set into a training set and a test set by using cvpartition. Use approximately 70% of the observations as training data and the other 30% as test data.

rng("default") % For reproducibility of the data partition
c = cvpartition(length(Tbl.Loss),"Holdout",0.30);
trainTbl = Tbl(training(c),:);
testTbl = Tbl(test(c),:);

Identify and remove outliers of Customers from the training data by using the isoutlier function.

[customersIdx,customersL,customersU] = isoutlier(trainTbl.Customers);
trainTbl(customersIdx,:) = [];

Remove the outliers of Customers from the test data by using the same lower and upper thresholds computed on the training data.

testTbl(testTbl.Customers < customersL | testTbl.Customers > customersU,:) = [];

Generate 35 features from the predictors in trainTbl that can be used to train a bagged ensemble. Specify the Loss variable as the response and MRMR as the feature selection method.

[Transformer,newTrainTbl] = genrfeatures(trainTbl,"Loss",35, ...
    TargetLearner="bag",FeatureSelectionMethod="mrmr");

The returned table newTrainTbl contains various engineered features. The first three columns of newTrainTbl are the original features in trainTbl that can be used to train a regression model using the fitrensemble function, and the last column of newTrainTbl is the response variable Loss.

originalIdx = 1:3;
head(newTrainTbl(:,[originalIdx end]))

| c(Region) | Customers | c(Cause) | Loss |
|---|---|---|---|
| SouthEast | 1.4294e+05 | winter storm | 289.4 |
| West | 3.4037e+05 | equipment fault | 434.81 |
| MidWest | 2.1275e+05 | severe storm | 186.44 |
| West | 0 | attack | 0 |
| MidWest | 66104 | equipment fault | 286.72 |
| SouthEast | 36073 | equipment fault | 73.387 |
| SouthEast | 1.0698e+05 | winter storm | 46.918 |
| NorthEast | 1.0444e+05 | winter storm | 255.45 |

Rank the predictors in newTrainTbl. Specify the response variable.

[idx,scores] = fsrmrmr(newTrainTbl,"Loss");

Note: If fsrmrmr uses a subset of variables in a table as predictors, then the function indexes the subset only. The returned indices do not count the variables that the function does not rank (including the response variable).

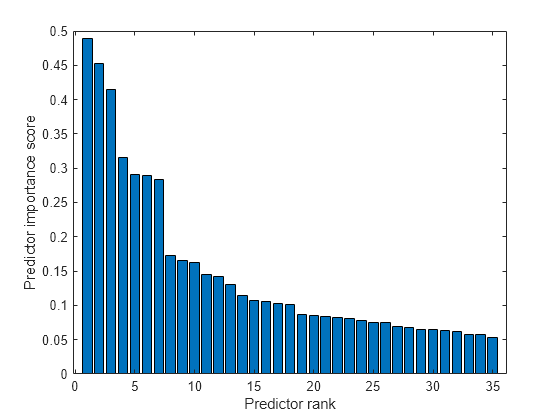

Create a bar plot of the predictor importance scores.

bar(scores(idx))
xlabel("Predictor rank")
ylabel("Predictor importance score")

Because there is a large gap between the scores of the seventh and eighth most important predictors, select the seven most important features to train a bagged ensemble model.

importantIdx = idx(1:7);
fsMdl = fitrensemble(newTrainTbl(:,importantIdx),newTrainTbl.Loss, ...
    Method="Bag");

For comparison, train another bagged ensemble model using the three original predictors that can be used for model training.

originalMdl = fitrensemble(newTrainTbl(:,originalIdx),newTrainTbl.Loss, ...
    Method="Bag");

Transform the test data set.

newTestTbl = transform(Transformer,testTbl);

Compute the test mean squared error (MSE) of the two regression models.

fsMSE = loss(fsMdl,newTestTbl(:,importantIdx), ...
    newTestTbl.Loss)

fsMSE = 1.0867e+06

originalMSE = loss(originalMdl,newTestTbl(:,originalIdx), ...
    newTestTbl.Loss)

originalMSE = 1.0961e+06

fsMSE is less than originalMSE, which suggests that the bagged ensemble trained on the most important generated features performs slightly better than the bagged ensemble trained on the original features.

Input Arguments

Sample data (Tbl), specified as a table. Multicolumn variables and cell arrays other than cell arrays of character vectors are not allowed.

Each row of Tbl corresponds to one observation, and each column corresponds to one predictor variable. Optionally, Tbl can contain additional columns for a response variable and observation weights. The response variable must be a numeric vector.

- If Tbl contains the response variable, and you want to use all remaining variables in Tbl as predictors, then specify the response variable by using ResponseVarName. If Tbl also contains the observation weights, then you can specify the weights by using Weights.

- If Tbl contains the response variable, and you want to use only a subset of the remaining variables in Tbl as predictors, then specify the subset of variables by using formula.

- If Tbl does not contain the response variable, then specify a response variable by using Y. The response variable and Tbl must have the same number of rows.

If fsrmrmr uses a subset of variables in Tbl as predictors, then the function indexes the predictors using only the subset. The values in the CategoricalPredictors name-value argument and the output argument idx do not count the predictors that the function does not rank.

If Tbl contains a response variable, then fsrmrmr considers NaN values in the response variable to be missing values. fsrmrmr does not use observations with missing values in the response variable.

Data Types: table

Response variable name (ResponseVarName), specified as a character vector or string scalar containing the name of a variable in Tbl.

For example, if a response variable is the column Y of

Tbl (Tbl.Y), then specify

ResponseVarName as "Y".

Data Types: char | string

Explanatory model of the response variable and a subset of the predictor variables (formula), specified as a character vector or string scalar in the form "Y ~ x1 + x2 + x3". In this form, Y represents the response variable, and x1, x2, and x3 represent the predictor variables.

To specify a subset of variables in Tbl as predictors, use a formula. If

you specify a formula, then fsrmrmr does not rank any variables

in Tbl that do not appear in formula.

The variable names in the formula must be both variable names in

Tbl (Tbl.Properties.VariableNames) and valid

MATLAB® identifiers. You can verify the variable names in Tbl

by using the isvarname function. If the variable

names are not valid, then you can convert them by using the matlab.lang.makeValidName function.
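As an illustration of the check described above, the following minimal sketch tests a few candidate names with isvarname and converts them with matlab.lang.makeValidName. The names in candidateNames are hypothetical and do not come from any data set in this page.

```matlab
% Hypothetical variable names; two of them are not valid MATLAB identifiers.
candidateNames = ["MPG" "Model Year" "2ndGear"];

% isvarname checks one name at a time, so apply it element by element.
isValid = arrayfun(@(n) isvarname(n),candidateNames);

% Convert every name to a valid identifier (valid names are left unchanged).
fixedNames = matlab.lang.makeValidName(candidateNames);

disp(isValid)
disp(fixedNames)
```

If you rename table variables this way, use the converted names in the formula as well.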

Data Types: char | string

Predictor data (X), specified as a numeric matrix. Each row of X corresponds to one observation, and each column corresponds to one predictor variable.

Data Types: single | double

Name-Value Arguments

Specify optional pairs of arguments as

Name1=Value1,...,NameN=ValueN, where Name is

the argument name and Value is the corresponding value.

Name-value arguments must appear after other arguments, but the order of the

pairs does not matter.

Example: fsrmrmr(Tbl,"y",CategoricalPredictors=[1 2 4],Weights="w")

specifies that the y column of Tbl is the response

variable, the w column of Tbl contains the observation

weights, and the first, second, and fourth columns of Tbl (with the

y and w columns removed) are categorical

predictors.

List of categorical predictors (CategoricalPredictors), specified as one of the values in this table.

| Value | Description |
|---|---|
| Vector of positive integers | Each entry in the vector is an index value indicating that the corresponding predictor is categorical. The index values are between 1 and p, where p is the number of predictors that fsrmrmr ranks. If fsrmrmr uses a subset of input variables as predictors, then the function indexes the predictors using only the subset. |
| Logical vector | A true entry means that the corresponding predictor is categorical. The length of the vector is p. |
| Character matrix | Each row of the matrix is the name of a predictor variable. The names must match the names in Tbl. Pad the names with extra blanks so each row of the character matrix has the same length. |
| String array or cell array of character vectors | Each element in the array is the name of a predictor variable. The names must match the names in Tbl. |
| "all" | All predictors are categorical. |

By default, if the predictor data is a table

(Tbl), fsrmrmr assumes that a variable is

categorical if it is a logical vector, unordered categorical vector, character array, string

array, or cell array of character vectors. If the predictor data is a matrix

(X), fsrmrmr assumes that all predictors are

continuous. To identify any other predictors as categorical predictors, specify them by using

the CategoricalPredictors name-value argument.
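The default rule for tables can be sketched as a type check on each variable. This is only an illustration of the rule as stated above, not the code that fsrmrmr runs; the table exampleTbl and its variable names are hypothetical.

```matlab
% Hypothetical table with one variable of each relevant type.
exampleTbl = table([1;2;3], ...
    categorical(["a";"b";"a"]), ...
    categorical(["lo";"hi";"lo"],["lo" "hi"],Ordinal=true), ...
    ["x";"y";"z"], ...
    [true;false;true], ...
    VariableNames=["Num" "Cat" "Ord" "Str" "Flag"]);

% A variable counts as categorical by default if it is logical, an unordered
% categorical, a character array, a string array, or a cell array of
% character vectors. Numeric and ordinal variables count as continuous.
isDefaultCategorical = varfun(@(v) islogical(v) ...
    || (iscategorical(v) && ~isordinal(v)) ...
    || ischar(v) || isstring(v) || iscellstr(v), ...
    exampleTbl,OutputFormat="uniform");

disp(isDefaultCategorical)
```

To treat a numeric variable such as Num as categorical, you would list it explicitly in CategoricalPredictors.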

Example: "CategoricalPredictors","all"

Example: CategoricalPredictors=[1 5 6 8]

Data Types: single | double | logical | char | string | cell

Indicator for whether to use missing values in predictors (UseMissing), specified as either

true to use the values for ranking, or false

to discard the values.

fsrmrmr considers NaN,

'' (empty character vector), "" (empty

string), <missing>, and <undefined>

values to be missing values.

If you specify UseMissing as true, then

fsrmrmr uses missing values for ranking. For a categorical

variable, fsrmrmr treats missing values as an extra category.

For a continuous variable, fsrmrmr places

NaN values in a separate bin for binning.

If you specify UseMissing as false, then

fsrmrmr does not use missing values for ranking. Because

fsrmrmr computes mutual information for each pair of

variables, the function does not discard an entire row when values in the row are

partially missing. fsrmrmr uses all pair values that do not

include missing values.
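The difference between pairwise handling and discarding whole rows can be seen by counting usable observations. The sketch below uses small hypothetical vectors and only counts rows; it does not reproduce the mutual information computation in fsrmrmr.

```matlab
% Hypothetical data: two predictors with one missing value each.
x1 = [0.5; NaN; 1.2; 0.7; 2.1];
x2 = [3; 4; NaN; 5; 6];
y  = [1; 2; 3; 4; 5];

% Pairwise handling: each pair keeps every row where both values are present.
rowsX1Y  = ~isnan(x1) & ~isnan(y);
rowsX2Y  = ~isnan(x2) & ~isnan(y);
rowsX1X2 = ~isnan(x1) & ~isnan(x2);

% Discarding entire rows would keep only rows with no missing value at all.
rowsComplete = all(~isnan([x1 x2 y]),2);

numPairwise = [nnz(rowsX1Y) nnz(rowsX2Y) nnz(rowsX1X2)];
numComplete = nnz(rowsComplete);
```

Here the pairs (x1,y) and (x2,y) each keep four rows, whereas removing incomplete rows up front would leave three rows for every pair.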

Example: "UseMissing",true

Example: UseMissing=true

Data Types: logical

Verbosity level (Verbose), specified as a nonnegative integer. The value of

Verbose controls the amount of diagnostic information that the

software displays in the Command Window.

- 0: fsrmrmr does not display any diagnostic information.

- 1: fsrmrmr displays the elapsed times for computing mutual information and ranking predictors.

- ≥ 2: fsrmrmr displays the elapsed times and more messages related to computing mutual information. The amount of information increases as you increase the Verbose value.

Example: Verbose=1

Data Types: single | double

Observation weights (Weights), specified as a vector of scalar values or the name of a

variable in Tbl. The function weights the observations in each row

of X or Tbl with the corresponding value in

Weights. The size of Weights must equal the

number of rows in X or Tbl.

If you specify the input data as a table Tbl, then

Weights can be the name of a variable in Tbl

that contains a numeric vector. In this case, you must specify

Weights as a character vector or string scalar. For example, if

the weight vector is the column W of Tbl

(Tbl.W), then specify Weights="W".

fsrmrmr normalizes the weights to add up to one. Inf weights are not supported.

Data Types: single | double | char | string

Output Arguments

Indices of predictors in X or Tbl (idx), ordered by

predictor importance, returned as a 1-by-r numeric vector, where

r is the number of ranked predictors.

If Tbl contains the response variable, then the function indexes

the predictors excluding the response variable. For example, suppose

Tbl includes 10 columns and you specify the second column of

Tbl as the response variable. If idx(3) is

5, then the third most important predictor is the sixth column of

Tbl.

If fsrmrmr uses a subset of variables in Tbl as

predictors, then the function indexes the predictors using only the subset. For example,

suppose Tbl includes 10 columns and you specify the last five

columns of Tbl as the predictor variables by using

formula. If idx(3) is 5,

then the third most important predictor is the 10th column in Tbl,

which is the fifth predictor in the subset.
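The two index-mapping examples above can be checked with a short sketch. The variable names are placeholders for a hypothetical 10-column table, and the value 5 stands in for idx(3); no call to fsrmrmr is made.

```matlab
% Placeholder names for a hypothetical table with 10 variables.
varNames = "Var" + (1:10);
thirdRanked = 5;                       % assumed value of idx(3)

% Case 1: the second variable is the response, so the predictors are the
% remaining nine variables in their original order.
predictorNames = varNames([1 3:10]);
colResponseExcluded = find(varNames == predictorNames(thirdRanked));

% Case 2: a formula selects only the last five variables as predictors.
subsetNames = varNames(6:10);
colFormulaSubset = find(varNames == subsetNames(thirdRanked));
```

In practice, indexing the list of predictor names with idx (for example, predictorNames(idx)) gives the ranked names directly and avoids manual column arithmetic.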

Predictor scores (scores), returned as a 1-by-r numeric vector, where r is the number of ranked predictors.

A large score value indicates that the corresponding predictor is important. Also, a drop in the feature importance score represents the confidence of feature selection. For example, if the software is confident of selecting a feature x, then the score value of the next most important feature is much smaller than the score value of x.

For example, suppose Tbl includes 10 columns and you specify the last five columns of Tbl as the predictor variables by using formula. Then, scores(3) contains the score value of the 8th column in Tbl, which is the third predictor in the subset.

More About

The mutual information between two variables measures how much uncertainty of one variable can be reduced by knowing the other variable.

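The mutual information I of the discrete random variables X and Z is defined as

$$I(X,Z) = \sum_{i,j} P(X = x_i, Z = z_j) \log \frac{P(X = x_i, Z = z_j)}{P(X = x_i)\,P(Z = z_j)}.$$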

If X and Z are independent, then I equals 0. If X and Z are the same random variable, then I equals the entropy of X.
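The two limiting cases can be checked numerically with a plug-in estimate that replaces the probabilities with observed frequencies. This is a minimal sketch for discrete column vectors using the natural logarithm; the helper names jointProb and mutualInfo are hypothetical, and the code is not the estimator that fsrmrmr uses internally.

```matlab
% Joint frequency table of two discrete column vectors.
jointProb = @(x,z) accumarray([findgroups(x) findgroups(z)],1) / numel(x);

% Plug-in mutual information from a joint probability table P.
% max(P,realmin) keeps the logarithm finite where P is 0, so empty cells
% contribute exactly 0 to the sum.
mutualInfo = @(P) sum(P .* log(max(P,realmin) ./ (sum(P,2)*sum(P,1))),"all");

x = [1;1;2;2];
zIndependent = [1;2;1;2];              % every (x,z) combination equally often

Iindependent = mutualInfo(jointProb(x,zIndependent));
Isame = mutualInfo(jointProb(x,x));    % same variable: entropy of x
entropyX = log(2);                     % x takes two values with probability 1/2
```

For these inputs, Iindependent is 0 and Isame matches entropyX, in line with the two statements above.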

The fsrmrmr function uses this definition to compute the mutual

information values for both categorical (discrete) and continuous variables. For each

continuous variable, including the response, fsrmrmr discretizes the

variable into 256 bins or the number of unique values in the variable if it is less than

256. The function finds optimal bivariate bins for each pair of variables using the adaptive

algorithm [2].
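The bin-count rule can be written as a one-line sketch. The vectors below are hypothetical, and the sketch covers only the number of bins, not the adaptive bivariate binning of [2].

```matlab
rng("default")                         % For reproducibility
fewValues = [0.1; 0.1; 0.4; 0.9; 0.9; 0.9];   % only three unique values
manyValues = randn(1000,1);            % far more than 256 unique values

maxBins = 256;
numBinsFew = min(maxBins,numel(unique(fewValues)));
numBinsMany = min(maxBins,numel(unique(manyValues)));
```

The first variable gets one bin per unique value, and the second is capped at 256 bins.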

Algorithms

The MRMR algorithm [1] finds an optimal set of features that is mutually and maximally dissimilar and can represent the response variable effectively. The algorithm minimizes the redundancy of a feature set and maximizes the relevance of a feature set to the response variable. The algorithm quantifies the redundancy and relevance using the mutual information of variables—pairwise mutual information of features and mutual information of a feature and the response. You can use this algorithm for regression problems.

The goal of the MRMR algorithm is to find an optimal set S of features that maximizes $V_S$, the relevance of S with respect to a response variable y, and minimizes $W_S$, the redundancy of S, where $V_S$ and $W_S$ are defined with mutual information I:

$$V_S = \frac{1}{|S|}\sum_{x \in S} I(x,y),$$

$$W_S = \frac{1}{|S|^2}\sum_{x,z \in S} I(x,z).$$

|S| is the number of features in S.
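As a worked illustration, the relevance and redundancy of a candidate set can be computed from a table of mutual information values. All numbers below are hypothetical and chosen only for illustration; the diagonal of pairMI holds assumed entropies, because the mutual information of a variable with itself equals its entropy.

```matlab
% Hypothetical mutual information values for four features.
relevance = [0.50 0.40 0.45 0.05];     % I(x_k,y)
pairMI = [0.69 0.30 0.02 0.01          % I(x_j,x_k), symmetric
          0.30 0.69 0.05 0.01
          0.02 0.05 0.69 0.01
          0.01 0.01 0.01 0.69];

S = [1 3];                             % candidate feature set

% Relevance of S: average mutual information with the response.
VS = mean(relevance(S));

% Redundancy of S: average over all ordered pairs in S, including x = z.
WS = sum(pairMI(S,S),"all") / numel(S)^2;
```

With these values, VS is 0.475 and WS is 0.355. A set made of features 1 and 2 would have a lower relevance and a higher redundancy, so it is a worse choice under both criteria.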

Finding an optimal set S requires considering all $2^{|\Omega|}$ combinations, where Ω is the entire feature set. Instead, the MRMR algorithm ranks features through the forward addition scheme, which requires O(|Ω|·|S|) computations, by using the mutual information quotient (MIQ) value

$$\mathrm{MIQ}_x = \frac{V_x}{W_x},$$

where $V_x$ and $W_x$ are the relevance and redundancy of a feature, respectively:

$$V_x = I(x,y),$$

$$W_x = \frac{1}{|S|}\sum_{z \in S} I(x,z).$$

The fsrmrmr function ranks all features in Ω and

returns idx (the indices of features ordered by feature importance)

using the MRMR algorithm. Therefore, the computation cost becomes $O(|\Omega|^2)$. The function quantifies the importance of a feature using a heuristic

algorithm and returns a score (scores). A large score value indicates

that the corresponding predictor is important. Also, a drop in the feature importance score

represents the confidence of feature selection. For example, if the software is confident of

selecting a feature x, then the score value of the next most important

feature is much smaller than the score value of x. You can use the

outputs to find an optimal set S for a given number of features.

fsrmrmr ranks features as follows:

1. Select the feature with the largest relevance, $\max_{x \in \Omega} V_x$. Add the selected feature to an empty set S.

2. Find the features with nonzero relevance and zero redundancy in the complement of S, $S^c$.

   - If $S^c$ does not include a feature with nonzero relevance and zero redundancy, go to step 4.

   - Otherwise, select the feature with the largest relevance, $\max_{x \in S^c,\, W_x = 0} V_x$. Add the selected feature to the set S.

3. Repeat Step 2 until the redundancy is not zero for all features in $S^c$.

4. Select the feature that has the largest MIQ value with nonzero relevance and nonzero redundancy in $S^c$, and add the selected feature to the set S.

5. Repeat Step 4 until the relevance is zero for all features in $S^c$.

6. Add the features with zero relevance to S in random order.

The software can skip any step if it cannot find a feature that satisfies the conditions described in the step.
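The forward addition scheme can be sketched in a few lines for the common case in which every feature has nonzero relevance and nonzero redundancy, so that only steps 1, 4, and 5 apply. This is a simplified illustration with hypothetical mutual information values, not the fsrmrmr implementation; it omits the zero-redundancy and zero-relevance branches and does not compute the scores output.

```matlab
% Hypothetical mutual information values for four features (all nonzero).
relevance = [0.50 0.40 0.45 0.05];     % V_x = I(x,y)
pairMI = [0.69 0.30 0.02 0.01          % I(x,z); only off-diagonal entries are used
          0.30 0.69 0.05 0.01
          0.02 0.05 0.69 0.01
          0.01 0.01 0.01 0.69];

numFeatures = numel(relevance);
ranked = zeros(1,numFeatures);
remaining = 1:numFeatures;

% Step 1: start with the feature that has the largest relevance.
[~,first] = max(relevance);
ranked(1) = first;
remaining(remaining == first) = [];

% Steps 4 and 5: repeatedly add the remaining feature with the largest MIQ.
for r = 2:numFeatures
    selected = ranked(1:r-1);
    % W_x: mean mutual information between each candidate and the selected set.
    redundancy = mean(pairMI(remaining,selected),2)';
    miq = relevance(remaining) ./ redundancy;
    [~,best] = max(miq);
    ranked(r) = remaining(best);
    remaining(best) = [];
end

disp(ranked)
```

With these values, the ranking is 1, 3, 4, 2. Feature 2 has high relevance but is ranked last because it is strongly redundant with feature 1, which is the trade-off that the MIQ criterion encodes.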

References

[1] Ding, C., and H. Peng. "Minimum redundancy feature selection from microarray gene expression data." Journal of Bioinformatics and Computational Biology. Vol. 3, Number 2, 2005, pp. 185–205.

[2] Darbellay, G. A., and I. Vajda. "Estimation of the information by an adaptive partitioning of the observation space." IEEE Transactions on Information Theory. Vol. 45, Number 4, 1999, pp. 1315–1321.

Version History

Introduced in R2022a
