Verify Local Robustness of ACAS Xu Neural Networks

This example shows how to use formal verification methods to prove local robustness of the ACAS Xu neural networks by using the AI Verification Library for Deep Learning Toolbox™. This example is step 2 in a series of examples that take you through formally verifying a set of neural networks that output steering advisories to aircraft to prevent them from colliding with other aircraft.

To run this example, open Verify and Deploy Airborne Collision Avoidance System (ACAS) Xu Neural Networks and navigate to Step2VerifyLocalRobustnessOfACASXuNeuralNetworksExample.m. This project contains all of the steps for this workflow. You can run the scripts in order or run each one independently.

By default, the example uses a GPU if one is available. Using a GPU requires a Parallel Computing Toolbox™ license and a supported GPU device. For information on supported devices, see GPU Computing Requirements (Parallel Computing Toolbox). Otherwise, the example uses the CPU.

Verification of Neural Network Robustness

Neural networks can be susceptible to adversarial examples [1]: input data that yields unexpected results despite being similar to or indistinguishable from input data that yields expected results.

You can generate adversarial examples by applying perturbations to examples from the training data such that the model output is incorrect. These perturbations can be small enough to be imperceptible to a human and this behavior can have obvious safety ramifications, both intentionally through a targeted attack and unintentionally through random chance. In MATLAB®, generate adversarial examples using the findAdversarialExamples function.

Finding adversarial examples is a stochastic process. The inability to find an adversarial example does not mean that none exist.

However, it is possible to formally prove the absence of adversarial examples, or robustness, of a neural network. The family of formal method algorithms for neural network verification implemented in AI Verification Library for Deep Learning Toolbox is CROWN [2], which is equivalent to DeepPoly [3]. CROWN and DeepPoly use a mix of interval arithmetic and backpropagation of polyhedral constraints to estimate bounds of the outputs of a network for a given region of input. The computations are different for different layers of a neural network. For some layers, the algorithm finds exact bounds. For other layers, the algorithm finds only upper limits to the bounds. If those upper limits are within the desired maximum output, then the network is robust.

To learn more about formal verification, watch this video: An Introduction to Formal Verification Methods for Neural Networks.

The estimateNetworkOutputBounds function computes the lower and upper bound of the output values that a network returns when the input is between specified input lower and upper bounds.

The verifyNetworkRobustness function checks whether the network classifies all outputs for a given input range as the same class. The function has three possible outputs:

verified— The network is robust to adversarial inputs between the specified bounds.violated— The network is not robust to adversarial inputs between the specified bounds.unproven— The function is unable to prove whether the network is robust to adversarial inputs between the specified bounds.

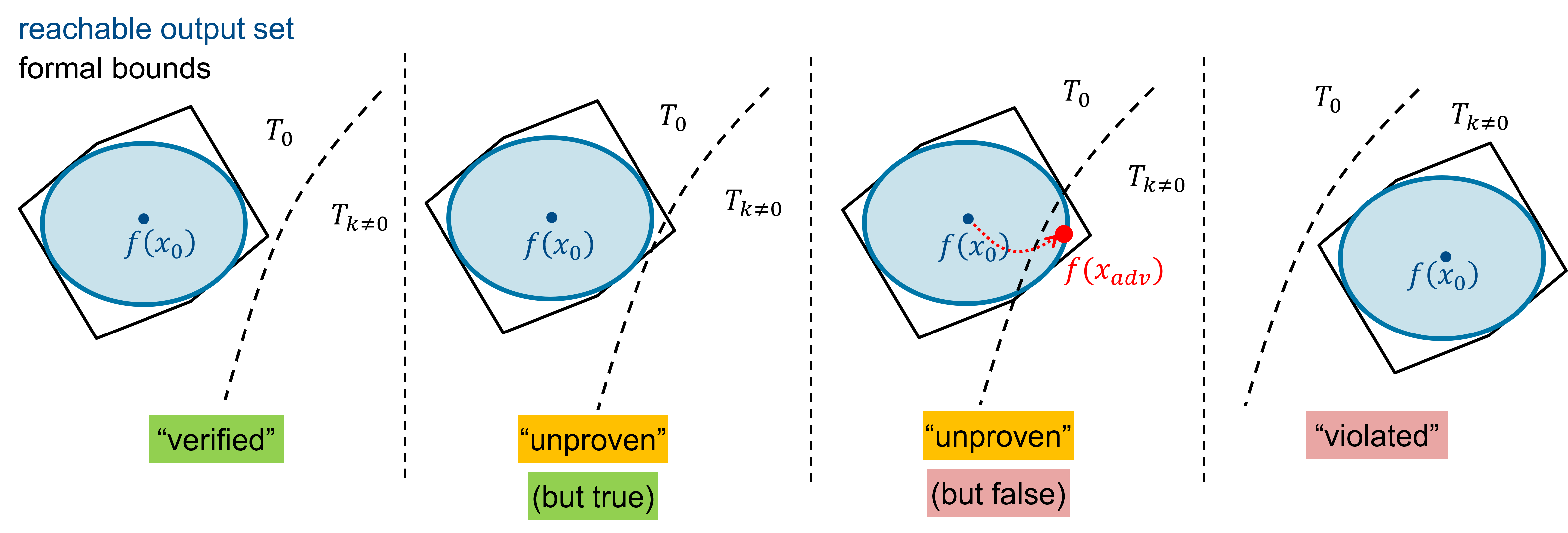

In this figure, the shaded blue represents the true, reachable output set of the network on the input region. The black polyhedral boundary represents the bounds on the reachable output set, returned by the formal verification algorithm. These bounds are not tight, due to overapproximations in the formal verification. The decision boundary separating class with some other class is shown by the dashed curved line. The figure shows these possible results:

"verified" — The output bounds returned by the formal verification algorithm lie strictly within the decision boundary classified as . Therefore, there are no adversarial examples within the input bounds. The

verifyNetworkRobustnessfunction returnsverified."unproven" (but true) — The true reachable output set lies strictly within the decision boundary, but the overapproximation of the output bounds returned by the formal verification algorithm intersects the decision boundary. The

verifyNetworkRobustnessfunction returnsunproven. For more information, seeunproven: Refine Robustness Verification."unproven" (but false) — The true reachable output set intersects the decision boundary, therefore not all inputs within the input range result in the same output class. The

verifyNetworkRobustnessfunction returnsunproven. To learn how to prove that the network is not robust in theunprovencase, seeunproven: Prove Lack of Robustness."violated" — The true reachable output set lies strictly within an incorrect output class. The

verifyNetworkRobustnessfunction returnsviolated. This can happen if, for example, you use incorrect ground truth class labels.

Load ACAS Xu Neural Networks

Load and save the networks. The example prepares the networks as shown in Explore ACAS Xu Neural Networks.

acasXuNetworkFolder = fullfile(matlab.project.rootProject().RootFolder,"."); zipFile = matlab.internal.examples.downloadSupportFile("nnet","data/acas-xu-neural-network-dataset.zip"); filepath = fileparts(zipFile); unzip(zipFile,acasXuNetworkFolder); matFolder = fullfile(matlab.project.rootProject().RootFolder,"acas-xu-neural-network-dataset","networks-mat"); helpers.convertACASXuFromONNXAndSave(matFolder);

Importing ONNX files and converting to MAT format... ......... .......... .......... .......... ...... Import and conversion complete.

verified: Prove Network Robustness

Consider the Left Abeam collision configuration explored in Explore ACAS Xu Neural Networks. Both aircraft are located on the same vertical plane and the previous advisory was Clear-of-Conflict. The orientations and velocities of the two aircraft mean that they will collide after 100 s if neither aircraft changes course.

At around a separation of between 10,000 and 20,000 ft, the corresponding ACAS Xu network advises Weak Right. At = 15,000 ft, a small of amount of uncertainty in the velocities of the two aircraft should not change the advisory. In this scenario, the network should be robust to small perturbations in the velocity.

Load the network corresponding to no vertical separation and previous advisory Clear-of-Conflict.

timeUntilLossOfVerticalSeparation = 1; previousAdvisory = 1; net = helpers.loadACASNetwork(previousAdvisory,timeUntilLossOfVerticalSeparation);

Specify the Left Abeam configuration. Set the distance to = 15,000 ft.

X = [15000 pi/2 -0.4296 954.6 1050];

Add uncertainty of around 3% to the velocities.

dX = 0.03; xLower = X .* [1 1 1 1-dX 1-dX]; xUpper = X .* [1 1 1 1+dX 1+dX];

Convert xLower and xUpper into formatted dlarray objects.

xLower = dlarray(xLower,"BC"); xUpper = dlarray(xUpper,"BC");

Prove that the network advises Weak Right for every possible set of inputs between xLower and xUpper by using the verifyNetworkRobustness function.

label = 3; % Weak Right

verifyNetworkRobustness(net,xLower,xUpper,label)ans = categorical

verified

unproven: Refine Robustness Verification

When you increase the uncertainty, the output bounds calculated by the software can intersect the decision boundary. The network can still be robust in the entire space spanned by xLower and xUpper, but the formal verification cannot prove it.

Increase the uncertainty of the velocities to around 8%.

dX = 0.08; xLower = dlarray(X .* [1 1 1 1-dX 1-dX],"BC"); xUpper = dlarray(X .* [1 1 1 1+dX 1+dX],"BC");

Verify the network robustness.

[results,detailedResults] = verifyNetworkRobustness(net,xLower,xUpper,label); disp(results)

unproven

The function returns unproven. To understand this result in more detail, analyze the second output argument of the verifyNetworkRobustness function.

detailedResults = array2table(detailedResults,VariableNames=helpers.classNames)

detailedResults=1×5 table

unproven verified <undefined> verified unproven

For the classes Weak Left and Strong Left, the function returns verified. This means that the network does not predict either of these classes anywhere within the input bounds. This makes sense, since the expected outcome is for the advisory to tell the aircraft to turn right.

For the class Weak Right, the function returns <undefined>, because Weak Right is the expected label.

For the classes Strong Right and Clear-of-Conflict, the function returns unproven. This suggests that increasing the range of velocities moves the overapproximating region calculated by the formal verification algorithm through the decision boundary between Strong Right and Weak Right, and Clear-of-Conflict and Weak Right.

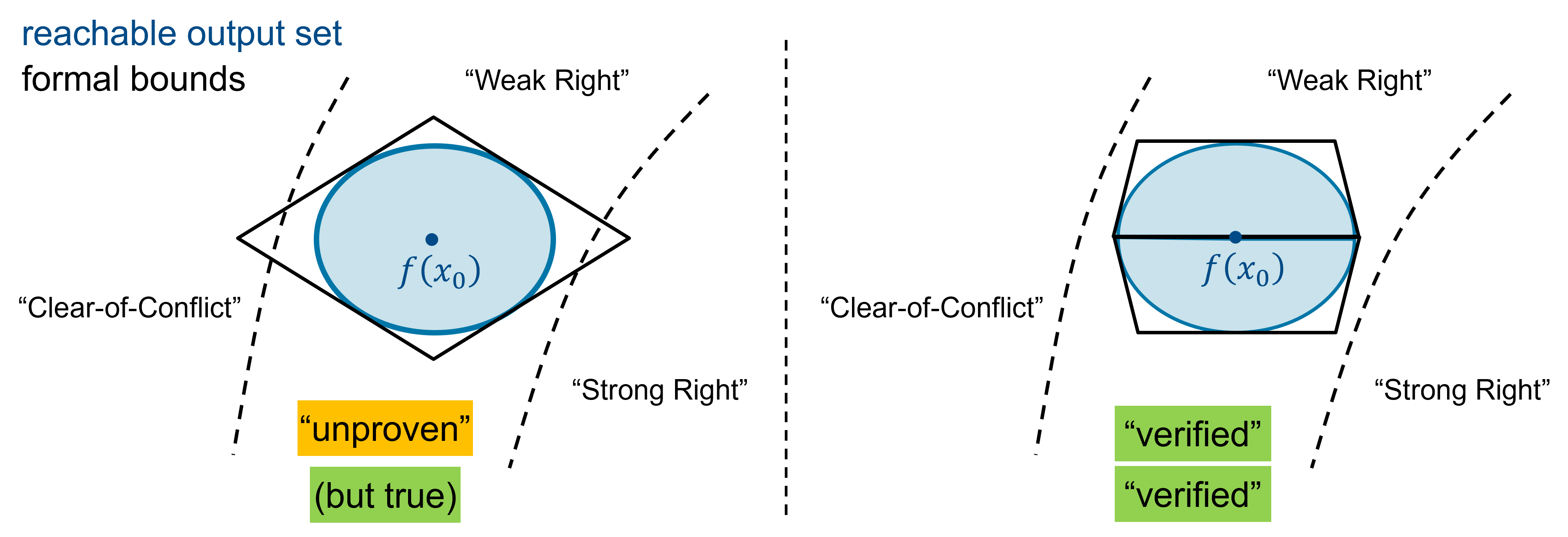

Refine Verification by Partitioning Input Region

To reduce the amount of overapproximation of the output bounds, split the input region spanned by XLower and XUpper into several smaller input regions, then attempt to verify the network robustness on each of the subregions.

The figure shows how bisecting the input region can tighten the output bounds.

Bisect the region along the range of [960 1140] into [960 1050] and [1050 1140].

xLower = repmat(xLower,1,2); xUpper = repmat(xUpper,1,2); xLower(5,2) = 1050; xUpper(5,1) = 1050; label = [3 3];

Verify the robustness of these two subregions.

verifyNetworkRobustness(net,xLower,xUpper,label)

ans = 2×1 categorical array

"verified"

"verified"

The function returns verified for both subregions, which means that the network predicts Weak Right on the entire region.

To learn how to use region bisection to prove robustness on the entire operative design domain, see Verify Global Stability of ACAS Xu Neural Networks and Verify Global Stability of ACAS Xu Neural Network Using Adaptive Mesh.

Refine Verification using -CROWN Algorithm

Another way to tighten the bounds returned by the formal verification algorithm and reduce unproven verification results is to use the -CROWN algorithm [4]. This algorithm fine-tunes the polyhedral constraints that introduce overapproximations on the functions within the network. For example, the algorithm reduces overapproximations coming from some nonlinear or piecewise linear activation functions, such as relu.

The -CROWN algorithm can produce tighter output bounds than the default CROWN algorithm that the verifyNetworkRobustness function uses, but takes longer to run.

Verify the network robustness. To use the -CROWN algorithm, set the Algorithm name-value argument of the verifyNetworkRobustness function to "alpha-crown".

dX = 0.08; xLower = dlarray(X .* [1 1 1 1-dX 1-dX],"BC"); xUpper = dlarray(X .* [1 1 1 1+dX 1+dX],"BC"); label = 3; verifyNetworkRobustness(net,xLower,xUpper,label,Algorithm="alpha-crown")

ans = categorical

verified

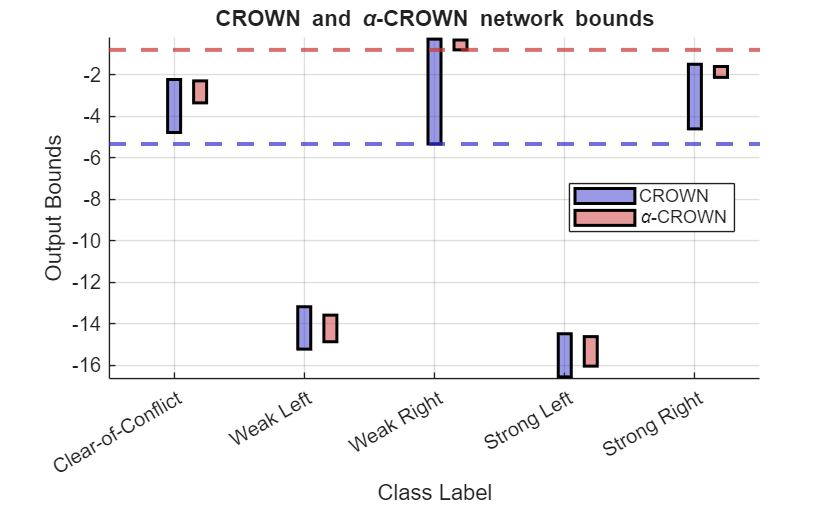

To compare the impact of using either the default CROWN or the -CROWN algorithm on the tightness of the bounds, estimate the network output bounds by using the estimateNetworkOutputBounds function.

[YLowerCROWN,YUpperCROWN] = estimateNetworkOutputBounds(net,xLower,xUpper);

[YLowerAlphaCROWN,YUpperAlphaCROWN] = estimateNetworkOutputBounds(net,xLower,xUpper,Algorithm="alpha-crown");Compare the output bounds for each of the five classes by using the displayBoundComparison function, which is defined at the bottom of this example.

displayBoundComparison(YLowerCROWN,YUpperCROWN,YLowerAlphaCROWN,YUpperAlphaCROWN)

The figure shows that the output ranges associated with the five different classes are all much smaller for the -CROWN algorithm compared to the CROWN algorithm.

For the CROWN algorithm, the bounds of the true Weak Right output overlap with the bounds of the Clear-of-Conflict and Strong Right outputs. This means that the algorithm can not determine that the network only predicts Weak Right. Therefore, the output of the verifyNetworkRobustness function is unproven.

For the -CROWN algorithm, there is no overlap between the output bounds of the true Weak Right output and any of the other classes. This proves that the network only predicts Weak Right for inputs within the input bounds XLower and XUpper. Therefore, the output of the verifyNetworkRobustness function is verified.

unproven: Prove Lack of Robustness

When you increase the uncertainty, the true network output bounds can intersect the decision boundary. The network is no longer robust across the entire input region.

Increase the uncertainty of the velocities to around 25%.

dX = 0.25; xLower = dlarray(X .* [1 1 1 1-dX 1-dX],"BC"); xUpper = dlarray(X .* [1 1 1 1+dX 1+dX],"BC"); label = 3;

Ensure that every input inside the input bounds is valid by clipping the upper bounds of the ownship and intruder velocities to the maximum allowed velocity of 1200 ft/s.

xUpper(4:5) = clip(xUpper(4:5),0,1200);

Verify the network robustness using the -CROWN algorithm. Return detailed results.

[result,detailedResult] = verifyNetworkRobustness(net,xLower,xUpper,label,Algorithm="alpha-crown");

array2table(detailedResult,VariableNames=helpers.classNames)ans=1×5 table

unproven unproven <undefined> unproven unproven

The function returns unproven for all classes.

To prove that the network is not robust within this large input range, find an example input for which the network does not advise Weak Right by using the findAdversarialExamples function. The findAdversarialExamples finds adversarial examples starting from a random guess. Run the function repeatedly, up to 100 times or until it finds an adversarial example.

for ii = 1:100 [xAdversarial,mislabel,iX] = findAdversarialExamples(net,xLower,xUpper,label); if ~isempty(xAdversarial) disp("Adversial example found after " + ii + " attempts.") disp(mat2str(extractdata(xAdversarial)',4)) advisory = helpers.classNames(mislabel) return end end

Adversial example found after 8 attempts.

[1.5e+04 1.571 -0.4296 1169 801.7]

advisory = "Clear-of-Conflict"

To confirm that the returned example is adversarial, calculate the network advisory for the adversarial example input by using the predictAndUpdateState function.

As an example, consider the adversarial example xAdversarial = [15000 1.571 -0.4296 1064 765.4].

xAdversarial = [15000 1.571 -0.4296 1064 765.4]; yAdversarial = predict(net,xAdversarial); scores2label(yAdversarial,helpers.classNames)

ans = categorical

Clear-of-Conflict

In this adversarial example, compared to the Left Abeam scenario, the velocity of the ownship is faster than in the original, whereas the intruder velocity is much slower than the in original.

vOwnDiff = X(4) - xAdversarial(4)

vOwnDiff = -109.4000

vIntDiff = X(5) - xAdversarial(5)

vIntDiff = 284.6000

The network predicts Clear-of-Conflict, which makes sense, because the slow-moving intruder means that the ownship does not have to do anything to avoid collision.

violated: Prove Lack of Robustness

If the true reachable output set lies strictly within an incorrect output class, then the network is not robust. For example, this can happen if you use incorrect ground truth class labels.

In the Left Abeam scenario, at 15,000 ft of horizontal separation, the network advises Weak Right within at least 3% of uncertainty in the velocities. If you attempt to prove that the network advises Strong Right in the entire input range, then true reachable output lies strictly within a class other than Strong Right and the verifyNetworkRobustness function returns violated.

Load the network.

timeUntilLossOfVerticalSeparation = 1; previousAdvisory = 1; net = helpers.loadACASNetwork(timeUntilLossOfVerticalSeparation,previousAdvisory);

Specify the Left Abeam configuration. Set the distance to = 15,000 ft.

X = [15000 pi/2 -0.4296 954.6 1050];

Add uncertainty on the velocities of around 3%.

dX = 0.03; xLower = dlarray(X .* [1 1 1 1-dX 1-dX],"BC"); xUpper = dlarray(X .* [1 1 1 1+dX 1+dX],"BC");

Verify the network robustness, but specify an incorrect class label.

label = 5; % Strong Right

verifyNetworkRobustness(net,xLower,xUpper,label)ans = categorical

violated

Supporting Functions

The displayBoundComparison function plots the output bounds returned by the estimateNetworkOutputBounds function using the two different available algorithms, CROWN and -CROWN.

function displayBoundComparison(ylCrown,yuCrown,ylAlphaCrown,yuAlphaCrown) yl1 = extractdata(ylCrown); yu1 = extractdata(yuCrown); yl2 = extractdata(ylAlphaCrown); yu2 = extractdata(yuAlphaCrown); x = 1:5; bar_width = 0.1; offset = 0.2; figure hold on h1 = patch([-10 -9.7 -9.7 -10],[-10 -10 -9 -9],[0.2 0.2 0.8], ... EdgeColor="k",FaceAlpha=0.5,LineWidth=1.5); h2 = patch([-10 -9.7 -9.7 -10], [-12 -12 -11 -11], [0.8 0.2 0.2], ... EdgeColor="k",FaceAlpha=0.5,LineWidth=1.5); for i = 1:5 rectangle(Position=[x(i)-bar_width/2,yl1(i),bar_width,yu1(i)-yl1(i)], ... FaceColor=[0.2 0.2 0.8 0.5],FaceAlpha=0.5,EdgeColor="k",LineWidth=1.5) rectangle(Position=[x(i)+offset-bar_width/2,yl2(i),bar_width,yu2(i)-yl2(i)], ... FaceColor=[0.8 0.2 0.2 0.5],FaceAlpha=0.5,EdgeColor="k",LineWidth=1.5) end yline(yl1(3),'--',Color=[0.2 0.2 0.8],LineWidth=2) yline(yl2(3),'--',Color=[0.8 0.2 0.2],LineWidth=2) grid on xlim([0.5 5.5]) ylim([min([yl1;yl2])-0.1,max([yu1;yu2])+0.1]) xlabel("Class Label") ylabel("Output Bounds") title("CROWN and \alpha-CROWN network bounds",Interpreter="tex") xticks(x) xticklabels(helpers.classNames) legend([h1 h2],["CROWN","\alpha-CROWN"],Location="best") end

References

[1] Goodfellow, Ian J., Jonathon Shlens, and Christian Szegedy. “Explaining and Harnessing Adversarial Examples.” Preprint, submitted March 20, 2015. https://doi.org/10.48550/arXiv.1412.6572.

[2] Zhang, Huan, et al. “Efficient Neural Network Robustness Certification with General Activation Functions.” arXiv:1811.00866, arXiv, 2 Nov. 2018. arXiv.org, https://doi.org/10.48550/arXiv.1811.00866.

[3] Singh, Gagandeep, Timon Gehr, Markus Püschel, and Martin Vechev. “An Abstract Domain for Certifying Neural Networks”. Proceedings of the ACM on Programming Languages 3, no. POPL (January 2, 2019): 1–30. https://doi.org/10.1145/3290354.

[4] Xu, Kaidi, et al. “Fast and Complete: Enabling Complete Neural Network Verification with Rapid and Massively Parallel Incomplete Verifiers.” arXiv:2011.13824, arXiv, 16 Mar. 2021. arXiv.org, https://doi.org/10.48550/arXiv.2011.13824.

See Also

verifyNetworkRobustness | estimateNetworkOutputBounds | findAdversarialExamples