Deep Learning for Signal Processing

Deep learning for signal processing is increasingly being incorporated into applications such as voice assistants, digital health, and wireless communications.

In this video, you will learn how to use techniques such as time-frequency transformations and wavelet scattering networks in conjunction with convolutional neural networks and recurrent neural networks to build predictive models on signals.

You will also see how MATLAB® can help you with the four steps typically involved in building such applications:

Accessing and managing signal data from a variety of hardware devices

Performing deep learning on signals through time-frequency representations or deep networks

Training deep networks on single or multiple NVIDIA® GPUs on local machines or cloud-based systems

Generating optimized CUDA® code for your signal preprocessing algorithms and deep networks

Published: 27 Sep 2019

Deep learning continues to gain popularity in signal processing with applications like voice assistants, digital health, radar and wireless communications. With MATLAB, you can easily develop deep learning models and build real-world smart signal processing systems. Let’s take a closer look at the four steps involved.

The first step in building a deep learning model is to access and manage your data. Using MATLAB, you can acquire signals from a variety of hardware devices.

You can also generate synthetic signal data via simulation or use data augmentation techniques if you don’t have enough data to begin with.
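To make the idea of data augmentation concrete, here is a minimal sketch in Python with NumPy. It is not MATLAB code and is not taken from the video. The function name `augment`, the target signal-to-noise ratio, and the shift range are all illustrative assumptions. Each call produces a randomly time-shifted, noisier copy of a signal.

```python
import numpy as np

def augment(x, rng, snr_db=20.0, max_shift=50):
    """Return a randomly shifted copy of x with added white Gaussian noise.

    Hypothetical helper for illustration only; snr_db and max_shift are
    assumed values, not recommendations from the source.
    """
    # Circular time shift by a random number of samples (wraps around the ends).
    shift = int(rng.integers(-max_shift, max_shift + 1))
    shifted = np.roll(x, shift)
    # Scale the noise so the result has roughly the requested SNR.
    signal_power = np.mean(shifted ** 2)
    noise_power = signal_power / (10 ** (snr_db / 10))
    noise = rng.normal(0.0, np.sqrt(noise_power), size=x.shape)
    return shifted + noise

rng = np.random.default_rng(0)
x = np.sin(2 * np.pi * 5 * np.arange(1000) / 1000)  # 5 cycles of a sine wave
augmented = [augment(x, rng) for _ in range(4)]      # four new training examples
```

Whether shifting and added noise are appropriate depends on the task: they help only when the label is unaffected by those changes.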

MATLAB simplifies accessing and working with signal data that is too large to fit in memory, as well as with large collections of signals.

Once the data is collected, the next task is to interpret and label it. You can quickly visualize and analyze your signals using the Signal Analyzer app as a starting point.

You can label signals with attributes, regions, and points of interest, and use domain-specific tools to label audio signals to prepare your data for training.

Moving on to the next step.

There are two approaches for performing deep learning on signals.

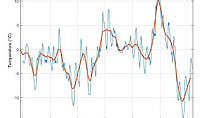

The first approach involves converting signals into time-frequency representations and training custom convolutional neural networks to extract patterns directly from those representations. A time-frequency representation describes how spectral components in signals evolve as a function of time.

This approach can reveal patterns that may not be visible in the original signal.

A variety of techniques can generate time-frequency representations from signals and save them as images, including spectrograms, continuous wavelet transforms (scalograms), and constant-Q transforms.
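As a rough illustration of the first of these techniques, the sketch below computes a spectrogram with a short-time Fourier transform in Python with NumPy. It is not the MATLAB workflow from the video. The function name, frame length, hop size, and test signal are assumptions chosen for the example. The signal is a 50 Hz tone that switches to 200 Hz halfway through, so the change shows up along the time axis of the result.

```python
import numpy as np

def spectrogram(x, fs, frame_len=128, hop=64):
    """Return (freqs, times, power) from a short-time Fourier transform of x.

    Illustrative helper; frame_len and hop are assumed values.
    """
    window = np.hanning(frame_len)
    n_frames = 1 + (len(x) - frame_len) // hop
    # Slice the signal into overlapping frames and apply the window to each.
    frames = np.stack([x[i * hop : i * hop + frame_len] * window
                       for i in range(n_frames)])
    # One-sided FFT of every frame; the squared magnitude is the power.
    power = np.abs(np.fft.rfft(frames, axis=1)) ** 2
    freqs = np.fft.rfftfreq(frame_len, d=1.0 / fs)
    times = (np.arange(n_frames) * hop + frame_len / 2) / fs  # frame centers
    return freqs, times, power.T  # power has shape (n_freqs, n_frames)

fs = 1000                              # sample rate in Hz (assumed)
t = np.arange(2000) / fs               # two seconds of samples
x = np.where(t < 1.0,
             np.sin(2 * np.pi * 50 * t),    # first second: 50 Hz
             np.sin(2 * np.pi * 200 * t))   # second second: 200 Hz

freqs, times, power = spectrogram(x, fs)
dominant = freqs[np.argmax(power, axis=0)]  # strongest frequency in each frame
```

The `power` array is the two-dimensional "image" that a convolutional network would take as input. `dominant` sits near 50 Hz for early frames and near 200 Hz for late ones, limited by the frequency resolution of `fs / frame_len`.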

The second approach involves feeding signals directly into deep networks such as LSTM networks. To make the deep network learn the patterns more quickly, you may need to reduce the signal dimensionality and variability. To do this, you have two options in MATLAB:

You can manually identify and extract features from signals, or

You can automatically extract features using invariant scattering convolutional networks, which provide low-variance representations without losing critical information.
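The first option, manual feature extraction, can be sketched as follows in Python with NumPy. This is an illustration under stated assumptions, not MATLAB code and not the method shown in the video. The three features (RMS level, zero-crossing rate, spectral centroid), the function name, and the frame sizes are all choices made for the example. The point is that a long signal becomes a much shorter sequence of feature vectors, which a sequence model such as an LSTM could then consume.

```python
import numpy as np

def frame_features(x, fs, frame_len=256, hop=128):
    """Return an (n_frames, 3) array of [rms, zero_crossing_rate, centroid_hz].

    Hypothetical helper; the feature set and frame sizes are assumptions.
    """
    window = np.hanning(frame_len)
    freqs = np.fft.rfftfreq(frame_len, d=1.0 / fs)
    n_frames = 1 + (len(x) - frame_len) // hop
    feats = np.empty((n_frames, 3))
    for i in range(n_frames):
        frame = x[i * hop : i * hop + frame_len]
        # Root-mean-square level of the frame.
        rms = np.sqrt(np.mean(frame ** 2))
        # Fraction of adjacent sample pairs whose sign differs.
        zcr = np.mean(np.abs(np.diff(np.sign(frame))) > 0)
        # Magnitude-weighted mean frequency of the windowed frame.
        mag = np.abs(np.fft.rfft(frame * window))
        centroid = np.sum(freqs * mag) / (np.sum(mag) + 1e-12)
        feats[i] = (rms, zcr, centroid)
    return feats

fs = 1000                                  # sample rate in Hz (assumed)
t = np.arange(4000) / fs
x = np.sin(2 * np.pi * 100 * t)            # 100 Hz test tone
feats = frame_features(x, fs)              # 4000 samples -> 30 frames x 3 features
```

Here 4000 raw samples are reduced to 90 numbers. Which features are worth keeping is a domain decision, and that is the manual effort the scattering-network option is meant to avoid.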

Once you select the right approach for your signals, the next step is to train the deep networks, which can be computationally intensive and take anywhere from hours to days. To help speed this up, MATLAB supports training on single or multiple NVIDIA GPUs on local machines or cloud-based systems. You can also visualize the training process to get a sense of the progress long before it finishes.

Finally, you can automatically generate optimized CUDA code for your signal preprocessing algorithms and deep networks to perform inference on embedded GPUs.

To learn more about our deep learning capabilities, check out mathworks.com. We have a large collection of examples to help get you started with using deep learning for signals.