plot

Description

plot(sliceResults) creates a bar graph of the accuracy (for classification models) or mean squared error (for regression models) for the data slices in sliceResults. For most metrics, plot displays bars for the data slices and their complements. The complement of a data slice consists of all observations that are not in the data slice.
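As a rough illustration of the slice and complement comparison, the following sketch computes the accuracy for one slice and for its complement using logical indexing. The variables petalLength and isCorrect hold made-up values for illustration and are not taken from the example data.

```matlab
% Hypothetical data: six observations and whether each prediction was correct.
petalLength = [3.2; 4.1; 4.5; 5.0; 5.8; 6.3];
isCorrect   = logical([1; 1; 1; 0; 1; 1]);

% Slice: observations with petal length in [4.95, 5.6).
inSlice = petalLength >= 4.95 & petalLength < 5.6;

% The complement is every observation that is not in the slice.
sliceAccuracy      = mean(isCorrect(inSlice));
complementAccuracy = mean(isCorrect(~inSlice));
```

Each observation belongs to either the slice or its complement, so the two groups together always cover the full data set.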

plot(sliceResults,Metric=metric) specifies the slice metric to display in the bar graph.

b = plot(___) returns Bar objects using any of the input argument combinations in previous syntaxes. Use b to query or modify Bar Properties after displaying the bar graph.

Examples

Train a binary classifier on numeric data. Use sliceMetrics to slice the training data according to one of the predictors. Evaluate the accuracy of the model predictions on the data slices.

Load the sample file fisheriris.csv, which contains iris data including sepal length, sepal width, petal length, petal width, and species type. Read the file into a table, and then convert the Species variable into a categorical variable. Display the first eight observations in the table.

fisheriris = readtable("fisheriris.csv");

fisheriris.Species = categorical(fisheriris.Species);

head(fisheriris)

| SepalLength | SepalWidth | PetalLength | PetalWidth | Species |
|---|---|---|---|---|
| 5.1 | 3.5 | 1.4 | 0.2 | setosa |
| 4.9 | 3 | 1.4 | 0.2 | setosa |
| 4.7 | 3.2 | 1.3 | 0.2 | setosa |
| 4.6 | 3.1 | 1.5 | 0.2 | setosa |
| 5 | 3.6 | 1.4 | 0.2 | setosa |
| 5.4 | 3.9 | 1.7 | 0.4 | setosa |
| 4.6 | 3.4 | 1.4 | 0.3 | setosa |
| 5 | 3.4 | 1.5 | 0.2 | setosa |

Separate the data for two of the iris species: versicolor and virginica.

versicolorData = fisheriris(fisheriris.Species=="versicolor",:);

virginicaData = fisheriris(fisheriris.Species=="virginica",:);

trainingData = [versicolorData;virginicaData];

Train a binary tree classifier on the versicolor and virginica data.

Mdl = fitctree(trainingData,"Species")

Mdl =

ClassificationTree

PredictorNames: {'SepalLength' 'SepalWidth' 'PetalLength' 'PetalWidth'}

ResponseName: 'Species'

CategoricalPredictors: []

ClassNames: [versicolor virginica]

ScoreTransform: 'none'

NumObservations: 100

Properties, Methods

Mdl is a ClassificationTree model object trained on 100 observations.

Compute metrics on the training data slices determined by petal length. Because PetalLength is a numeric predictor, sliceMetrics creates data slices by binning the petal length values of observations in Mdl.X. Data slices always partition the data.

sliceResults = sliceMetrics(Mdl,"PetalLength")

sliceResults =

sliceMetrics evaluated on PetalLength slices:

| PetalLength | NumObservations | Accuracy | OddsRatio | PValue | EffectSize |
|---|---|---|---|---|---|
| [3, 3.65) | 6 | 1 | 0 | 0.083246 | -0.031915 |
| [3.65, 4.3) | 17 | 1 | 0 | 0.083241 | -0.036145 |
| [4.3, 4.95) | 31 | 0.96774 | 1.1167 | 0.93193 | 0.0032726 |
| [4.95, 5.6) | 21 | 0.90476 | 8.2105 | 0.23003 | 0.08258 |
| [5.6, 6.25) | 19 | 1 | 0 | 0.08324 | -0.037037 |
| [6.25, 6.9] | 6 | 1 | 0 | 0.083246 | -0.031915 |

Properties, Methods

In this example, sliceMetrics creates six data slices. The accuracy for each data slice is quite high (over 90%).
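The slice boundaries in this output are consistent with six equal-width bins spanning the petal lengths from 3 to 6.9. The sketch below reproduces those edges with linspace and assigns a few hypothetical values to bins with discretize. It is only an illustration: this page does not state how sliceMetrics chooses its bin edges internally.

```matlab
% Seven edges define six equal-width bins over [3, 6.9].
edges = linspace(3,6.9,7);

% Hypothetical petal lengths (not taken from the example data).
petalLength = [3.2 4.5 5.0 6.9];

% discretize returns the bin index of each value. By default, the last bin
% includes its right edge, matching the closed interval [6.25, 6.9].
sliceIndex = discretize(petalLength,edges);
```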

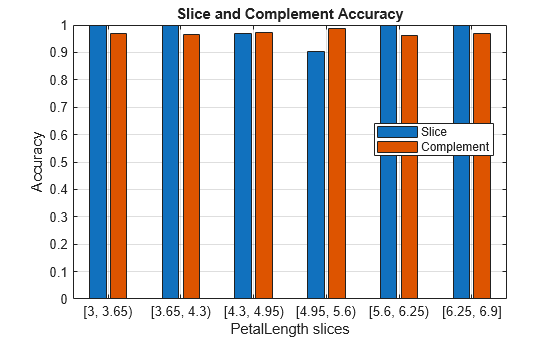

Visualize the accuracy for each slice and its complement in Mdl.X.

plot(sliceResults)

In general, the accuracy for each slice is similar to the accuracy for its slice complement. However, the observations with petal lengths in the range [4.95,5.6) have a slightly lower percentage of correct classifications than all other observations.
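The complement values that the bar graph compares against can be recovered from the displayed table. The following sketch copies the NumObservations and Accuracy columns and derives the complement accuracy for each slice. It also computes an error-rate difference and an error odds ratio. Those two formulas are inferred here because they reproduce the displayed EffectSize and OddsRatio columns; the page itself does not spell them out.

```matlab
% Columns copied from the sliceResults display.
n   = [6 17 31 21 19 6];
acc = [1 1 0.96774 0.90476 1 1];

% Recover counts of correct and incorrect predictions per slice.
nCorrect = round(n .* acc);
nError   = n - nCorrect;

% Complement counts: all observations outside each slice.
compCorrect = sum(nCorrect) - nCorrect;
compError   = sum(nError) - nError;
compN       = compCorrect + compError;

% Accuracy of each slice complement.
compAcc = compCorrect ./ compN;

% Inferred formulas (assumptions that match the displayed values):
% difference of error rates, and ratio of error odds.
effect    = nError./n - compError./compN;
oddsRatio = (nError./nCorrect) ./ (compError./compCorrect);
```

For the slice [4.95, 5.6), the slice accuracy is 19/21 and the complement accuracy is 78/79, which is the gap that the text above describes.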

Train a regression model on a mix of numeric and categorical data. Use sliceMetrics to slice the test data according to two predictors. Compute the mean squared error for the data slices. To improve the general model performance across the data slices, generate synthetic observations and use them to retrain the model.

Load the carbig data set, which contains measurements of cars made in the 1970s and early 1980s. Bin the Model_Year data to form a categorical variable, and combine the variable with a subset of the other measurements into a table. Remove observations with missing values from the table. Then, display the first eight observations in the table.

load carbig

ModelDecade = discretize(Model_Year,[70 80 89],"categorical",["70s","80s"]);

ModelDecade = categorical(ModelDecade,Ordinal=false);

cars = table(Acceleration,Displacement,Horsepower,ModelDecade,Weight,MPG);

cars = rmmissing(cars);

head(cars)

| Acceleration | Displacement | Horsepower | ModelDecade | Weight | MPG |
|---|---|---|---|---|---|
| 12 | 307 | 130 | 70s | 3504 | 18 |
| 11.5 | 350 | 165 | 70s | 3693 | 15 |
| 11 | 318 | 150 | 70s | 3436 | 18 |
| 12 | 304 | 150 | 70s | 3433 | 16 |
| 10.5 | 302 | 140 | 70s | 3449 | 17 |
| 10 | 429 | 198 | 70s | 4341 | 15 |
| 9 | 454 | 220 | 70s | 4354 | 14 |
| 8.5 | 440 | 215 | 70s | 4312 | 14 |

Partition the data into training data and test data. Reserve approximately 50% of the observations for computing slice metrics, and use the rest of the observations for model training.

rng(0,"twister") % For reproducibility of partition

cv = cvpartition(length(cars.MPG),Holdout=0.5);

trainingCars = cars(training(cv),:);

testCars = cars(test(cv),:);

Train a regression tree model using the training data. Then, compute metrics on the test data slices determined by the decade of manufacture and the weight of the car. Partition the numeric Weight values into three bins. Because ModelDecade is a categorical variable with two categories, sliceMetrics creates six data slices.

Mdl = fitrtree(trainingCars,"MPG");

sliceResults = sliceMetrics(Mdl,testCars,["ModelDecade","Weight"],NumBins=3)

sliceResults =

sliceMetrics evaluated on ModelDecade and Weight slices:

| ModelDecade | Weight | NumObservations | Error | TStatistic | PValue | EffectSize |
|---|---|---|---|---|---|---|
| 70s | [1649, 2812.7) | 64 | 18.392 | 0.23812 | 0.81224 | 1.3097 |
| 70s | [2812.7, 3976.3) | 53 | 6.6804 | -4.3456 | 2.3745e-05 | -14.843 |
| 70s | [3976.3, 5140] | 36 | 5.6089 | -4.7484 | 4.0884e-06 | -14.578 |
| 80s | [1649, 2812.7) | 32 | 30.874 | 2.2891 | 0.02727 | 15.972 |
| 80s | [2812.7, 3976.3) | 11 | 64.626 | 2.4672 | 0.032784 | 49.918 |
| 80s | [3976.3, 5140] | 0 | NaN | NaN | NaN | NaN |

Properties, Methods

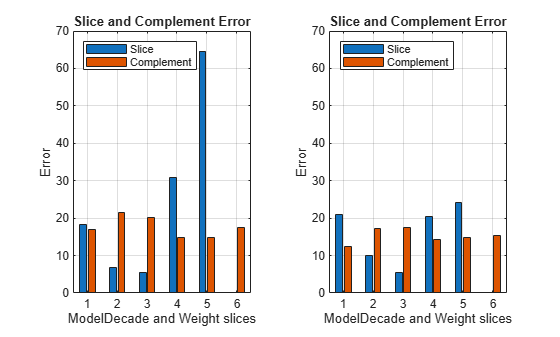

For the cars made in the 80s with a weight in the range [2812.7,3976.3) (that is, the observations in slice 5), the mean squared error (MSE) is much higher than the MSE for the cars in the other data slices.
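To see how a decade and a weight bin combine into the slice numbers used here, consider the following sketch. It assumes three equal-width weight bins over [1649, 5140], which matches the displayed boundaries, and numbers the slices in the row order of the table. The weight and decade values are hypothetical, and the indexing scheme is an illustration rather than the documented behavior of sliceMetrics.

```matlab
% Three equal-width weight bins, consistent with the displayed boundaries.
edges = linspace(1649,5140,4);

% Hypothetical cars: one per nonempty slice in the table.
weight = [2000 3000 4500 2500 3500];
decade = categorical(["70s","70s","70s","80s","80s"],["70s","80s"]);

% Bin index for weight (1 to 3) and category index for decade (1 or 2).
weightBin   = discretize(weight,edges);
decadeIndex = double(decade);

% Slice number in table row order: decade varies slowest, weight fastest.
sliceIndex = (decadeIndex - 1)*3 + weightBin;
```

With this numbering, a car from the 80s in the middle weight bin falls in slice 5.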

Generate 500 synthetic observations using the synthesizeTabularData function. By default, the function uses a binning technique to learn the distribution of the variables in trainingCars before synthesizing the data.

rng(10,"twister") % For reproducibility of data generation

syntheticCars = synthesizeTabularData(trainingCars,500);

Combine the training observations with the synthetic observations. Use the combined data to retrain the regression tree model.

newTrainingCars = [trainingCars;syntheticCars];

newMdl = fitrtree(newTrainingCars,"MPG");

Compute metrics on the same test data slices using the retrained model.

newSliceResults = sliceMetrics(newMdl,testCars,["ModelDecade","Weight"],NumBins=3)

newSliceResults =

sliceMetrics evaluated on ModelDecade and Weight slices:

| ModelDecade | Weight | NumObservations | Error | TStatistic | PValue | EffectSize |
|---|---|---|---|---|---|---|
| 70s | [1649, 2812.7) | 64 | 20.986 | 1.5012 | 0.13736 | 8.5476 |
| 70s | [2812.7, 3976.3) | 53 | 9.9331 | -2.0166 | 0.045209 | -7.2595 |
| 70s | [3976.3, 5140] | 36 | 5.4484 | -4.3062 | 2.6575e-05 | -11.982 |
| 80s | [1649, 2812.7) | 32 | 20.422 | 1.0664 | 0.29206 | 6.206 |
| 80s | [2812.7, 3976.3) | 11 | 24.162 | 1.0643 | 0.30927 | 9.463 |
| 80s | [3976.3, 5140] | 0 | NaN | NaN | NaN | NaN |

Properties, Methods

Visually compare the MSE values for the data slices.

tiledlayout(1,2)

nexttile

plot(sliceResults)

ylim([0 70])

xticklabels(1:6)

legend(Location="northwest")

nexttile

plot(newSliceResults)

ylim([0 70])

xticklabels(1:6)

legend(Location="northwest")

The MSE for slice 5 is lower for the model trained with the training and synthetic observations (newMdl) than for the model trained with only the training data (Mdl).
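The two result tables can also be compared numerically. The sketch below copies the NumObservations and Error columns from both displays, computes the change in MSE per slice, and estimates the overall test MSE as the observation-weighted mean of the slice values. Because the inputs are rounded display values, the results are approximate.

```matlab
% Columns copied from the sliceResults and newSliceResults displays.
n      = [64 53 36 32 11 0];
oldMSE = [18.392 6.6804 5.6089 30.874 64.626 NaN];
newMSE = [20.986 9.9331 5.4484 20.422 24.162 NaN];

% Change in MSE per slice (negative means the retrained model improved).
deltaMSE = newMSE - oldMSE;

% The slices partition the test set, so the observation-weighted mean of
% the slice MSEs gives the overall test MSE. Skip the empty slice.
valid = n > 0;
oldOverall = sum(n(valid).*oldMSE(valid)) / sum(n(valid));
newOverall = sum(n(valid).*newMSE(valid)) / sum(n(valid));
```

With these values, the MSE for slice 5 drops by about 40.5, while the MSE for slices 1 and 2 rises slightly. The overall test MSE goes from about 17.5 to about 15.2.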

Input Arguments

sliceResults

Slice metrics results, specified as a sliceMetrics object.

Metric

Metric to plot, specified as a character vector or string scalar containing one metric name. The following tables describe the supported metrics. The default is "accuracy" for classification models and "error" for regression models.

Classification Metrics

| Value | Description |
|---|---|
| "TruePositives" or "tp" | Number of true positives (TP) |
| "FalseNegatives" or "fn" | Number of false negatives (FN) |
| "FalsePositives" or "fp" | Number of false positives (FP) |
| "TrueNegatives" or "tn" | Number of true negatives (TN) |
| "SumOfTrueAndFalsePositives" or "tp+fp" | Sum of TP and FP |
| "RateOfPositivePredictions" or "rpp" | Rate of positive predictions (RPP), (TP+FP)/(TP+FN+FP+TN) |
| "RateOfNegativePredictions" or "rnp" | Rate of negative predictions (RNP), (TN+FN)/(TP+FN+FP+TN) |
| "FalseNegativeRate", "fnr", or "miss" | False negative rate (FNR), or miss rate, FN/(TP+FN) |
| "TrueNegativeRate", "tnr", or "spec" | True negative rate (TNR), or specificity, TN/(TN+FP) |
| "PositivePredictiveValue", "ppv", "prec", or "precision" | Positive predictive value (PPV), or precision, TP/(TP+FP) |
| "NegativePredictiveValue" or "npv" | Negative predictive value (NPV), TN/(TN+FN) |
| "Accuracy", "accu", or "accuracy" | Accuracy, (TP+TN)/(TP+FN+FP+TN) |
| "F1Score" or "f1score" | F1 score, 2*TP/(2*TP+FP+FN) |
| "OddsRatio" or "oddsratio" | Odds ratio |
| "PValue" or "pvalue" | p-value for the test of the null hypothesis that there is no association between slice membership and error rate (odds ratio = 1), against the alternative hypothesis that there is an association (odds ratio ≠ 1). The software uses Fisher's exact test for small counts (see fishertest) and the Chi-squared test otherwise. |
| "EffectSize" or "effect" | Mean-difference effect size for the test of the null hypothesis that there is no association between slice membership and error rate (odds ratio = 1), against the alternative hypothesis that there is an association (odds ratio ≠ 1) (see meanEffectSize) |
| "TStatistic" or "tstat" | t-statistic for Welch's t-test of the slice error rate against the slice complement error rate (see ttest2) |
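The count-based formulas in this table can be checked with a few lines of arithmetic. The following sketch uses hypothetical confusion counts (tp, fn, fp, and tn are made up for illustration) and evaluates several of the listed metrics exactly as the table defines them.

```matlab
% Hypothetical confusion counts for one data slice.
tp = 40; fn = 10; fp = 5; tn = 45;
total = tp + fn + fp + tn;

accuracy  = (tp + tn) / total;          % (TP+TN)/(TP+FN+FP+TN)
precision = tp / (tp + fp);             % PPV, TP/(TP+FP)
npv       = tn / (tn + fn);             % TN/(TN+FN)
fnr       = fn / (tp + fn);             % miss rate, FN/(TP+FN)
tnr       = tn / (tn + fp);             % specificity, TN/(TN+FP)
rpp       = (tp + fp) / total;          % rate of positive predictions
rnp       = (tn + fn) / total;          % rate of negative predictions
f1        = 2*tp / (2*tp + fp + fn);    % F1 score
```

The rates of positive and negative predictions always sum to 1, because every observation receives exactly one of the two predictions.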

Regression Metrics

| Value | Description |
|---|---|
| "Error" or "error" | Mean squared error (MSE) |
| "TStatistic" or "tstat" | t-statistic for Welch's t-test of the slice error against the slice complement error (see ttest2) |
| "PValue" or "pvalue" | p-value for Welch's t-test of the slice error against the slice complement error (see ttest2) |
| "EffectSize" or "effect" | Mean-difference effect size for the slice error against the slice complement error (see meanEffectSize) |
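The regression statistics compare the errors in a slice with the errors in its complement. As a minimal sketch, the code below runs Welch's t-test with ttest2 on two small vectors of squared errors and computes the difference of their means. The squared errors are hypothetical, and treating per-observation squared errors as the test samples is an assumption about how sliceMetrics applies the test, not something this page confirms.

```matlab
% Hypothetical squared prediction errors for a slice and its complement.
sliceSqErr = [60 70 55 80 65];
compSqErr  = [5 8 6 7 9 10 4 6];

% Welch's t-test: two-sample t-test that does not assume equal variances.
[~,p,~,stats] = ttest2(sliceSqErr,compSqErr,Vartype="unequal");
tstat = stats.tstat;

% Mean-difference effect size: slice MSE minus complement MSE.
meanDiff = mean(sliceSqErr) - mean(compSqErr);
```

A positive t-statistic and effect size indicate that the slice has a higher error than its complement, as for slices 4 and 5 in the car example.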

For most metrics, plot displays bars for the data slices and their complements. For metrics that directly compare slices to their complements, such as "oddsratio", "tstat", "pvalue", and "effect", the plot displays bars for the data slices only.

Data Types: char | string
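The following sketch puts the Metric argument and the output argument together, reusing the iris workflow from the first example. It plots the p-value for each slice and then adjusts a Bar property through the returned handle. The code assumes a release that includes sliceMetrics and access to the fisheriris.csv sample file. The number of Bar objects returned can depend on the metric, so the code uses set, which accepts a single object or an array.

```matlab
% Rebuild the two-class iris training data from the first example.
fisheriris = readtable("fisheriris.csv");
fisheriris.Species = categorical(fisheriris.Species);
versicolorData = fisheriris(fisheriris.Species=="versicolor",:);
virginicaData = fisheriris(fisheriris.Species=="virginica",:);
trainingData = [versicolorData;virginicaData];

% Train the classifier and compute slice metrics by petal length.
Mdl = fitctree(trainingData,"Species");
sliceResults = sliceMetrics(Mdl,"PetalLength");

% Plot a non-default metric and keep the Bar handle(s).
b = plot(sliceResults,Metric="pvalue");

% Modify a Bar property after the graph is displayed.
set(b,"FaceAlpha",0.6)
```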


Version History

Introduced in R2026a
