Use PageRank Algorithm to Rank Websites

This example shows how to use a PageRank algorithm to rank a collection of websites. Although the PageRank algorithm was originally designed to rank search engine results, it also can be more broadly applied to the nodes in many different types of graphs. The PageRank score gives an idea of the relative importance of each graph node based on how it is connected to the other nodes.

Theoretically, the PageRank score is the limiting probability that someone randomly clicking links on each website will arrive at any particular page. So pages with a high score are highly connected and discoverable within the network, and it is more likely a random web surfer will visit that page.

Algorithm Description

At each step in the PageRank algorithm, the score of each page is updated according to,

r = (1-P)/n + P*(A'*(r./d) + s/n);

ris a vector of PageRank scores.Pis a scalar damping factor (usually 0.85), which is the probability that a random surfer clicks on a link on the current page, instead of continuing on another random page.A'is the transpose of the adjacency matrix of the graph.dis a vector containing the out-degree of each node in the graph.dis set to1for nodes with no outgoing edges.nis the scalar number of nodes in the graph.sis the scalar sum of the PageRank scores for pages with no links.

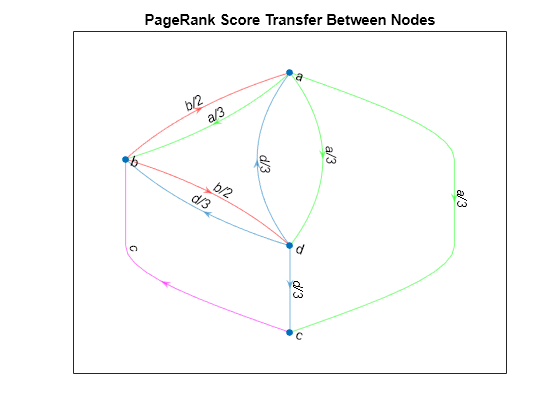

In other words, the rank of each page is largely based on the ranks of the pages that link to it. The term A'*(r./d) picks out the scores of the source nodes that link to each node in the graph, and the scores are normalized by the total number of outbound links of those source nodes. This ensures that the sum of the PageRank scores is always 1. For example, if node 2 links to nodes 1, 3, and 4, then it transfers 1/3 of its PageRank score to each of those nodes during each iteration of the algorithm.

Create a graph that illustrates how each node confers its PageRank score to the other nodes in the graph.

s = {'a' 'a' 'a' 'b' 'b' 'c' 'd' 'd' 'd'};

t = {'b' 'c' 'd' 'd' 'a' 'b' 'c' 'a' 'b'};

G = digraph(s,t);

labels = {'a/3' 'a/3' 'a/3' 'b/2' 'b/2' 'c' 'd/3' 'd/3' 'd/3'};

p = plot(G,'Layout','layered','EdgeLabel',labels);

highlight(p,[1 1 1],[2 3 4],'EdgeColor','g')

highlight(p,[2 2],[1 4],'EdgeColor','r')

highlight(p,3,2,'EdgeColor','m')

title('PageRank Score Transfer Between Nodes')

The centrality function contains an option for calculating PageRank scores.

PageRank with 6 Nodes

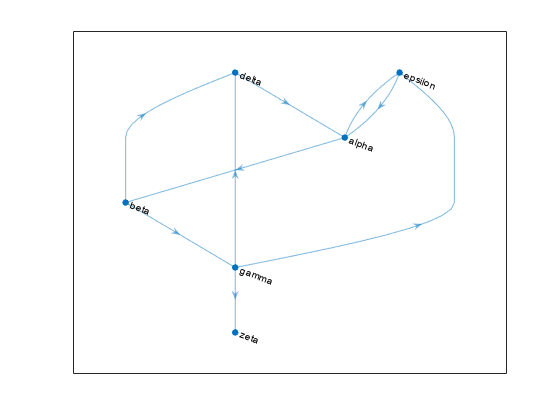

Create and plot a directed graph containing six nodes representing fictitious websites.

s = [1 1 2 2 3 3 3 4 5];

t = [2 5 3 4 4 5 6 1 1];

names = {'http://www.example.com/alpha', 'http://www.example.com/beta', ...

'http://www.example.com/gamma', 'http://www.example.com/delta', ...

'http://www.example.com/epsilon', 'http://www.example.com/zeta'};

G = digraph(s,t,[],names)G =

digraph with properties:

Edges: [9×1 table]

Nodes: [6×1 table]

plot(G,'Layout','layered', ... 'NodeLabel',{'alpha','beta','gamma','delta','epsilon','zeta'})

Calculate the PageRank centrality score for this graph. Use a follow probability (otherwise known as a damping factor) of 0.85.

pr = centrality(G,'pagerank','FollowProbability',0.85)

pr = 6×1

0.3210

0.1706

0.1066

0.1368

0.2008

0.0643

View the PageRank scores and degree information for each page.

G.Nodes.PageRank = pr; G.Nodes.InDegree = indegree(G); G.Nodes.OutDegree = outdegree(G); G.Nodes

ans=6×4 table

Name PageRank InDegree OutDegree

__________________________________ ________ ________ _________

{'http://www.example.com/alpha' } 0.32098 2 2

{'http://www.example.com/beta' } 0.17057 1 2

{'http://www.example.com/gamma' } 0.10657 1 3

{'http://www.example.com/delta' } 0.13678 2 1

{'http://www.example.com/epsilon'} 0.20078 2 1

{'http://www.example.com/zeta' } 0.06432 1 0

The results show that it is not just the number of page links that determines the score, but also the quality. The alpha and gamma websites both have a total degree of 4, however alpha links to both epsilon and beta, which also are highly ranked. gamma is only linked to by one page, beta, which is in the middle of the list. Thus, alpha is scored higher than gamma by the algorithm.

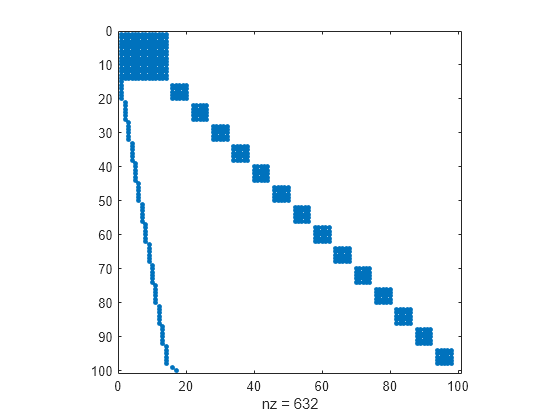

PageRank of Websites on mathworks.com

Load the data in mathworks100.mat and view the adjacency matrix, A. This data was generated in 2015 using an automatic page crawler. The page crawler began at https://www.mathworks.com and followed links to subsequent web pages until the adjacency matrix contained information on the connections of 100 unique web pages.

load mathworks100.mat

spy(A)

Create a directed graph with the sparse adjacency matrix, A, using the URLs contained in U as node names.

G = digraph(A,U)

G =

digraph with properties:

Edges: [632×1 table]

Nodes: [100×1 table]

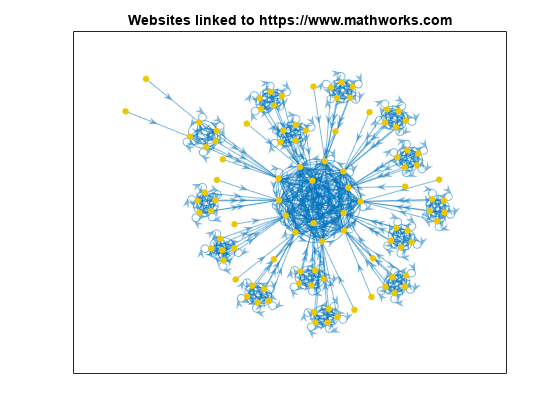

Plot the graph using the force layout.

plot(G,'NodeLabel',{},'NodeColor',[0.93 0.78 0],'Layout','force'); title('Websites linked to https://www.mathworks.com')

Compute the PageRank scores for the graph, G, using 200 iterations and a damping factor of 0.85. Add the scores and degree information to the nodes table of the graph.

pr = centrality(G,'pagerank','MaxIterations',200,'FollowProbability',0.85); G.Nodes.PageRank = pr; G.Nodes.InDegree = indegree(G); G.Nodes.OutDegree = outdegree(G);

View the top 25 resulting scores.

G.Nodes(1:25,:)

ans=25×4 table

Name PageRank InDegree OutDegree

______________________________________________________________________________ ________ ________ _________

{'https://www.mathworks.com' } 0.044342 20 14

{'https://ch.mathworks.com' } 0.043085 20 14

{'https://cn.mathworks.com' } 0.043085 20 14

{'https://jp.mathworks.com' } 0.043085 20 14

{'https://kr.mathworks.com' } 0.043085 20 14

{'https://uk.mathworks.com' } 0.043085 20 14

{'https://au.mathworks.com' } 0.043085 20 14

{'https://de.mathworks.com' } 0.043085 20 14

{'https://es.mathworks.com' } 0.043085 20 14

{'https://fr.mathworks.com' } 0.043085 20 14

{'https://in.mathworks.com' } 0.043085 20 14

{'https://it.mathworks.com' } 0.043085 20 14

{'https://nl.mathworks.com' } 0.043085 20 14

{'https://se.mathworks.com' } 0.043085 20 14

{'https://www.mathworks.com/index.html%3Fnocookie%3Dtrue' } 0.0015 0 1

{'https://www.mathworks.com/company/aboutus/policies_statements/patents.html'} 0.007714 6 6

⋮

Extract and plot a subgraph containing all nodes whose score is greater than 0.005. Color the graph nodes based on their PageRank score.

H = subgraph(G,find(G.Nodes.PageRank > 0.005)); plot(H,'NodeLabel',{},'NodeCData',H.Nodes.PageRank,'Layout','force'); title('Websites linked to https://www.mathworks.com') colorbar

The PageRank scores for the top websites are all quite similar, such that a random web surfer has about a 4.5% chance to land on each page. This small group of highly connected pages forms a clique in the center of the plot. Connected to this central clique are several smaller cliques, which are highly connected amongst themselves.

References

Moler, C. Experiments with MATLAB. Chapter 7: Google PageRank. MathWorks, Inc., 2011.

See Also

digraph | graph | centrality