Systems of Linear Equations

Computational Considerations

One of the most important problems in technical computing is the solution of systems of simultaneous linear equations.

In matrix notation, the general problem takes the following form: Given two matrices A and b, does there exist a unique matrix x, so that Ax= b or xA= b?

It is instructive to consider a 1-by-1 example. For example, does the equation

7x = 21

have a unique solution?

The answer, of course, is yes. The equation has the unique solution x = 3. The solution is easily obtained by division:

x = 21/7 = 3.

The solution is not ordinarily obtained by computing the inverse of 7, that is 7–1= 0.142857..., and then multiplying 7–1 by 21. This would be more work and, if 7–1 is represented to a finite number of digits, less accurate. Similar considerations apply to sets of linear equations with more than one unknown; MATLAB® solves such equations without computing the inverse of the matrix.

Although it is not standard mathematical notation, MATLAB uses the division terminology familiar in the scalar case to describe the solution of a general system of simultaneous equations. The two division symbols, slash, /, and backslash, \, correspond to the two MATLAB functions mrdivide and mldivide. These operators are used for the two situations where the unknown matrix appears on the left or right of the coefficient matrix:

| Denotes the solution to the matrix equation xA = b, obtained using |

| Denotes the solution to the matrix equation Ax = b, obtained using |

Think of “dividing” both sides of the equation Ax = b or xA = b by A. The coefficient matrix A is always in the “denominator.”

The dimension compatibility conditions for x = A\b require the two matrices A and b to have the same number of rows. The solution x then has the same number of columns as b and its row dimension is equal to the column dimension of A. For x = b/A, the roles of rows and columns are interchanged.

In practice, linear equations of the form Ax = b occur more frequently than those of the form xA = b. Consequently, the backslash is used far more frequently than the slash. The remainder of this section concentrates on the backslash operator; the corresponding properties of the slash operator can be inferred from the identity:

(b/A)' = (A'\b').

The coefficient matrix A need not be square. If A has size m-by-n, then there are three cases:

m = n | Square system. Seek an exact solution. |

m > n | Overdetermined system, with more equations than unknowns. Find a least-squares solution. |

m < n | Underdetermined system, with fewer equations than unknowns. Find a basic solution with at most m nonzero components. |

The mldivide Algorithm

The mldivide operator employs different solvers to handle different kinds of coefficient matrices. The various cases are diagnosed automatically by examining the coefficient matrix. For more information, see the “Algorithms” section of the mldivide reference page.

General Solution

The general solution to a system of linear equations Ax= b describes all possible solutions. You can find the general solution by:

Solving the corresponding homogeneous system Ax = 0. Do this using the

nullcommand, by typingnull(A). This returns a basis for the solution space to Ax = 0. Any solution is a linear combination of basis vectors.Finding a particular solution to the nonhomogeneous system Ax =b.

You can then write any solution to Ax= b as the sum of the particular solution to Ax =b, from step 2, plus a linear combination of the basis vectors from step 1.

The rest of this section describes how to use MATLAB to find a particular solution to Ax =b, as in step 2.

Square Systems

The most common situation involves a square coefficient matrix A and a single right-hand side column vector b.

Nonsingular Coefficient Matrix

If the matrix A is nonsingular, then the solution, x = A\b, is the same size as b. For example:

A = pascal(3);

u = [3; 1; 4];

x = A\u

x =

10

-12

5It can be confirmed that A*x is exactly equal to u.

If A and b are square and the same size, x= A\b is also that size:

b = magic(3);

X = A\b

X =

19 -3 -1

-17 4 13

6 0 -6It can be confirmed that A*x is exactly equal to b.

Both of these examples have exact, integer solutions. This is because the coefficient matrix was chosen to be pascal(3), which is a full rank matrix (nonsingular).

Singular Coefficient Matrix

A square matrix A is singular if it does not have linearly

independent columns. If A is singular, the solution to

Ax = b either does not exist, or is not unique. The

backslash operator, A\b, issues a warning if A is

nearly singular or if it detects exact singularity.

If A is singular and Ax = b has a solution, you can find a particular solution that is not unique, by typing

P = pinv(A)*b

pinv(A) is a pseudoinverse of A. If

Ax = b does not have an exact solution, then

pinv(A) returns a least-squares solution.

For example:

A = [ 1 3 7

-1 4 4

1 10 18 ]is singular, as you can verify by typing

rank(A)

ans =

2Since A is not full rank, it has some singular values equal to zero.

Exact Solutions. For b =[5;2;12],

the equation Ax = b has an exact solution, given

by

pinv(A)*b

ans =

0.3850

-0.1103

0.7066Verify that pinv(A)*b is an exact solution by typing

A*pinv(A)*b

ans =

5.0000

2.0000

12.0000Least-Squares Solutions. However, if b =

[3;6;0], Ax = b does not have an exact

solution. In this case, pinv(A)*b returns a least-squares solution. If

you type

A*pinv(A)*b

ans =

-1.0000

4.0000

2.0000you do not get back the original vector b.

You can determine whether Ax =b has an exact

solution by finding the row reduced echelon form of the augmented matrix [A

b]. To do so for this example, enter

rref([A b])

ans =

1.0000 0 2.2857 0

0 1.0000 1.5714 0

0 0 0 1.0000Since the bottom row contains all zeros except for the last entry, the equation does not

have a solution. In this case, pinv(A) returns a least-squares

solution.

Overdetermined Systems

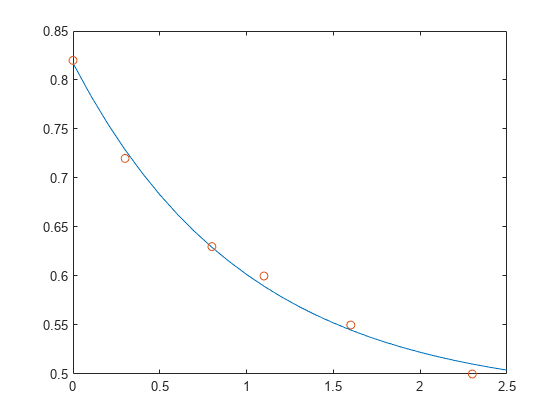

This example shows how overdetermined systems are often encountered in various kinds of curve fitting to experimental data.

A quantity y is measured at several different values of time t to produce the following observations. You can enter the data and view it in a table with the following statements.

t = [0 .3 .8 1.1 1.6 2.3]'; y = [.82 .72 .63 .60 .55 .50]'; B = table(t,y)

B=6×2 table

t y

___ ____

0 0.82

0.3 0.72

0.8 0.63

1.1 0.6

1.6 0.55

2.3 0.5

Try modeling the data with a decaying exponential function

.

The preceding equation says that the vector y should be approximated by a linear combination of two other vectors. One is a constant vector containing all ones and the other is the vector with components exp(-t). The unknown coefficients, and , can be computed by doing a least-squares fit, which minimizes the sum of the squares of the deviations of the data from the model. There are six equations in two unknowns, represented by a 6-by-2 matrix.

E = [ones(size(t)) exp(-t)]

E = 6×2

1.0000 1.0000

1.0000 0.7408

1.0000 0.4493

1.0000 0.3329

1.0000 0.2019

1.0000 0.1003

Use the backslash operator to get the least-squares solution.

c = E\y

c = 2×1

0.4760

0.3413

In other words, the least-squares fit to the data is

The following statements evaluate the model at regularly spaced increments in t, and then plot the result together with the original data:

T = (0:0.1:2.5)'; Y = [ones(size(T)) exp(-T)]*c; plot(T,Y,'-',t,y,'o')

E*c is not exactly equal to y, but the difference might well be less than measurement errors in the original data.

A rectangular matrix A is rank deficient if it does not have linearly independent columns. If A is rank deficient, then the least-squares solution to AX = B is not unique. A\B issues a warning if A is rank deficient and produces a least-squares solution. You can use lsqminnorm to find the solution X that has the minimum norm among all solutions.

Underdetermined Systems

This example shows how the solution to underdetermined systems is not unique. Underdetermined linear systems involve more unknowns than equations. The matrix left division operation in MATLAB finds a basic least-squares solution, which has at most m nonzero components for an m-by-n coefficient matrix.

Here is a small, random example:

R = [6 8 7 3; 3 5 4 1] rng(0); b = randi(8,2,1)

R =

6 8 7 3

3 5 4 1

b =

7

8 The linear system Rp = b involves two equations in four unknowns. Since the coefficient matrix contains small integers, it is appropriate to use the format command to display the solution in rational format. The particular solution is obtained with

format rat

p = R\b

p =

0

17/7

0

-29/7 One of the nonzero components is p(2) because R(:,2) is the column of R with largest norm. The other nonzero component is p(4) because R(:,4) dominates after R(:,2) is eliminated.

The complete general solution to the underdetermined system can be characterized by adding p to an arbitrary linear combination of the null space vectors, which can be found using the null function with an option requesting a rational basis.

Z = null(R,'r')

Z =

-1/2 -7/6

-1/2 1/2

1 0

0 1 It can be confirmed that R*Z is zero and that the residual R*x - b is small for any vector x, where

x = p + Z*q

Since the columns of Z are the null space vectors, the product Z*q is a linear combination of those vectors:

To illustrate, choose an arbitrary q and construct x.

q = [-2; 1]; x = p + Z*q;

Calculate the norm of the residual.

format short

norm(R*x - b)ans = 2.6645e-15

When infinitely many solutions are available, the solution with minimum norm is of particular interest. You can use lsqminnorm to compute the minimum-norm least-squares solution. This solution has the smallest possible value for norm(p).

p = lsqminnorm(R,b)

p =

-207/137

365/137

79/137

-424/137 Solving for Several Right-Hand Sides

Some problems are concerned with solving linear systems that have the same coefficient matrix A, but different right-hand sides b. When the different values of b are available at the same time, you can construct b as a matrix with several columns and solve all of the systems of equations at the same time using a single backslash command: X = A\[b1 b2 b3 …].

However, sometimes the different values of b are not all available at the same time, which means you need to solve several systems of equations consecutively. When you solve one of these systems of equations using slash (/) or backslash (\), the operator factorizes the coefficient matrix A and uses this matrix decomposition to compute the solution. However, each subsequent time you solve a similar system of equations with a different b, the operator computes the same decomposition of A, which is a redundant computation.

The solution to this problem is to precompute the decomposition of A, and then reuse the factors to solve for the different values of b. In practice, however, precomputing the decomposition in this manner can be difficult since you need to know which decomposition to compute (LU, LDL, Cholesky, and so on) as well as how to multiply the factors to solve the problem. For example, with LU decomposition you need to solve two linear systems to solve the original system Ax = b:

[L,U] = lu(A); x = U \ (L \ b);

Instead, the recommended method for solving linear systems with several consecutive right-hand sides is to use decomposition objects. These objects enable you to leverage the performance benefits of precomputing the matrix decomposition, but they do not require knowledge of how to use the matrix factors. You can replace the previous LU decomposition with:

dA = decomposition(A,'lu');

x = dA\b;If you are unsure which decomposition to use, decomposition(A) chooses the correct type based on the properties of A, similar to what backslash does.

Here is a simple test of the possible performance benefits of this approach. The test solves the same sparse linear system 100 times using both backslash (\) and decomposition.

n = 1e3; A = sprand(n,n,0.2) + speye(n); b = ones(n,1); % Backslash solution tic for k = 1:100 x = A\b; end toc

Elapsed time is 9.006156 seconds.

% decomposition solution tic dA = decomposition(A); for k = 1:100 x = dA\b; end toc

Elapsed time is 0.374347 seconds.

For this problem, the decomposition solution is much faster than using backslash alone, yet the syntax remains simple.

Iterative Methods

If the coefficient matrix A is large and sparse, factorization methods are generally not efficient. Iterative methods generate a series of approximate solutions. MATLAB provides several iterative methods to handle large, sparse input matrices.

| Function | Description |

|---|---|

pcg | Preconditioned conjugate gradients method. This method is appropriate for Hermitian positive definite coefficient matrix A. |

bicg | BiConjugate Gradients Method |

bicgstab | BiConjugate Gradients Stabilized Method |

bicgstabl | BiCGStab(l) Method |

cgs | Conjugate Gradients Squared Method |

gmres | Generalized Minimum Residual Method |

lsqr | LSQR Method |

minres | Minimum Residual Method. This method is appropriate for Hermitian coefficient matrix A. |

qmr | Quasi-Minimal Residual Method |

symmlq | Symmetric LQ Method |

tfqmr | Transpose-Free QMR Method |

Multithreaded Computation

MATLAB supports multithreaded computation for a number of linear algebra and element-wise numerical functions. These functions automatically execute on multiple threads. For a function or expression to execute faster on multiple CPUs, a number of conditions must be true:

The function performs operations that easily partition into sections that execute concurrently. These sections must be able to execute with little communication between processes. They should require few sequential operations.

The data size is large enough so that any advantages of concurrent execution outweigh the time required to partition the data and manage separate execution threads. For example, most functions speed up only when the array contains several thousand elements or more.

The operation is not memory-bound; processing time is not dominated by memory access time. As a general rule, complicated functions speed up more than simple functions.

inv, lscov, linsolve, and mldivide show significant increase in speed on large double-precision arrays (on order of 10,000 elements or more) when multithreading is enabled.

See Also

mldivide | mrdivide | pinv | decomposition | lsqminnorm