Deploying Generated Code on AWS GPUs for Deep Learning

In this video, you’ll walk through a general workflow for deploying generated CUDA® code from a deep learning example in MATLAB® to the cloud.

MATLAB provides a complete integrated workflow for engineers and scientists to explore, prototype, and deploy deep learning algorithms in a familiar development environment with built-in higher-level apps and libraries.

Using GPU Coder™, you can generate CUDA code for the complete deep learning application, which includes the pre-processing and post-processing logic around a trained network, and deploy it to a cloud platform such as AWS® or Microsoft Azure®.

Published: 24 May 2019

Hello, my name is Sarat and today I’ll be showing you how you can generate CUDA code using GPU Coder, deploy it to Amazon Web Services, and have a simple web application interact with the generated code.

Before I jump into the actual implementation, here’s the result of the demo.

It is a simple web application that runs on a CUDA executable created using GPU Coder. It lets users upload an image and classify it using a pretrained deep learning network, AlexNet.

I can use this picture showing peppers from our documentation page, upload it to the server, and click Predict to get the classification output from AlexNet.

Here’s an overview of the demo. We generate CUDA code using GPU Coder. We then upload the generated source code to an Amazon S3 bucket and deploy it to an EC2 instance (a virtual machine) using AWS CodeDeploy.

Once we have the source code on the EC2 instance, we can build an executable using the NVIDIA CUDA compiler.

I will explain each of these steps in greater detail in a few minutes.

To begin with, we will use a MATLAB function from which CUDA code can then be generated.

This is a MATLAB function that I have written by modifying a deep learning example from our GPU Coder documentation. You can find a link to this page below.

In this function, we read an image using the filename input, pre-process the image, feed it to the pretrained network ‘alexnet’, and return the classification output.

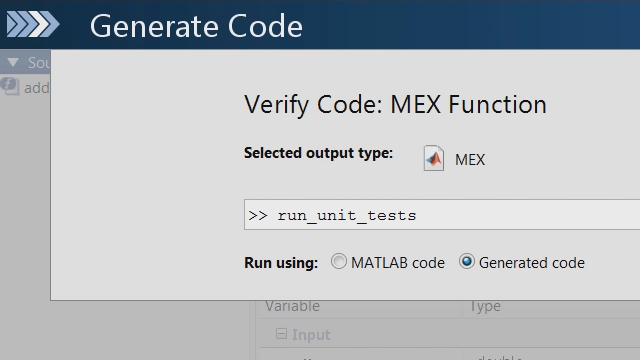

Once we have created a MATLAB function, we can use a separate script to generate either a static library, a dynamic library, or an executable. In this case, we are generating a static library.

We are also using the packNGo function to package the dependencies for the ‘imread’ function.

Running the codegen command in this case will give you a zip file containing all the dependencies and a codegen folder containing the generated code.

Extract the zip file and copy all of its files to the codegen directory. The generated code will contain a template main.cu file and a corresponding header file.

We need to modify these files to accept a filename as input. After making the required changes to the main file, we can deploy the generated code to the cloud.

If you want, you can also integrate this application with your existing code using this main file.

Moving on to the AWS part of the workflow:

For this demo, I am using this AMI from the AWS Marketplace since it provides all the CUDA libraries required to run the generated code on a GPU.

We also have a File Exchange post describing some of the steps in greater detail.

By following the steps there, you can configure all the AWS components needed for this demo.

Once you have created an EC2 instance and an S3 bucket, you are ready to upload the codegen directory to the S3 bucket.

We also need a YAML file that specifies the source and destination paths for the source code.
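As a rough illustration of what such a YAML file can look like, here is a minimal Python sketch that writes a CodeDeploy AppSpec file for a Linux EC2 instance. The destination path and the helper names `render_appspec` and `write_appspec` are assumptions made for this example; the file used in the video may contain additional sections.

```python
from pathlib import Path


def render_appspec(destination):
    """Return the text of a minimal AppSpec file for an EC2/Linux target."""
    # "source: /" selects everything in the uploaded bundle;
    # "destination" is the directory on the instance that receives it.
    return (
        "version: 0.0\n"
        "os: linux\n"
        "files:\n"
        "  - source: /\n"
        f"    destination: {destination}\n"
    )


def write_appspec(bundle_dir, destination):
    """Write appspec.yml into the root of the bundle directory."""
    # CodeDeploy expects appspec.yml at the root of the revision.
    path = Path(bundle_dir) / "appspec.yml"
    path.write_text(render_appspec(destination))
    return path


if __name__ == "__main__":
    # Hypothetical destination path, chosen only for illustration.
    print(render_appspec("/home/ubuntu/codegen"))
```

Calling `write_appspec("codegen", "/home/ubuntu/codegen")` would place the file next to the generated sources so that it travels with them in the same upload.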

We’ll then use the AWS command line interface to upload the files.
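The transcript does not show the exact command, so the following is only a sketch of one plausible option: the AWS CLI `aws deploy push` command, which bundles a directory into an archive and uploads it to S3 as a CodeDeploy revision. The application name, bucket, and key below are hypothetical placeholders, and the Python code only assembles the command so that each argument is explicit.

```python
import shlex


def push_command(app_name, bucket, key, source_dir):
    """Build an 'aws deploy push' command as an argument list."""
    return [
        "aws", "deploy", "push",
        "--application-name", app_name,            # existing CodeDeploy application
        "--s3-location", f"s3://{bucket}/{key}",   # where the bundle is stored
        "--source", source_dir,                    # directory containing appspec.yml
    ]


if __name__ == "__main__":
    # All four values are placeholders for illustration.
    cmd = push_command("gpu-coder-demo", "my-codegen-bucket", "codegen.zip", "codegen")
    print(shlex.join(cmd))
    # To run it for real: subprocess.run(cmd, check=True)
```

Keeping the command as a list rather than a single string avoids shell quoting problems if a path contains spaces.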

We have successfully uploaded the files to Amazon S3.

We’ll now use CodeDeploy to deploy our source code to an EC2 instance.

Using S3 with CodeDeploy will allow us to maintain versions or revisions of our source code and have a continuous deployment pipeline.

Assuming that you have already configured CodeDeploy, we will create a deployment for the application.

We’ll select S3 here, enter the path to the S3 bucket, and click Create deployment.
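The video performs this step in the console. For readers who prefer scripting it, a roughly equivalent AWS CLI call is `aws deploy create-deployment`. The sketch below only assembles that command; the application name, deployment group, bucket, and key are hypothetical, and the bundle type is assumed to be a zip archive.

```python
import shlex


def create_deployment_command(app_name, group_name, bucket, key, bundle_type="zip"):
    """Build an 'aws deploy create-deployment' command for an S3 revision."""
    # The CLI shorthand for an S3 revision is a comma-separated key=value list.
    s3_location = f"bucket={bucket},key={key},bundleType={bundle_type}"
    return [
        "aws", "deploy", "create-deployment",
        "--application-name", app_name,
        "--deployment-group-name", group_name,
        "--s3-location", s3_location,
    ]


if __name__ == "__main__":
    # Placeholder names for illustration only.
    cmd = create_deployment_command(
        "gpu-coder-demo", "gpu-instances", "my-codegen-bucket", "codegen.zip"
    )
    print(shlex.join(cmd))
```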

We now have a codegen directory on our newly created EC2 instance, in the path specified in the CodeDeploy YAML file.

We’ll use the deployed source code to build an executable.

To do this, we’ll update the path to the source code in the makefile and run it to build the static library.

We’ll then use the NVIDIA CUDA compiler to build an executable by compiling the modified main file and linking it against this static library.
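To make the final link step concrete, here is a hedged sketch of what an `nvcc` invocation could look like. The file names, include directory, and output name are hypothetical, and the `-lcudnn` and `-lcublas` flags assume the code was generated for the cuDNN target; the actual build in the video may need further libraries, for example for the packaged ‘imread’ dependencies.

```python
import shlex


def nvcc_link_command(main_cu, static_lib, output, include_dir):
    """Build an nvcc command that compiles main.cu and links a static library."""
    return [
        "nvcc",
        main_cu,              # modified main file that accepts a filename
        static_lib,           # static library built by the makefile
        f"-I{include_dir}",   # where the generated headers live
        "-o", output,         # name of the resulting executable
        "-lcudnn", "-lcublas",  # assumed GPU library dependencies
    ]


if __name__ == "__main__":
    # Placeholder paths for illustration only.
    cmd = nvcc_link_command("main.cu", "alexnet_predict.a", "classifier", "codegen")
    print(shlex.join(cmd))
```

The static library is listed before the `-l` flags so that the linker resolves its references to cuDNN and cuBLAS symbols in the libraries that follow it.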

You now have a CUDA executable that runs on a GPU in the cloud and allows you to classify images using AlexNet.

You can follow along with the steps outlined in this video by visiting the File Exchange link below.