Pick-and-Place Workflow Using Stateflow for MATLAB

This example shows how to set up a pick-and-place workflow for a robotic manipulator like the KINOVA® Gen3.

The pick-and-place workflow implemented in this example can be adapted to different scenarios, planners, simulation platforms, and object detection options. The example shown here uses Model Predictive Control for planning and control, and simulates the robot in MATLAB.

Overview

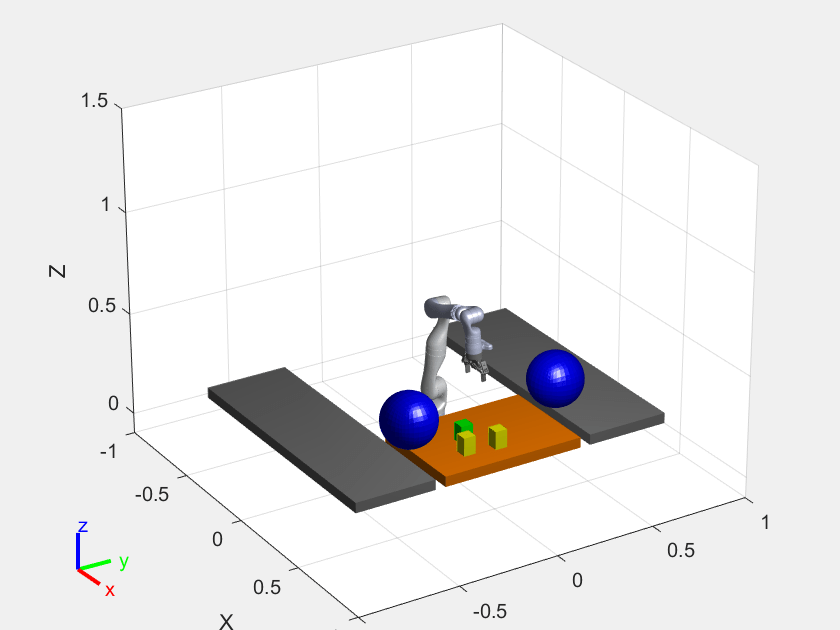

This example sorts detected objects and places them on shelves using a KINOVA Gen3 manipulator. The example uses tools from four toolboxes:

Robotics System Toolbox™ is used to model, simulate, and visualize the manipulator, and for collision-checking.

Model Predictive Control Toolbox™ and Optimization Toolbox™ are used to generate optimized, collision-free trajectories for the manipulator to follow.

Stateflow® is used to schedule the high-level tasks in the example and step from task to task.

This example builds on key concepts from two related examples:

Plan and Execute Task- and Joint-Space Trajectories Using Kinova Gen3 Manipulator shows how to generate and simulate interpolated joint trajectories to move from an initial to a desired end-effector pose.

Plan and Execute Collision-Free Trajectories Using KINOVA Gen3 Manipulator shows how to plan closed-loop collision-free robot trajectories to a desired end-effector pose using nonlinear model predictive control.

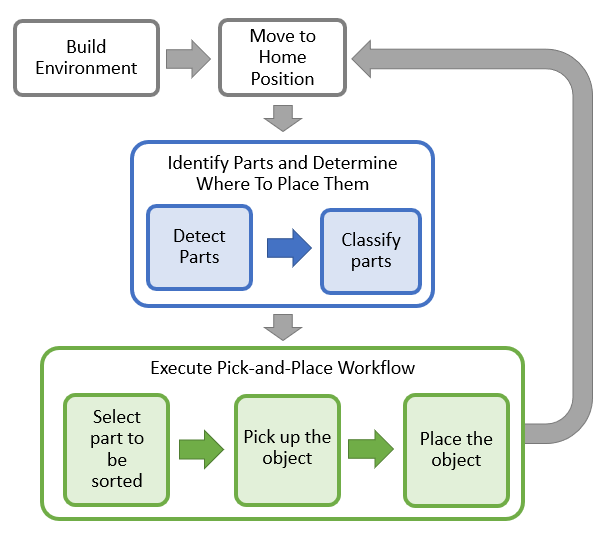

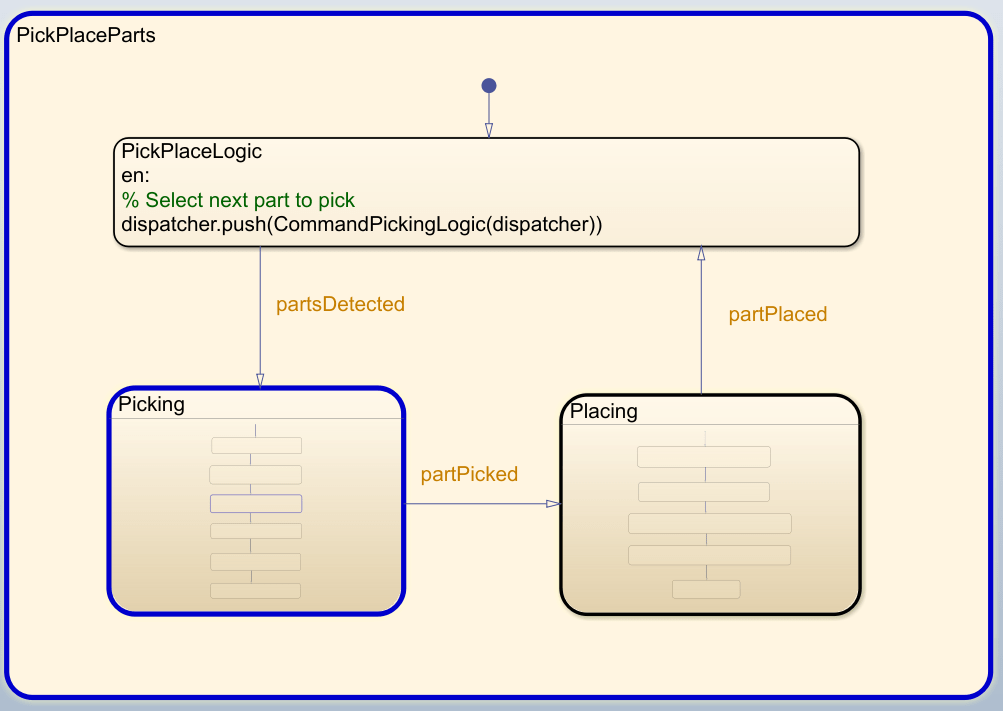

Stateflow Chart

This example uses a Stateflow chart to schedule tasks in the example. Open the chart to examine the contents and follow state transitions during chart execution.

edit exampleHelperFlowChartPickPlace.sfx

The chart dictates how the manipulator interacts with the objects, or parts. It consists of basic initialization steps, followed by two main sections:

Identify Parts and Determine Where to Place Them

Execute Pick-and-Place Workflow

Initialize the Robot and Environment

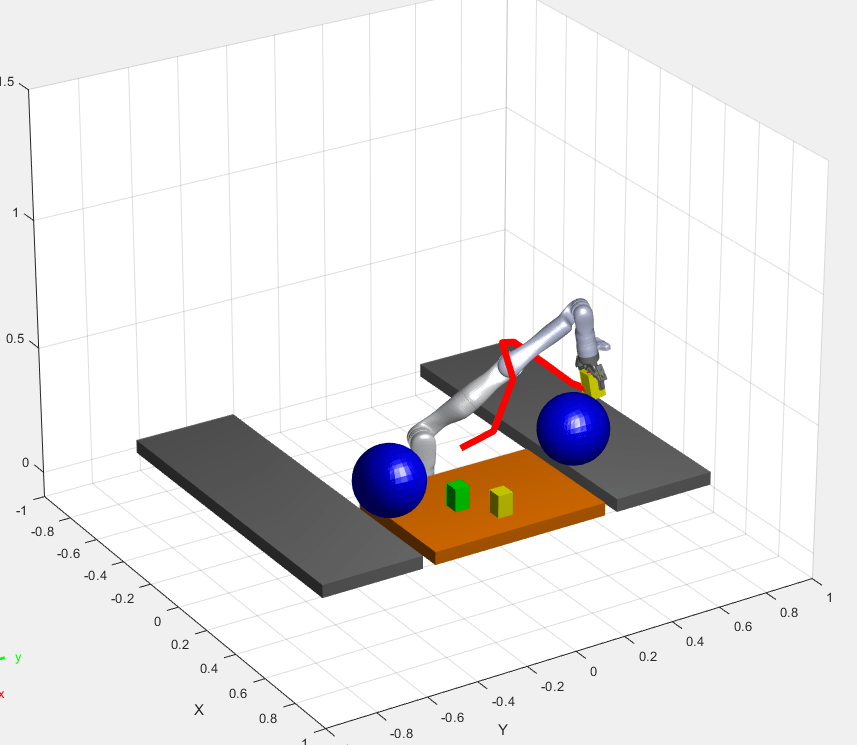

First, the chart creates an environment consisting of the KINOVA Gen3 manipulator, three parts to be sorted, the shelves used for sorting, and a blue obstacle. Next, the robot moves to the home position.

Identify the Parts and Determine Where to Place Them

In the first step of the identification phase, the parts must be detected. The exampleCommandDetectParts function directly gives the object poses. Replace this function with your own object detection algorithm based on your sensors or objects.

Next, the parts must be classified. The exampleCommandClassifyParts function classifies the parts into two types to determine where to place them (top or bottom shelf). Again, you can replace this function with any method for classifying parts.
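As a rough illustration of swapping in your own classifier, the following sketch sorts parts into two types by height. The part fields (name, height), the threshold value, and the type numbering are assumptions made for this sketch; they are not taken from exampleCommandClassifyParts.

```matlab
% Hypothetical part descriptions; the field names are illustrative only.
parts = struct("name", {"partA", "partB", "partC"}, ...
               "height", {0.10, 0.06, 0.12});

heightThreshold = 0.08;   % assumed decision boundary, in meters

% Assign type 1 to tall parts and type 2 to short parts.
partType = zeros(1, numel(parts));
for k = 1:numel(parts)
    if parts(k).height > heightThreshold
        partType(k) = 1;
    else
        partType(k) = 2;
    end
end

disp(partType)
```

In a setup like this, the type number could serve as the index of the placing pose for that part, although that mapping is also an assumption of the sketch.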

Execute Pick-and-Place Workflow

Once parts are identified and their destinations have been assigned, the manipulator must iterate through the parts and move them onto the appropriate shelves.

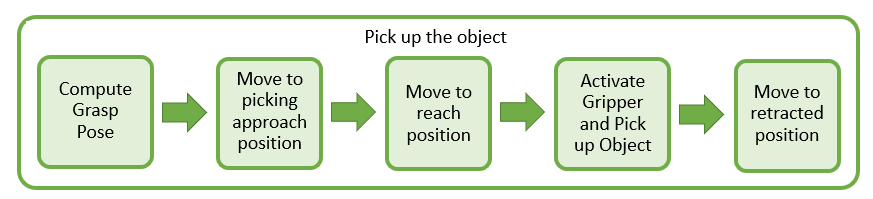

Pick up the Object

The picking phase moves the robot to the object, picks it up, and moves to a safe position, as shown in the following diagram:

The exampleCommandComputeGraspPose function computes a task-space grasp pose for each part. Intermediate steps for approaching and reaching towards the part are also defined relative to the object.

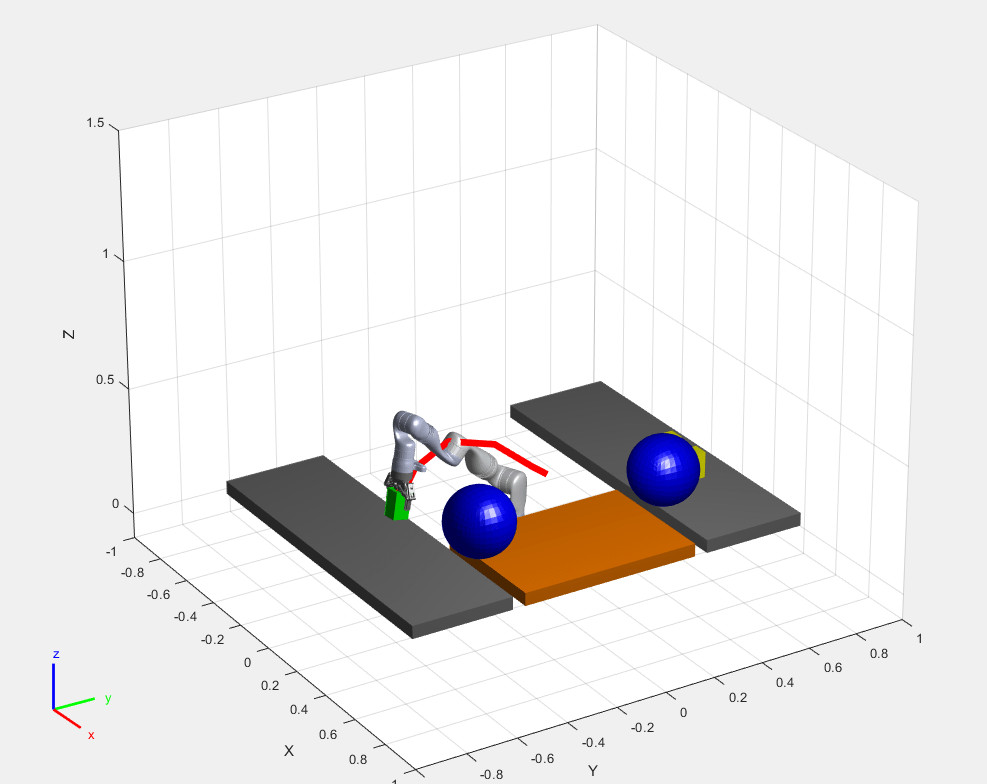
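The idea of defining grasp and approach poses relative to the object can be sketched with homogeneous transforms. The part pose, the grasp offset, and the approach clearance below are hypothetical values chosen for illustration, and the variable names are not those used inside exampleCommandComputeGraspPose.

```matlab
% Hypothetical part pose in the world frame (position in meters).
partPose = trvec2tform([0.5 0.2 0.26]);

% Grasp slightly above the part center with the tool z-axis pointing down.
% Rotating by pi about the y-axis flips the tool frame to face the part.
graspOffset = trvec2tform([0 0 0.04]);               % assumed offset
graspPose = partPose*graspOffset*axang2tform([0 1 0 pi]);

% Approach waypoint directly above the grasp pose, offset in the world frame.
approachPose = trvec2tform([0 0 0.10])*graspPose;    % assumed clearance

graspPosition = tform2trvec(graspPose);
approachPosition = tform2trvec(approachPose);
toolZAxis = graspPose(1:3,3)';   % tool z-axis expressed in world coordinates
```

Premultiplying by a translation offsets the pose along world axes, while postmultiplying offsets it along the axes of the part frame. The sketch uses both forms deliberately.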

This robot picks up objects using a simulated gripper. When the gripper is activated, exampleCommandActivateGripper adds the collision mesh for the part onto the rigidBodyTree representation of the robot, which simulates grabbing it. Collision detection includes this object while it is attached. Then, the robot moves to a retracted position away from the other parts.
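A minimal sketch of this attach-and-detach idea, assuming a stand-in tree with a single body named gripper rather than the full KINOVA model, is shown below. The body name pickedPart, the joint name, the box dimensions, and the offset are hypothetical; the actual helper may attach the part differently.

```matlab
% Stand-in robot: one fixed "gripper" body attached to the base.
robot = rigidBodyTree("DataFormat", "column");
addBody(robot, rigidBody("gripper"), robot.BaseName);
numBodiesBefore = robot.NumBodies;

% "Activate" the gripper: attach the part, with collision geometry,
% as a fixed child of the gripper body.
part = rigidBody("pickedPart");                          % hypothetical name
partJoint = rigidBodyJoint("pickedPartJoint", "fixed");
setFixedTransform(partJoint, trvec2tform([0 0 0.05]));   % assumed offset
part.Joint = partJoint;
addCollision(part, "box", [0.06 0.06 0.10]);             % assumed part size
addBody(robot, part, "gripper");
numBodiesAttached = robot.NumBodies;

% "Deactivate" the gripper: remove the part from the tree again.
removeBody(robot, "pickedPart");
numBodiesAfter = robot.NumBodies;
```

While the part body is in the tree, collision checks on the robot also take the collision geometry of the part into account.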

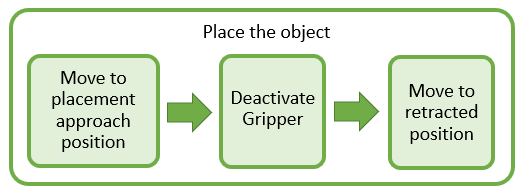

Place the Object

The robot then places the object on the appropriate shelf.

As with the picking workflow, the placement approach and retracted positions are computed relative to the known desired placement position. The gripper is deactivated using exampleCommandActivateGripper, which removes the part from the robot.

Moving the Manipulator to a Specified Pose

Most of the task execution consists of instructing the robot to move between different specified poses. The exampleHelperPlanExecuteTrajectoryPickPlace function defines a solver using a nonlinear model predictive controller (see Nonlinear MPC (Model Predictive Control Toolbox)) that computes a feasible, collision-free optimized reference trajectory using nlmpcmove (Model Predictive Control Toolbox) and checkCollision. The obstacles are represented as spheres to ensure the accurate approximation of the constraint Jacobian in the definition of the nonlinear model predictive control algorithm (see [1]). The helper function then simulates the motion of the manipulator under computed-torque control as it tracks the reference trajectory using the jointSpaceMotionModel object, and updates the visualization. The helper function is called from the Stateflow chart via exampleCommandMoveToTaskConfig, which defines the correct inputs.

This workflow is examined in detail in Plan and Execute Collision-Free Trajectories Using KINOVA Gen3 Manipulator. The controller is used to ensure collision-free motion. For simpler trajectories where the paths are known to be obstacle-free, trajectories could be executed using trajectory generation tools and simulated using the manipulator motion models. See Plan and Execute Task- and Joint-Space Trajectories Using Kinova Gen3 Manipulator.
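To give a feel for the sphere-based obstacle checks mentioned above, the following sketch calls checkCollision on two collisionSphere objects. The radii and positions are arbitrary illustration values, and the sketch checks only a single pair of spheres, not the full set of robot bodies and obstacles that the planner considers.

```matlab
% Two spheres standing in for an obstacle and a robot body (assumed sizes).
obstacle = collisionSphere(0.10);
bodySphere = collisionSphere(0.05);

% Separated case: centers are 0.30 m apart along the x-axis.
bodySphere.Pose = trvec2tform([0.30 0 0]);
[isColliding, sepDist] = checkCollision(obstacle, bodySphere);

% Overlapping case: centers are 0.12 m apart, less than the summed radii.
bodySphere.Pose = trvec2tform([0.12 0 0]);
[isCollidingClose, sepDistClose] = checkCollision(obstacle, bodySphere);
```

For two spheres that are not in collision, the separation distance is the distance between their centers minus the sum of their radii, which is 0.15 m in the first case. When the geometries collide, checkCollision returns NaN for the separation distance.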

Task Scheduling in a Stateflow Chart

This example uses a Stateflow chart to direct the workflow in MATLAB®. For more information on creating Stateflow charts, see Create Stateflow Charts for Execution as MATLAB Objects (Stateflow).

The Stateflow chart directs task execution in MATLAB by using command functions. When a command finishes executing, it sends an input event to wake up the chart and proceed to the next step of the task execution. For details, see Execute a Standalone Chart (Stateflow).

Run and Visualize the Simulation

This simulation uses a KINOVA Gen3 manipulator with a Robotiq gripper. Load the robot model from a .mat file as a rigidBodyTree object.

load('exampleHelperKINOVAGen3GripperColl.mat');

Initialize the Pick and Place Coordinator

Set the initial robot configuration. Create the coordinator, which handles the robot control, by giving the robot model, initial configuration, and end-effector name.

currentRobotJConfig = homeConfiguration(robot);

coordinator = exampleHelperCoordinatorPickPlace(robot,currentRobotJConfig, "gripper");

Specify the home configuration and two poses for placing objects of different types.

coordinator.HomeRobotTaskConfig = trvec2tform([0.4, 0, 0.6])*axang2tform([0 1 0 pi]);

coordinator.PlacingPose{1} = trvec2tform([0.23 0.62 0.33])*axang2tform([0 1 0 pi]);

coordinator.PlacingPose{2} = trvec2tform([0.23 -0.62 0.33])*axang2tform([0 1 0 pi]);

Run and Visualize the Simulation

Connect the coordinator to the Stateflow chart. Once started, the Stateflow chart continuously cycles through the states of detecting objects, picking them up, and placing them in the correct staging area.

coordinator.FlowChart = exampleHelperFlowChartPickPlace('coordinator', coordinator);

Use a dialog to start the pick-and-place task execution. Click Yes in the dialog to begin the simulation.

answer = questdlg('Do you want to start the pick-and-place job now?', ...
    'Start job','Yes','No', 'No');

switch answer
    case 'Yes'
        % Trigger event to start Pick and Place in the Stateflow Chart
        coordinator.FlowChart.startPickPlace;
    case 'No'
        % End Pick and Place
        coordinator.FlowChart.endPickPlace;
        delete(coordinator.FlowChart);
        delete(coordinator);
end

Ending the Pick-and-Place Task

The Stateflow chart finishes executing automatically after three failed attempts to detect new objects. To end the pick-and-place task prematurely, uncomment and execute the following lines of code or press Ctrl+C in the Command Window.

% coordinator.FlowChart.endPickPlace;
% delete(coordinator.FlowChart);
% delete(coordinator);
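The automatic stopping rule described above can be mimicked in plain MATLAB, without Stateflow, as a small state machine. This is only an analogue of the chart logic: the state names, the simulated detection results in partQueue, and the choice to reset the failure counter after a successful detection are all assumptions made for this sketch.

```matlab
% Plain-MATLAB analogue of the chart logic (not Stateflow code).
% Simulated detection results: parts found on each detection attempt.
partQueue = {[1 2 3], [], [], []};   % hypothetical data
maxFailedDetections = 3;

failedDetections = 0;
attempt = 0;
placedParts = [];
state = "detect";

while state ~= "done"
    switch state
        case "detect"
            attempt = attempt + 1;
            found = partQueue{min(attempt, numel(partQueue))};
            if isempty(found)
                % Count the failure and stop after the third one.
                failedDetections = failedDetections + 1;
                if failedDetections >= maxFailedDetections
                    state = "done";
                end
            else
                failedDetections = 0;   % assumed reset on success
                state = "pickAndPlace";
            end
        case "pickAndPlace"
            % Stand-in for the pick-and-place motions of each found part.
            placedParts = [placedParts, found]; %#ok<AGROW>
            state = "detect";
    end
end
```

In the actual example, the transitions are driven by input events that the command functions send to the chart, so no polling loop of this kind is involved.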

Observe the Simulation States

During execution, the active states at each point in time are highlighted in blue in the Stateflow chart. This helps you keep track of what the robot does and when. You can click through the subsystems to see the details of the state in action.

Visualize the Pick-and-Place Action

The example uses interactiveRigidBodyTree for robot visualization. The visualization shows the robot in the working area as it moves parts around. The robot avoids obstacles in the environment (blue cylinder) and places objects on the top or bottom shelf based on their classification. The robot continues working until all parts have been placed.

References

[1] Schulman, J., et al. "Motion planning with sequential convex optimization and convex collision checking." The International Journal of Robotics Research 33.9 (2014): 1251-1270.