Using Deep Learning to Automate Radar Data Quality Control at Sea

By Dr. Rune Gangeskar, Miros

For ships at sea, accurate measurements of ocean waves, currents, and speed through water are valuable for a wide variety of tasks, including fuel optimization and navigation in constrained areas. Even small errors in speed-through-water measurements, for example, can result in large errors in calculations of vessel performance and increase fuel usage by tens of tons each day. Traditionally, speed through water has been measured via underwater speed log instruments, which estimate speed using the difference in water pressure on the ship’s hull (pitometer log), through the Doppler shift of a sonar signal (Doppler velocity log), or through the signal generated by the interaction between an energized coil and the moving water body (electromagnetic log). These systems can be costly to maintain and tend to be susceptible to bubbles, turbulence, or other disturbances caused by the ship’s motion.

Here at Miros, we designed a sensor system called Wavex that accurately measures waves, current, and speed through water. The system processes digitized images from conventional marine X-band navigation radars, eliminating the disturbance issues and maintenance overhead associated with underwater sensors. We further increased the performance and reliability of Wavex by using a deep learning network to automatically identify radar images taken under poor measurement conditions, such as heavy precipitation (Figure 1).

In the case of rain showers, we can disregard the disturbed areas of the radar image and use only the undisturbed areas to obtain measurements. The network we created using MATLAB® and Deep Learning Toolbox™ identifies precipitation with greater than 97% accuracy and wind drops with greater than 99% accuracy.

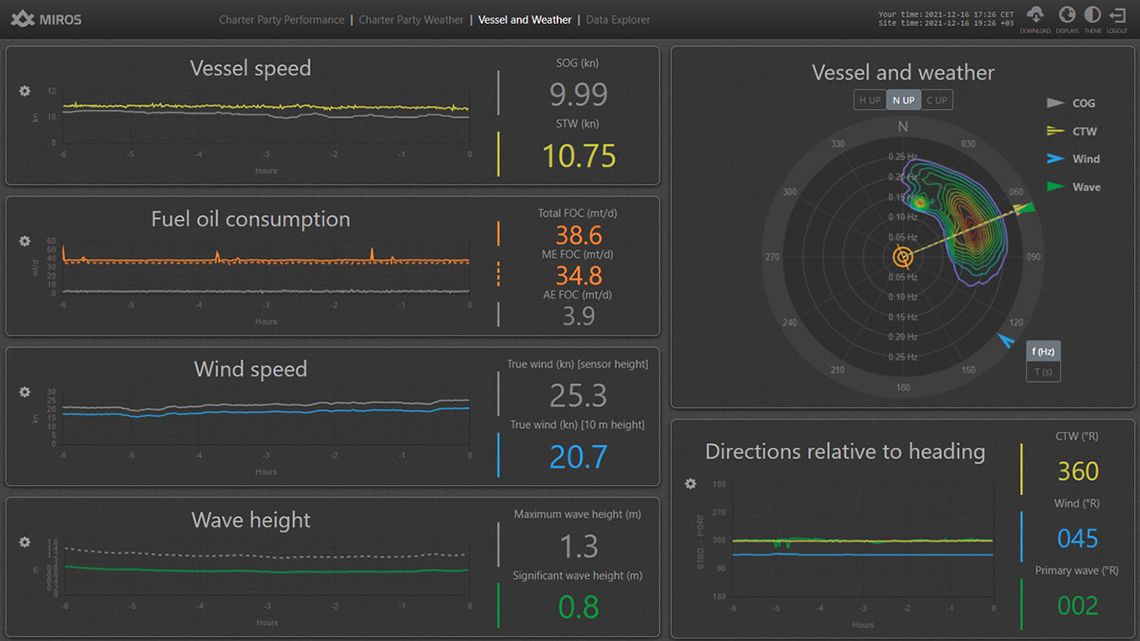

Unlike traditional image processing algorithms that would have required calibration for different measurement conditions, geometries, and radar types, the deep learning network we designed in MATLAB is highly accurate across a wide range of measurement scenarios, without requiring adjustment or calibration. Once we trained and validated the network in MATLAB, we used MATLAB Compiler™ to deploy it as a standalone application into a Wavex system that provides near real-time measurements of speed through water, current, scaled directional wave spectra, and integrated wave parameters such as wave height (Figure 2).

Radar-Based Sea State Measurements and the Effects of Wind and Rain

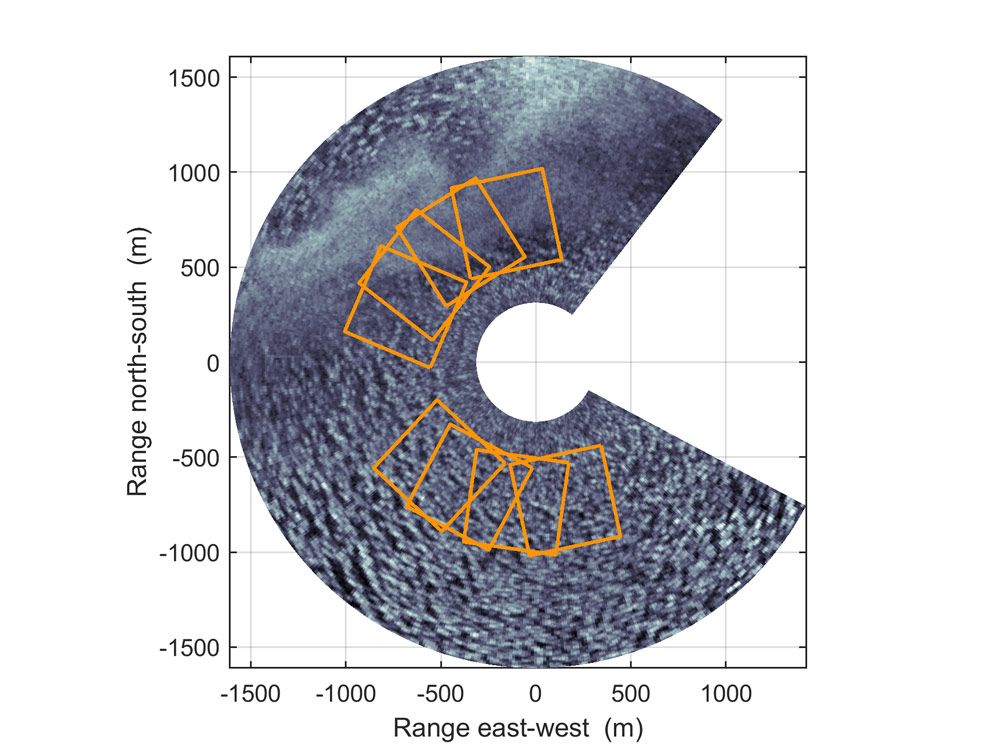

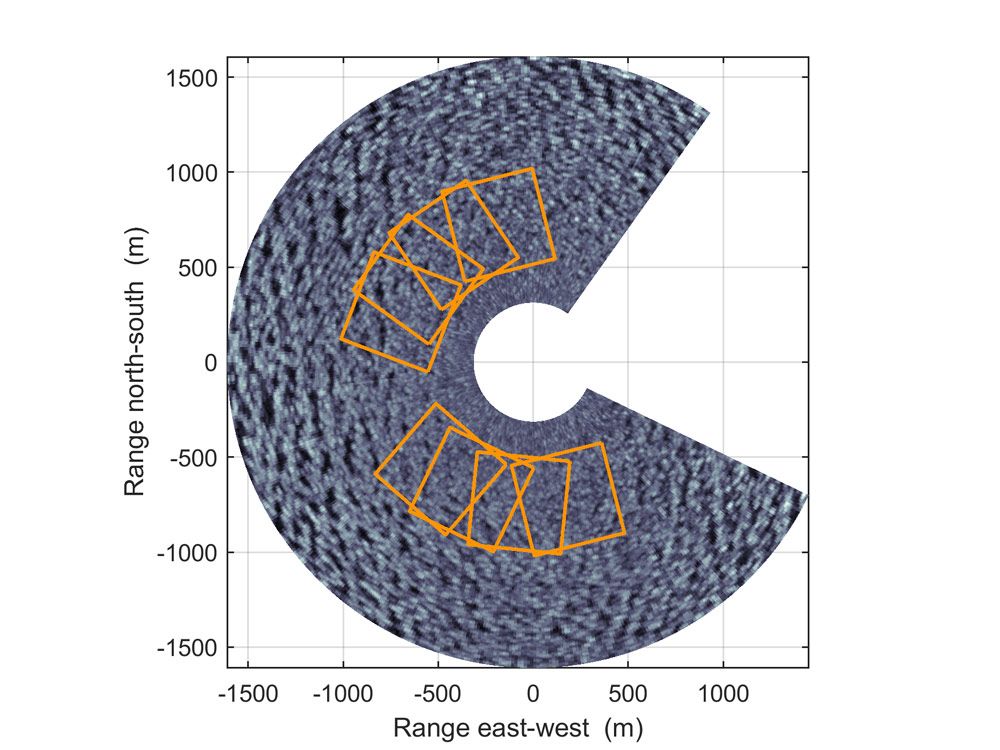

A typical marine X-band radar antenna rotates at a rate of 15 to 48 revolutions per minute, generating digitized images in which wave patterns are clearly visible (Figure 3). Wavex systems extract Cartesian image sections from the digitized images and then process these sections using algorithms developed in MATLAB. The algorithms apply noise filtering and perform 3D fast Fourier transforms (FFTs) on time series of the Cartesian images, producing 3D wave spectra with information about the power present at various wavenumbers and frequencies. The algorithms then use the wavenumber-frequency spectra to estimate currents and speed through water, as well as scaled wave spectra and integrated wave parameters.
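The article does not spell out how the wavenumber-frequency spectra are turned into a current estimate. A common approach in the radar oceanography literature, which may or may not match what Wavex does, is to fit the Doppler-shifted linear dispersion relation to the spectral peaks. The sketch below assumes deep water, a uniform current, and peaks that have already been picked from the 3D spectrum; the function names are illustrative only.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def deep_water_omega(kx, ky, ux=0.0, uy=0.0):
    """Angular frequency (rad/s) of a deep-water wave with wavenumber
    (kx, ky) in rad/m, Doppler-shifted by a uniform current (ux, uy) in m/s."""
    k = math.hypot(kx, ky)
    return math.sqrt(G * k) + kx * ux + ky * uy


def fit_current(peaks):
    """Least-squares estimate of (ux, uy) from (kx, ky, omega) spectral peaks.

    The residual omega - sqrt(g*|k|) is modeled as kx*ux + ky*uy, which
    leads to a 2x2 system of normal equations solved here in closed form.
    """
    sxx = sxy = syy = bx = by = 0.0
    for kx, ky, omega in peaks:
        residual = omega - math.sqrt(G * math.hypot(kx, ky))
        sxx += kx * kx
        sxy += kx * ky
        syy += ky * ky
        bx += kx * residual
        by += ky * residual
    det = sxx * syy - sxy * sxy
    scale = sxx * syy
    if scale == 0.0 or abs(det) <= 1e-12 * scale:
        raise ValueError("wavenumbers are collinear; current is not identifiable")
    ux = (bx * syy - by * sxy) / det
    uy = (by * sxx - bx * sxy) / det
    return ux, uy


if __name__ == "__main__":
    # Synthetic peaks generated with a known current, then recovered by the fit.
    wavenumbers = [(0.05, 0.0), (0.0, 0.08), (0.06, 0.04), (-0.03, 0.07)]
    peaks = [(kx, ky, deep_water_omega(kx, ky, 0.5, -0.3)) for kx, ky in wavenumbers]
    print(fit_current(peaks))
```

When the radar is mounted on a moving ship, a velocity fitted this way in the ship's frame is the water's motion relative to the vessel, which is how a spectrum-based method can yield speed through water.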

Certain environmental conditions, such as low wind speeds and rain showers, cause distortions in the digitized images, making it difficult to extract meaningful information (Figure 4). Our goal with deep learning was to create a network that automatically identified those Cartesian sections that were too distorted to be used for various sea state measurements.

Applying Deep Learning for Image Classification

The first step in applying deep learning to an image classification problem was to acquire and label images showing various combinations of wind drop and precipitation to train the network. For this purpose, we collected a set of more than 7 million Cartesian image sections from six different Wavex systems over the span of more than a decade. We labeled each image section as being from one of five categories: no wind speed drop or precipitation, notable precipitation, notable wind drop, notable precipitation and wind drop, and unclassified. To reduce the required effort and make the labeling practically feasible, we used a combination of visual evaluation and automated labeling using available data from other sources, such as wind data collected from shipboard sensors.
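The five categories can be read as the combinations of two yes/no observations plus a fallback. The sketch below illustrates that mapping only. How the two flags were actually derived (visual inspection, wind sensor data) is not described in detail, and treating a missing flag as "unclassified" is an assumption made here for illustration.

```python
from enum import Enum
from typing import Optional


class Label(Enum):
    CLEAR = "no wind speed drop or precipitation"
    PRECIPITATION = "notable precipitation"
    WIND_DROP = "notable wind drop"
    PRECIPITATION_AND_WIND_DROP = "notable precipitation and wind drop"
    UNCLASSIFIED = "unclassified"


def assign_label(precipitation: Optional[bool], wind_drop: Optional[bool]) -> Label:
    """Map two observations to one of the five categories.

    None means the observation is unavailable; falling back to
    UNCLASSIFIED in that case is an assumption, not the article's rule.
    """
    if precipitation is None or wind_drop is None:
        return Label.UNCLASSIFIED
    if precipitation and wind_drop:
        return Label.PRECIPITATION_AND_WIND_DROP
    if precipitation:
        return Label.PRECIPITATION
    if wind_drop:
        return Label.WIND_DROP
    return Label.CLEAR
```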

Like others on my team, I had experience with MATLAB and some of the more classic machine learning topics, but no previous experience with deep learning applications. I started with the Deep Learning Toolbox tutorials and examples of using simple convolutional neural networks for deep learning image classification. As a first step, I tried some of the pretrained models, but soon found that I could get better results by building my own deep learning networks based on code examples I had seen. I experimented with various network configurations until settling on one with 23 layers. The network has a fairly standard structure. The image input layer is followed by five groups, each with a 2D convolutional layer, a batch normalization layer, a rectified linear unit (ReLU) layer, and a max pooling layer. In the final group, a fully connected layer is used in place of the max pooling layer. This group is followed by a softmax layer and the classification output layer (Figure 5).
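As a quick check of the layer count, the structure described above can be written out as an ordered list. The sketch below only enumerates layer types in order; filter sizes, filter counts, and the input image size are not given in the article and are deliberately left out, and the layer names are placeholders.

```python
def build_layer_names(num_groups=5):
    """List the layer types in order, following the description in the text."""
    layers = ["image_input"]
    for group in range(1, num_groups + 1):
        layers += [f"conv2d_{group}", f"batch_norm_{group}", f"relu_{group}"]
        # The last group ends in a fully connected layer instead of max pooling.
        if group < num_groups:
            layers.append(f"max_pool_{group}")
        else:
            layers.append("fully_connected")
    layers += ["softmax", "classification_output"]
    return layers


if __name__ == "__main__":
    names = build_layer_names()
    print(len(names))
    print(names)
```

One input layer, five groups of four layers, and the two closing layers give 1 + 5 × 4 + 2 = 23, which matches the stated depth.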

At first, I trained the network using data from individual Wavex systems, then confirmed that it could accurately classify images from the other systems. Later, I trained it using images from all the systems together to improve accuracy across all radar types and operating conditions. I then experimented with changes to the network to further improve its accuracy. For example, I tried different sizes for the first convolutional layers, various network depths, and different normalizations on the image input layer.
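The first experiment, training on one system and checking against the others, amounts to a split by data source. A minimal sketch of that split follows. The system identifiers and sample values are hypothetical, and the code only partitions the data; it does not train anything.

```python
def single_system_splits(samples):
    """Yield (train_system, train_items, test_items) for each system.

    `samples` is a list of (system_id, item) pairs. Each split trains on
    one system's items and holds out the items from every other system.
    """
    systems = sorted({system_id for system_id, _ in samples})
    for train_system in systems:
        train = [item for system_id, item in samples if system_id == train_system]
        test = [item for system_id, item in samples if system_id != train_system]
        yield train_system, train, test


if __name__ == "__main__":
    # Hypothetical identifiers standing in for image sections from three systems.
    demo = [("A", "a1"), ("A", "a2"), ("B", "b1"), ("C", "c1"), ("C", "c2")]
    for system, train, test in single_system_splits(demo):
        print(system, train, test)
```

Holding out entire systems rather than random images tests whether the classifier generalizes to radar types and installations it has never seen, which is the property the article emphasizes.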

Deployment and Future Plans

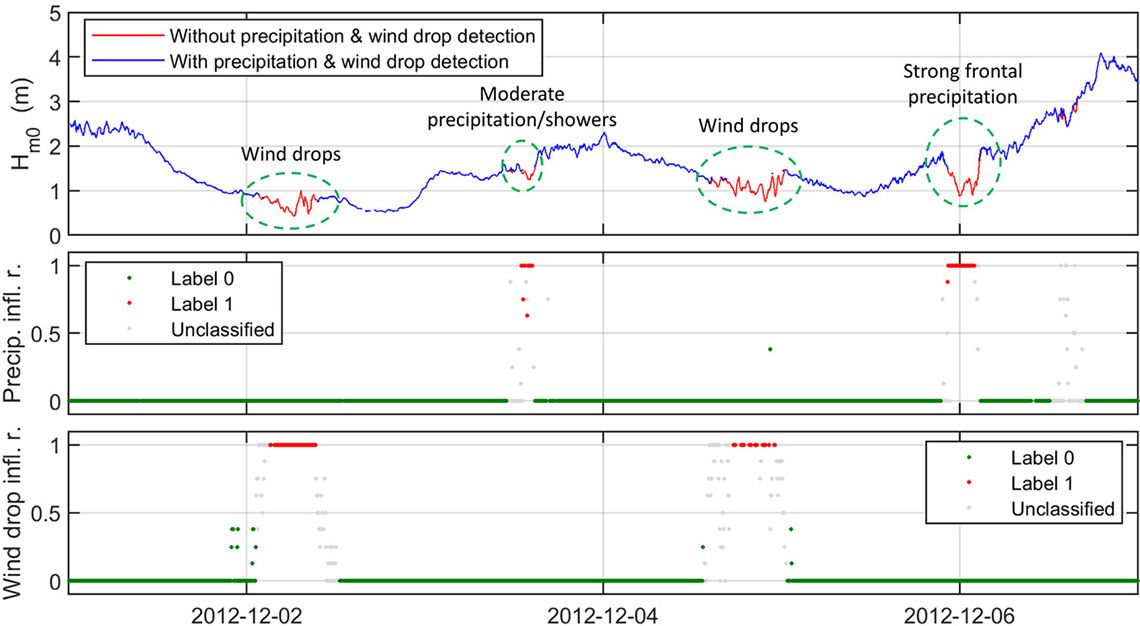

To integrate the final trained network and algorithms into Wavex systems, I used MATLAB Compiler to generate a standalone application. This enabled us to rapidly transition our R&D effort (the model development and training) into a production environment for automated quality control. The resulting application scans every Cartesian image section extracted from the polar images generated by the onboard radar system, classifies each one, and stores the results along with all other measurements in a database that the Wavex software accesses. After completing this integration, I used MATLAB visualizations to verify the system performance under various conditions, comparing the performance of the system when using automated wind drop and precipitation detection against a baseline in which the detection was disabled. Figure 6 shows an example from an eventful period in which the deep learning-based detection accurately distinguishes between various situations and correctly tags the data, allowing for optimized processing and improved information flow to the user.
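The classify, store, and filter flow can be sketched in a few lines. The example below uses an in-memory SQLite database purely for illustration. The table name, column names, and label strings are assumptions, not the actual Wavex schema, and the classification results are supplied as ready-made pairs rather than produced by a network.

```python
import sqlite3


def store_and_select_clear(classified_sections):
    """Store (section_id, label) pairs, then return the IDs labeled 'clear'.

    The schema is hypothetical; it only shows how stored quality labels
    could let downstream processing skip disturbed image sections.
    """
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute(
            "CREATE TABLE section_quality ("
            "section_id INTEGER PRIMARY KEY, label TEXT NOT NULL)"
        )
        conn.executemany(
            "INSERT INTO section_quality (section_id, label) VALUES (?, ?)",
            classified_sections,
        )
        rows = conn.execute(
            "SELECT section_id FROM section_quality "
            "WHERE label = ? ORDER BY section_id",
            ("clear",),
        ).fetchall()
    finally:
        conn.close()
    return [row[0] for row in rows]


if __name__ == "__main__":
    results = [(1, "clear"), (2, "precipitation"), (3, "clear"), (4, "wind_drop")]
    print(store_and_select_clear(results))
```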

The standalone deep learning application is now being tested in production Wavex systems on several ships. My team is currently applying similar deep learning approaches to image and signal classification in a couple of new applications.

Published 2022